From AI strategy to working proof of concept: how senior leaders get to real AI value in 2–3 months

Why most enterprise AI initiatives stall before they start

The question senior leaders are asking has changed.

When ChatGPT first launched, the question in the boardroom was "can we even use this safely?" Legal said no. Security said no. The tools were forbidden inside most enterprises. That phase is over. The question now is sharper: how do we create real business value with AI?

It sounds like a small shift. It is not. It is the difference between an experiment and a strategy. And it is where most enterprise AI investments stall.

Finding the right AI opportunities at the organizational level is harder than it looks. Individuals can experiment with ChatGPT. That tells you very little about whether your organization is ready to deliver real AI projects. The activity at the user level rarely translates into impact at the company level — and the gap between the two is where AI strategies go to die.

This article is the blueprint we use with senior leaders to close that gap. It comes from a joint webinar with Gianluca Mauro, founder of AI Academy, and the team at Design Sprint Academy.

What is AI readiness, and what dimensions should leaders assess?

AI readiness is a multi-dimensional assessment of whether an organization can successfully launch and scale AI initiatives. Most leaders treat it as a technology question. It is not. It is a strategic, organizational, and ethical question with a technology layer inside it.

There are seven dimensions worth assessing before any AI investment decision:

- Strategy — Are AI initiatives aligned with organizational goals and objectives? Does a clear AI strategy exist, or is the organization reacting to vendor pitches?

- Process and governance — Are the right processes in place? Are the people accountable for running them named? Are decision rights clear?

- People and talent — Does the workforce have the skills to use AI tools well and to run AI projects? Is there a development plan, or are individuals fending for themselves?

- Data — Is the right data available, accessible, and of usable quality? This is often the silent blocker.

- Technology — Is the infrastructure in place? Can AI systems integrate with existing systems without two-year IT projects?

- Culture — Is the organization ready to use AI, or are there unaddressed objections inside the workforce that will quietly kill adoption?

- Ethics — Are ethical guidelines defined so AI cannot be misused, intentionally or not?

Most leaders are not aware they need to assess all seven dimensions before committing budget. The result is a familiar pattern: a strong AI vendor pitch, an enthusiastic pilot, and an investment that runs into a wall that was always there — just unseen.
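One way to make the seven-dimension check concrete is a simple scorecard. The sketch below is an illustration only: the 1-5 scale, the threshold of 3, and the `assess_readiness` helper are assumptions, not part of the workshop material. It flags the weakest dimensions instead of averaging them, since a single low score is the "wall" described above.

```python
# The seven dimensions named in the article. The 1-5 scale and the
# threshold below are illustrative assumptions, not part of the source.
DIMENSIONS = [
    "strategy",
    "process_and_governance",
    "people_and_talent",
    "data",
    "technology",
    "culture",
    "ethics",
]


def assess_readiness(scores: dict[str, int], threshold: int = 3) -> dict:
    """Flag every dimension scored below `threshold` on a 1-5 scale."""
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        # Refuse to report on a partial assessment: all seven must be scored.
        raise ValueError(f"unassessed dimensions: {missing}")
    blockers = [d for d in DIMENSIONS if scores[d] < threshold]
    weakest = min(DIMENSIONS, key=lambda d: scores[d])
    return {"blockers": blockers, "weakest": weakest, "ready": not blockers}


# Hypothetical self-assessment: strong everywhere except data.
example = assess_readiness({
    "strategy": 4, "process_and_governance": 3, "people_and_talent": 3,
    "data": 2, "technology": 4, "culture": 3, "ethics": 4,
})
print(example)
```

Reporting blockers instead of a single average keeps a strong technology score from hiding a weak data or culture score.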

Why senior stakeholders disagree about where AI should be applied

In most enterprises, the people in the room when AI strategy is decided come from radically different functions — and each one has a different priority for AI.

- Finance wants fraud detection, risk modeling, personalized financial advice, customer service support.

- Sales and marketing wants forecasting, CRM intelligence, automated campaign optimization.

- Legal wants contract review, compliance monitoring, regulatory analysis.

- Supply chain wants demand forecasting, predictive maintenance, logistics optimization, supplier management.

- Operations wants workflow automation, anomaly detection, throughput improvement.

All of these are legitimate AI use cases. None of them can be the answer for the whole organization. When leadership says "let's do AI," the implicit follow-up ("where, and for what?") is rarely answered explicitly. Each function pulls in a different direction. The result is fragmentation: scattered pilots, no shared definition of success, and no compounding learning.

Closing this gap requires structured alignment, not a vendor demo. That is what the AI Opportunity Mapping Workshop exists to produce.

What is the AI Opportunity Mapping Workshop?

The AI Opportunity Mapping Workshop is a structured one-day session (typically run as two half-days) designed to help senior leaders educate themselves on AI, identify high-value opportunities specific to their organization, and prioritize where to invest first.

It is co-facilitated by Design Sprint Academy and AI Academy. Design Sprint Academy brings the strategic alignment method. AI Academy brings deep technical expertise on what AI can actually do.

The workshop has three goals:

Goal 1: Educate

Senior leaders need to take direction-setting decisions on a transformative technology. They cannot do that without a working understanding of what AI is, what it can do today, and what is hype. This block uses real case studies from comparable organizations to build a foundation.

McKinsey research found that high-performing AI organizations have a substantially higher percentage of senior leaders who clearly understand how generative AI creates business value. The correlation is not surprising. What is surprising is how few enterprises have invested in this foundation.

There is a second benefit leaders rarely discuss until afterwards: confidence. After the education block, executives report being able to engage with AI vendors, internal stakeholders, and external peers in a substantively different way. They stop nodding politely at AI claims and start interrogating them.

Goal 2: Ideate

Knowledge without application is wasted. Once leaders understand what AI can do, the room moves to "what does this mean for our business?" For each capability discussed, the team brainstorms specific opportunities for their own organization, business units, and functions.

This stage produces a long list of potential AI ideas grounded in the company's actual context, not generic vendor categories.

Goal 3: Strategize

Ideas without prioritization are noise. The final block applies a structured prioritization to the long list — weighing each opportunity against business value, technical feasibility, data readiness, and the strategic fit specific to the organization.

The output is a short, prioritized portfolio of AI opportunities ready to enter the validation phase. Critically, it includes both what to start now and what to defer — saving the organization from spreading thin across dozens of pilots.
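The prioritization step can be sketched as a weighted score over the four criteria named above. Everything specific here is an assumption for illustration: the weights, the 1-5 ratings, the `start_now` cut-off, and the example ratings are hypothetical, and the workshop may use a different method entirely.

```python
from dataclasses import dataclass

# Hypothetical weights for the four criteria named in the article.
WEIGHTS = {
    "business_value": 0.4,
    "technical_feasibility": 0.2,
    "data_readiness": 0.2,
    "strategic_fit": 0.2,
}


@dataclass
class Opportunity:
    name: str
    ratings: dict[str, int]  # 1-5 per criterion, agreed in the room


def score(opp: Opportunity) -> float:
    """Weighted sum of the ratings across all four criteria."""
    return sum(WEIGHTS[c] * opp.ratings[c] for c in WEIGHTS)


def prioritize(opps: list[Opportunity], start_now: int = 2):
    """Rank by score and split into 'start now' and 'defer' lists."""
    ranked = sorted(opps, key=score, reverse=True)
    return ranked[:start_now], ranked[start_now:]


# Illustrative ratings only; the use cases come from the function list above.
long_list = [
    Opportunity("workflow automation", {"business_value": 3, "technical_feasibility": 5,
                                        "data_readiness": 4, "strategic_fit": 2}),
    Opportunity("contract review", {"business_value": 4, "technical_feasibility": 4,
                                    "data_readiness": 3, "strategic_fit": 4}),
    Opportunity("demand forecasting", {"business_value": 5, "technical_feasibility": 3,
                                       "data_readiness": 2, "strategic_fit": 3}),
]
now, later = prioritize(long_list)
print([o.name for o in now], [o.name for o in later])
```

The explicit "defer" list matters as much as the "start now" list: it records what was considered and consciously postponed.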

McKinsey's research again finds that high-performing AI organizations have a clear advantage in identifying the right project for today versus the right project for later. This is the muscle the workshop is designed to build.

What does the research say about where AI works — and where it backfires?

A Harvard Business School / Boston Consulting Group study tested what happens when knowledge workers use AI for tasks inside what the researchers call the frontier of AI capability — versus tasks outside that frontier.

The findings are unusually clear.

Inside the frontier (right use cases):

- Lower-skilled workers improved performance by 43%.

- Higher-skilled workers improved performance by 17%.

- Both groups produced substantively better work.

Outside the frontier (wrong use cases):

- Recommendation quality dropped.

- Workers using AI were 21% more likely to recommend the wrong answer than workers not using AI on the same task.

The lesson is not that AI works or does not work. The lesson is that AI deployed against the right use cases creates significant business value, and AI deployed against the wrong use cases actively destroys value. Choosing well matters more than choosing fast.

This is why opportunity mapping is the foundation of AI strategy. The cost of building the wrong AI product is not just the wasted investment — it is the negative performance impact on the people who use it.

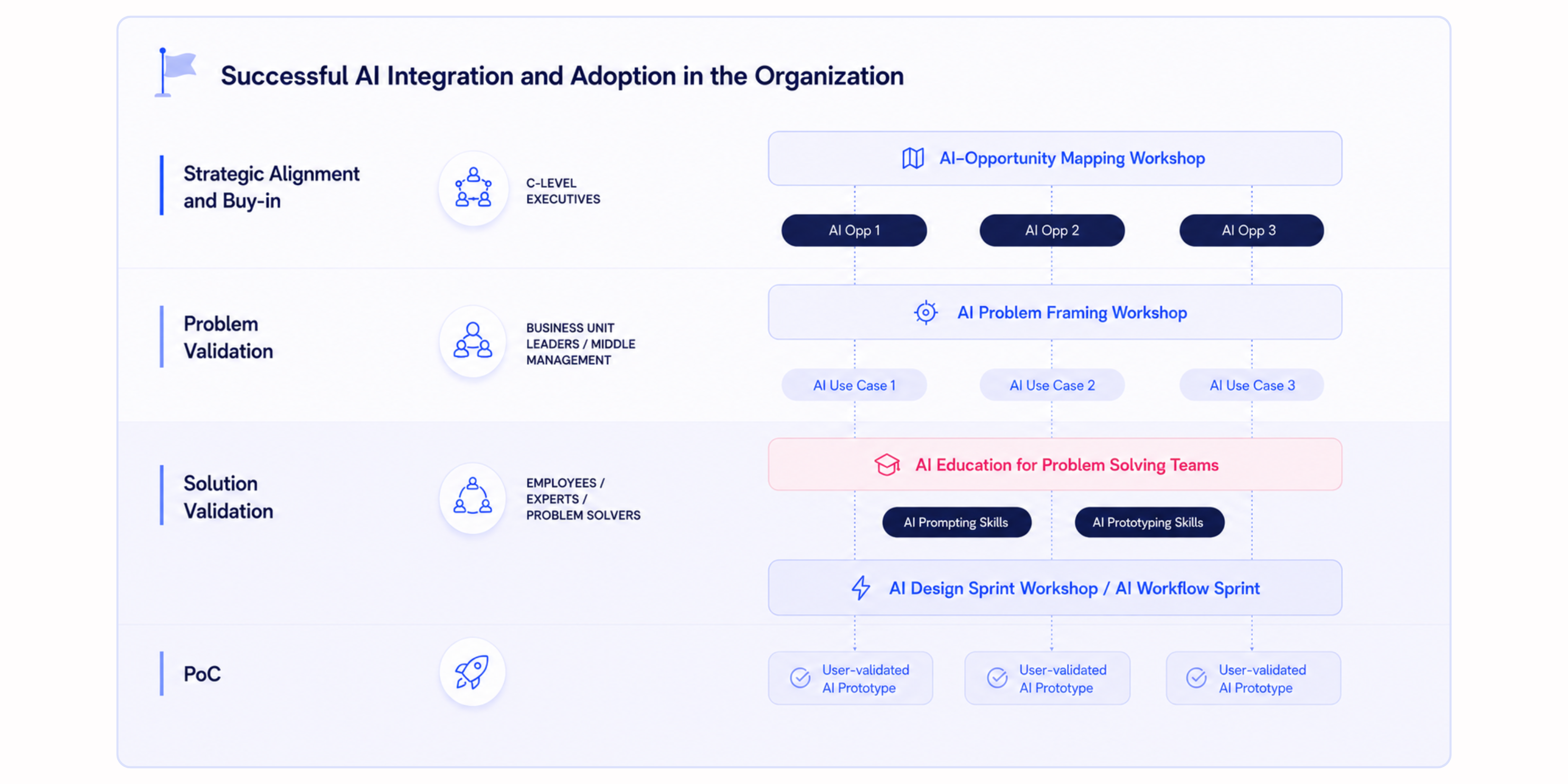

What is the three-stage blueprint for launching AI in an enterprise?

The blueprint is a three-stage process that takes an organization from no AI strategy to working, validated AI proofs of concept in two to three months. Each stage involves the right level of the organization at the right moment.

Stage 1: Strategic alignment (Leadership)

Who is in the room: C-level executives, senior decision-makers, AI investment owners.

The output: A prioritized portfolio of broad AI opportunity areas, agreed upon by leadership.

The method: AI Opportunity Mapping Workshop — educate, ideate, strategize.

Why it has to be first: AI is transformative. Direction has to come from the level of the organization that owns transformation. Bottom-up adoption (rolling ChatGPT licenses out to staff) creates activity, not strategy.

Stage 2: Problem validation (Middle management)

Who is in the room: Directors, heads of business units, domain experts within the prioritized opportunity area.

The output: Clear, validated AI problem statements — specific use cases worth solving.

The method: AI Problem Framing — a one-day strategic workshop that surfaces the right problem inside a chosen opportunity area. The team examines the problem from multiple frames: business value, customer or user pain, and operational context. It maps the territory using customer journeys or process blueprints to surface blind spots.

Why this stage exists: AI is a tool. A powerful one, but a tool. If it is not pointed at a real business or customer problem, the technology is irrelevant. Problem Framing makes sure the team commits to a specific, validated problem before anyone touches a prototype.

Stage 3: Solution validation (Cross-functional teams)

Who is in the room: Domain experts paired with AI specialists — the people closest to the problem and the people who can build.

The output: Working AI proofs of concept validated with real users.

The method: AI Workflow Sprint — a focused multi-day workshop that takes a validated problem statement and produces a tested prototype. Day one aligns on the problem and defines success. Day two generates and selects solutions. Day three builds a working prototype. Day four tests it with real users.

The difference from a traditional Design Sprint is the prototype itself. In an AI Workflow Sprint, the team is not building a clickable mockup — they are building a working AI proof of concept that can actually run.

How fast can you build a real AI proof of concept?

Faster than most enterprise IT processes assume. With the right problem framing, the right tools, and the right people in the room, a working AI proof of concept can be built in hours.

A real example: a German out-of-home advertising company that manages billboards across the country. Their problem was that clients regularly submitted poor poster designs that limited campaign effectiveness. The company wanted to give clients automated feedback on design quality — but graphic design was not their core business and they were not staffed to deliver consulting at scale.

In a short innovation workshop with AI Academy, two key insights emerged:

- The company already had a foundational asset: a published set of 10 golden rules for poster design.

- GPT-4 Vision — then a brand-new capability — could analyze images against rule sets defined in natural language.

Within roughly four hours, the team had a working proof of concept: a prompt that took the 10 golden rules as instructions, accepted a poster image as input, and returned a structured assessment of which rules were met or violated. They tested it against intentionally bad designs to verify it caught real problems.
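A proof of concept of this shape can be sketched in a few lines. The code below is not the team's actual prompt: the three rules are placeholders (the article does not list the company's 10 golden rules), the helper names are hypothetical, and the message layout assumes a chat-style API that accepts images as base64 data URLs. It only builds the request and parses a reply; no model is called.

```python
import base64
import json

# Placeholder rules for illustration; the real 10 golden rules are not
# given in the article.
RULES = [
    "Keep the headline short enough to read at a glance.",
    "Use strong contrast between text and background.",
    "Show the brand or logo clearly.",
]


def build_messages(rules: list[str], image_bytes: bytes, mime: str = "image/png") -> list[dict]:
    """Build chat messages: rules as instructions, poster as image input."""
    numbered = "\n".join(f"{i}. {rule}" for i, rule in enumerate(rules, 1))
    instructions = (
        "You review poster designs against the rules below.\n"
        f"{numbered}\n"
        'Reply with JSON only: a list of objects with keys "rule" (number), '
        '"met" (true or false) and "comment" (one sentence).'
    )
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return [
        {"role": "system", "content": instructions},
        {"role": "user", "content": [
            {"type": "text", "text": "Assess this poster."},
            {"type": "image_url", "image_url": {"url": f"data:{mime};base64,{encoded}"}},
        ]},
    ]


def parse_assessment(reply: str, rule_count: int) -> dict[str, list[int]]:
    """Turn the model's JSON reply into lists of met and violated rules."""
    by_rule = {int(item["rule"]): bool(item["met"]) for item in json.loads(reply)}
    missing = [n for n in range(1, rule_count + 1) if n not in by_rule]
    if missing:
        # A skipped rule is a failed check, not a pass.
        raise ValueError(f"model skipped rules: {missing}")
    return {
        "met": sorted(n for n, ok in by_rule.items() if ok),
        "violated": sorted(n for n, ok in by_rule.items() if not ok),
    }


messages = build_messages(RULES, b"not-a-real-image")
sample_reply = json.dumps([
    {"rule": 1, "met": True, "comment": "Headline is five words."},
    {"rule": 2, "met": False, "comment": "Grey text on a grey background."},
    {"rule": 3, "met": True, "comment": "Logo is in the top corner."},
])
print(parse_assessment(sample_reply, len(RULES)))
```

Asking for JSON and rejecting replies that skip a rule is one simple way to get the "structured assessment" described above in a form that later automation can consume.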

The next steps after the workshop:

- Rigorous prompt engineering — not prompt crafting, but real reliability testing — to make sure the prompt produced consistent, accurate output.

- A no-code prototype using tools like Make and Zapier: clients sent a poster image to an email address, an automation triggered the AI, and a report came back in roughly five minutes.

- Customer testing for usability and clarity.

- Then, and only then, the data security, compliance, and IT integration work needed for production.
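The reliability testing in the first step can be approximated by running the same prompt several times against posters with known defects and measuring how often the answers agree. This is a minimal sketch under stated assumptions: `reliability_report` is a hypothetical helper, the `assess` callable stands in for whatever calls the model, and the stub below fakes a model that is wrong one time in four.

```python
import itertools
from collections import Counter
from typing import Callable


def reliability_report(
    assess: Callable[[str], frozenset[int]],
    cases: dict[str, frozenset[int]],
    runs: int = 5,
) -> dict[str, dict[str, float]]:
    """Run each labeled poster `runs` times; report consistency and accuracy.

    `assess` returns the set of violated rule numbers for a poster id.
    `cases` maps poster ids to the violations a human reviewer expects.
    """
    report = {}
    for poster_id, expected in cases.items():
        outputs = [assess(poster_id) for _ in range(runs)]
        _, top_count = Counter(outputs).most_common(1)[0]
        report[poster_id] = {
            # Share of runs that agree with the most frequent answer.
            "consistency": top_count / runs,
            # Share of runs that match the human-labeled answer exactly.
            "accuracy": sum(out == expected for out in outputs) / runs,
        }
    return report


# Stub standing in for the model: flags an extra rule on every third call.
_fake_outputs = itertools.cycle(
    [frozenset({2}), frozenset({2}), frozenset({2, 5}), frozenset({2})]
)


def stub_assess(poster_id: str) -> frozenset[int]:
    return next(_fake_outputs)


report = reliability_report(stub_assess, {"bad_poster_01": frozenset({2})}, runs=4)
print(report)
```

Tracking consistency separately from accuracy helps distinguish a prompt that is reliably wrong (fix the instructions) from one that is unstable (tighten the output format or sampling settings).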

The sequence matters. Most enterprise IT processes start at the security and compliance stage and move forward only if the project survives. That sequence kills most AI projects before anyone has tested whether the idea actually works for users. Reversing the order (prove the value, then secure it) is what makes proofs of concept in days rather than years possible.

The project went from internal workshop to public press release in roughly six months. The technology that made it possible (GPT-4 Vision) had been released the week before the workshop. Organizations that move at this speed compound an advantage. Organizations that wait six months for an internal procurement process to approve a vendor pilot do not.

Why does AI need to start small but aim big?

Because an AI proof of concept that has no path to scale is innovation theater — the AI version of the same pattern that has been killing corporate innovation programs for decades.

The rule is simple: the first version of an AI product should be the smallest useful thing, not a single component that is useless on its own. A car's MVP is not a wheel. It is a skateboard — something a person can actually use to get from A to B, primitive but complete.

For enterprise AI projects, this means:

- The first proof of concept should solve a real problem for real users from day one.

- It should produce visible value early enough to build internal stakeholder buy-in.

- It should be designed with the road map to scale in mind, even if scaling does not happen immediately.

A lot of enterprise AI programs fail because they do only one of the two: they build a tiny POC with no scaling path, or they try to build the full version and ship nothing for two years. The discipline of starting small while aiming big is what separates AI programs that compound from AI programs that produce slide decks.

Why is the "right people, right stage" sequence non-negotiable?

Because AI strategy fails when the wrong level of the organization is asked to make the wrong kind of decision.

This is the sequence that works:

- Senior leaders decide the strategic direction. They choose which broad opportunity areas matter for the business. They are not picking specific use cases or evaluating prompts — they are picking territory.

- Middle management and domain experts take a chosen territory and identify the specific problems worth solving inside it. They are close enough to the work to know which problems are real, which are political, and which are urgent.

- Cross-functional execution teams — designers, engineers, business owners, AI specialists — take a validated problem and produce a working solution.

When this sequence is broken, the failure modes are predictable:

- Senior leaders skipping their stage → no strategic direction; teams build cool things that do not matter.

- Middle management skipping their stage → wrong problem definition; teams build well-engineered solutions to problems no one has.

- Execution teams skipping their stage → no working proof; the strategy stays theoretical and stakeholders lose faith.

Most enterprise AI programs break this sequence at least once. Bottom-up rollouts — ChatGPT licenses for everyone, with no leadership direction — are the most common version of the break.

They produce activity. They rarely produce strategy.

Where to start

If you are responsible for AI inside your organization and the program has not produced what the board expected, the diagnosis is rarely a tooling problem. The pattern is almost always the same: strong activity at the user level, no structured strategy at the leadership level, and no system to convert one into the other.

The first move is alignment. Not another vendor demo. Not another set of pilots. A structured session that brings senior leaders into the same room, builds a shared understanding of what AI can actually do, and produces a prioritized portfolio of opportunities specific to your business.

From there the path is clear: validate the problem, validate the solution, then scale. Two to three months from start to working proof of concept. The companies that compound an AI advantage are the ones that walked this path early. The window for being early is not closed yet — but it is narrowing.
