Everyone claims to be an AI Facilitator. Here's what actually separates them.

If you are considering hiring an AI Facilitator — whether as a full-time role inside your organisation or as an external expert brought in to work on a specific business challenge — you will quickly discover that the search is harder than it should be.

That is not because good AI Facilitators don't exist. It is because the facilitation profession itself has no single identity, which makes it genuinely difficult to tell who you're actually looking at.

The first thing to get clear: teaching AI is not facilitating AI

A significant number of people calling themselves AI Facilitators are, more precisely, AI trainers. They run sessions on how to use AI tools — prompt engineering, Copilot onboarding, ChatGPT literacy, responsible AI awareness. They teach individuals and teams to use AI more effectively in their day-to-day work. That is valuable. It is also a completely different job.

An AI trainer's output is individual capability. Someone attends the session and leaves knowing how to do something they couldn't do before. The learning is personal. The transfer happens person by person.

An AI Facilitator's output is an organisational decision. A cross-functional team enters a structured session with a specific AI challenge and leaves with a concrete, documented decision: a validated use case, a tested prototype, or a build-or-stop call grounded in evidence. The output is not a skill. It is a deliverable the organisation can act on.

These are not variations of the same role. They require different capabilities, different methods, and produce entirely different results. Hiring an AI trainer when you need an AI Facilitator is one of the most common — and expensive — mistakes organisations make when building an AI practice. You end up with a more AI-literate workforce and no structured way to decide what to build with AI.

When you see the title AI Facilitator, the first question to ask is simple: what does a session with you produce? A capability your people take home individually — or a decision your organisation can act on?

Why choosing the right facilitator is harder than it looks

Facilitation has evolved significantly over the past decade. According to the 2026 SessionLab State of Facilitation report, practitioners today identify themselves in radically different ways: as consultants, trainers, L&D professionals, or strategic partners who navigate organisations through complexity. That range of self-description reflects a vast range of capability — but it also creates a genuine visibility problem for you as a buyer.

When you search for an AI Facilitator, you will encounter people who present themselves very differently.

- Some lead with skills: the ability to listen, stay neutral, manage conflict, and hold a room through difficult moments.

- Some lead with charisma, energy and engagement: psychological safety, participation design, keeping groups moving.

- Some lead with outcomes: decisions made, use cases validated, initiatives stopped before they became expensive.

- Some lead with methods and certifications.

All of these things matter.

But they matter in a specific relationship to each other — and getting that relationship right is what separates a facilitator who runs good sessions from one who consistently produces results you can act on.

The skills are necessary. A facilitator who can't read the room, manage competing voices, or build enough trust for honest conversations will struggle regardless of what method they follow. Active listening, neutrality, the ability to lead a group through the uncomfortable middle of a decision — these are real competencies, and you want them.

But skills alone have no floor. When the room is difficult, when the politics are messy, when pressure is high — skill without structure depends entirely on judgment in the moment. Two facilitators with the same skill level will produce very different outcomes if one is running a proven method and the other is improvising.

A good method is what makes skill reliable. The method provides the architecture: the right people in the room, the right tools at each stage of the session, the right sequence of activities that moves a group from divergence to convergence to a concrete decision. The facilitator's skill is what makes the method land well. Together, they produce something predictable: a specific output, every time, regardless of how hard the room was.

So when you evaluate an AI Facilitator, look for both. The interpersonal skill to navigate a difficult room — and the structured method that tells you precisely what you will have when the session ends. Not in general terms. As a specific artifact.

A good AI Facilitator can run four methods, all designed specifically for the demands of AI adoption. Here is what each one is, who it is for, what it produces, and why it matters.

⚠️ A note on bias. My bias. The four methods described below are the ones we built and use at Design Sprint Academy. We designed them, tested them, and refined them across sessions. We also train facilitators to run them inside large organisations. That hands-on experience is the basis for this recommendation, and it also means I am not a neutral observer. There are other facilitation methods and frameworks in the market. What I can tell you is what these four produce, why they work, and how they connect into a system. You can decide how to take my recommendation.

Two levels, four methods

One method runs at leadership level, with senior decision-makers in the room. Its job is to align the organisation on where AI should focus before any execution work begins.

Three methods run at execution level, with cross-functional Discovery Pods — the business owner, domain expert, technical lead, research or customer voice, and legal or compliance voice assembled around a specific challenge. A senior Decider is always present, but these sessions are built for the practitioners doing the work, not the executives setting strategy.

At leadership level

1. AI Opportunity Mapping for Executives

Most leadership teams arrive in the strategy room carrying different assumptions about what AI is actually for. One leader is focused on cost reduction. Another is thinking about customer experience. A third is worried about competitive pressure. A fourth is primarily concerned about governance and risk. None of them are wrong — but none of them are aligned either.

When those unresolved differences get passed downstream, they produce fragmented investment. Teams pursue different problems. Budgets split across initiatives that don't reinforce each other. The organisation ends up with AI activity but no AI traction.

AI Opportunity Mapping for Executives is the session that resolves this before it becomes expensive. The leadership team diverges on where AI could create the most value across the organisation — across functions, workflows, customer journeys, cost structures — and then converges on a shared direction. They also surface where the organisation is genuinely ready to act and where it isn't.

The output is a set of Big Idea Statements — one per strategic priority or business vertical that emerges from the session. Each statement is a bold, specific articulation of the AI-driven future the organisation is building toward in that area: a clear, single sentence that frames who it is for, what change it creates, and why it matters strategically. It is not a mission statement or a vision deck. It is a decision-making anchor.

What makes these statements valuable is not just their clarity; it is the alignment behind them. Every Big Idea Statement that comes out of this session has a named sponsor: a senior leader who was in the room when it was written, who shaped it, and who owns it going forward. That sponsorship is what turns a strategic direction into an organisational commitment, and it gives every downstream team a clear test for the AI initiatives they are asked to evaluate: does this serve the Big Idea our sponsor is accountable for?

Why it matters for you: If your leadership team hasn't produced these statements explicitly, then the AI Facilitator, and every team they work with, is operating without a shared map. This session is how you prevent that.

At execution level

The following three methods all run with cross-functional Discovery Pods assembled around a specific challenge. A senior Decider is always in the room with the authority to make a build, iterate, or stop call — but the sessions are designed for the execution team, not leadership.

2. AI Problem Framing

Once the organisation knows where to focus, AI Problem Framing translates that strategic direction into a specific, executable AI use case. This is the session where the abstract becomes concrete.

A cross-functional team evaluates the candidate AI opportunities on the table. Each one is assessed across four dimensions: is it desirable (do users actually want this?), feasible (can the organisation build it given current data and infrastructure?), viable (will it create real business value?), and pragmatic (can it be done within real constraints?). This is not a brainstorming session — every candidate is assessed, ranked, and either advanced or parked.

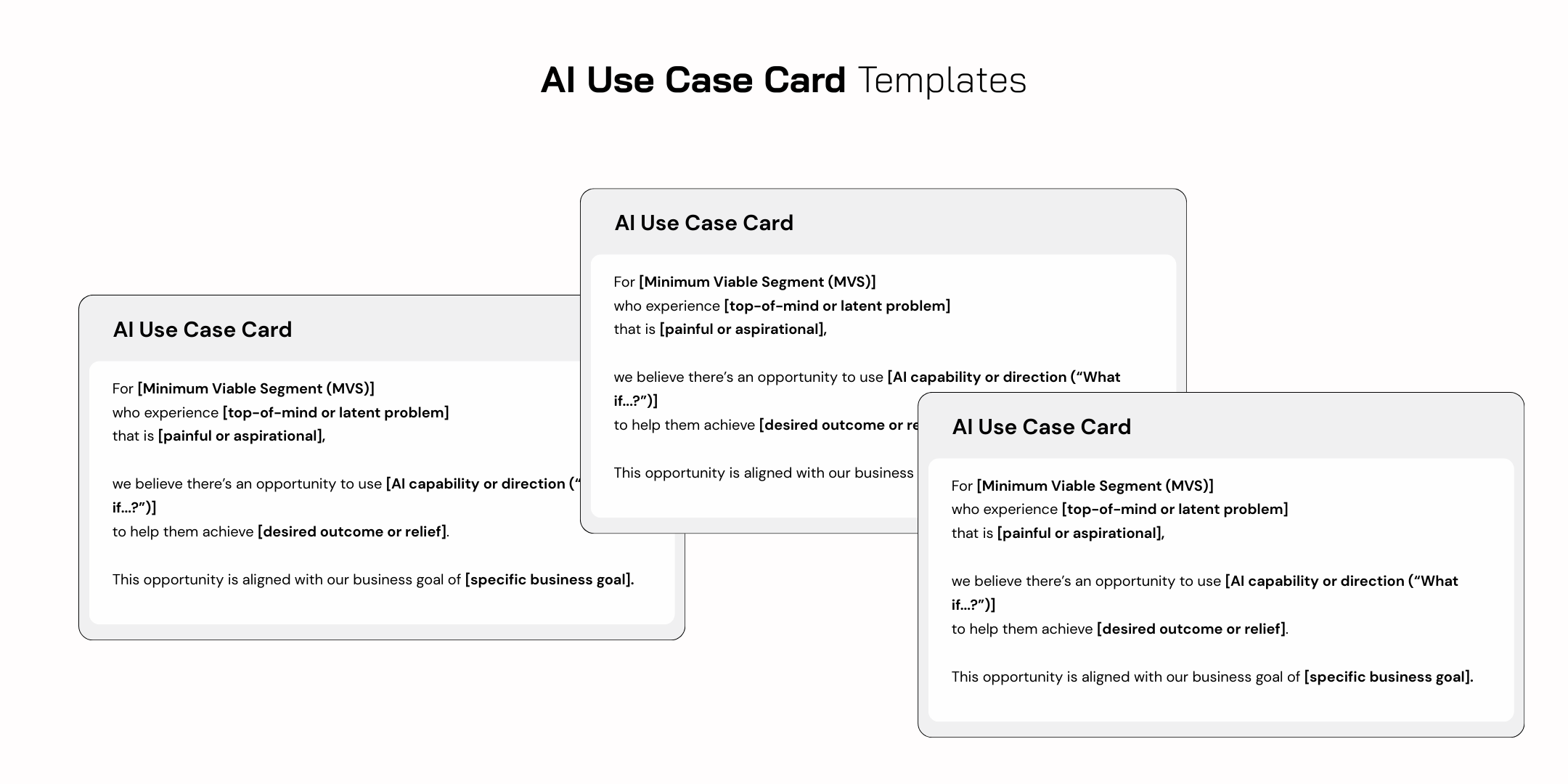

The output is an AI Use Case Card: a single, structured artifact that captures the user and their context, the specific problem being solved, exactly where and how AI adds value, the expected business impact, and the risks and open assumptions that need to be resolved before building begins. It is a decision — the one use case the team has collectively agreed is worth pursuing next, with the evidence and reasoning documented so that any senior stakeholder can review and act on it without needing to reconstruct the conversation.

Why it matters for you: Most AI pilots fail not because the technology didn't work but because the use case was never properly evaluated before engineering resources were committed. The AI Use Case Card is the filter that prevents that.

3. AI Workflow Sprint

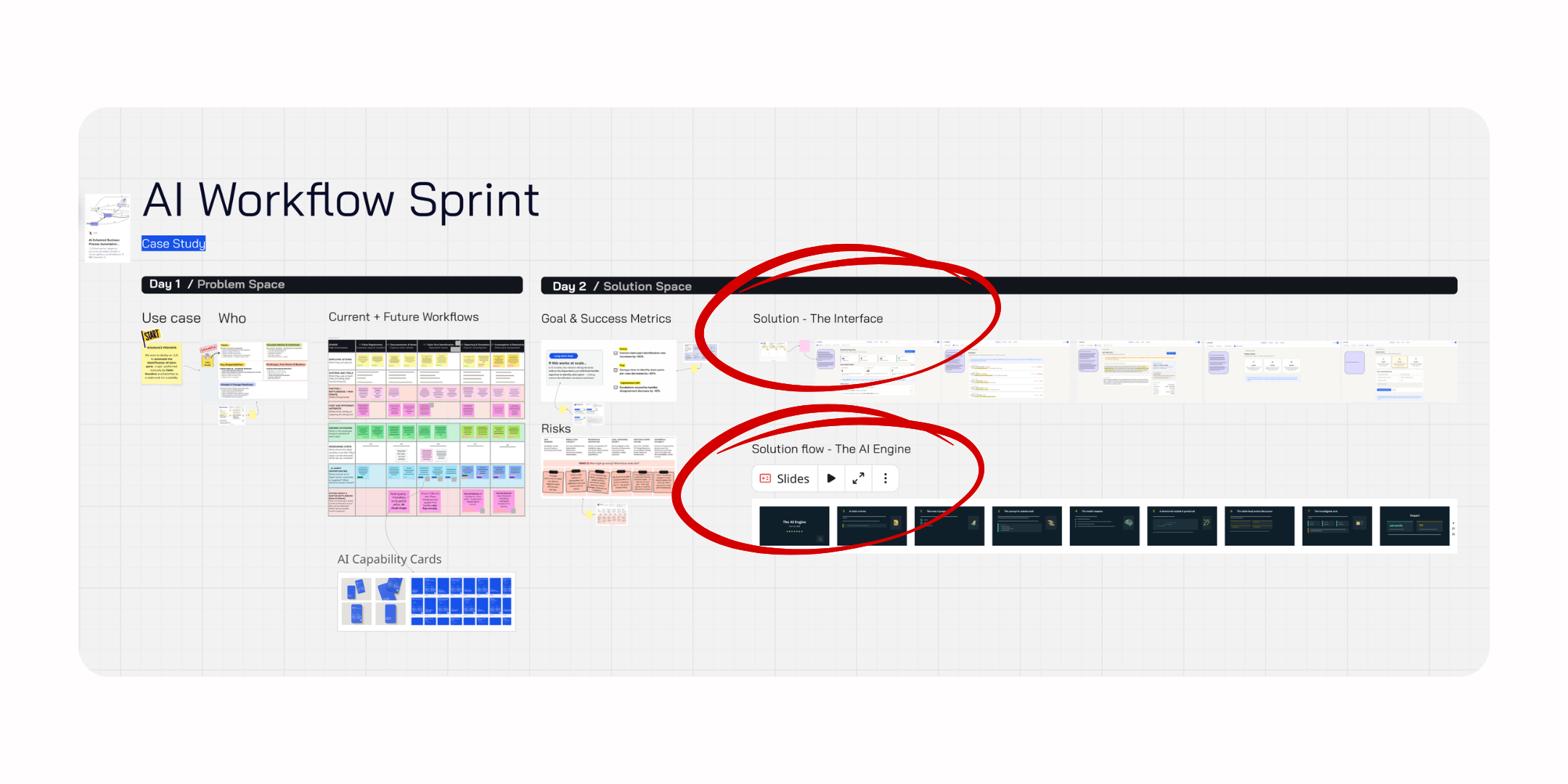

The AI Workflow Sprint runs over four days, but not everyone is in the room for all of them. The Discovery Pod — the cross-functional team of business owner, domain expert, technical lead, customer voice, and compliance voice — is present for the first two days only. That is where the discovery and design work happens, and it is the only commitment you are asking of those people.

Day 1 maps the current employee workflow in detail — every step, every decision point, every place where work slows down or breaks — and redesigns it to identify where AI assistance creates real value. The team understands the human work before designing the AI support for it. Day 2 defines a long-term goal and success metrics, surfaces the risks that could prevent success at scale, and converges on a solution concept and prototype blueprint.

After Day 2, the Discovery Pod hands off. Day 3 belongs to a smaller AI Build Trio — typically an AI engineer, a designer, and a technical lead — who take the blueprint and build a working AI agent MVP. Realistic enough to test, not polished enough to ship.

Day 4 is led by a dedicated Interviewer who runs five structured sessions with real employees, observing how they interact with the prototype. The insights are synthesised and brought back to the Decider for a clear sprint decision: scale, iterate, or stop.

The output is an AI agent MVP operating inside a redesigned employee workflow — a tested blueprint that gives your engineering team a concrete starting point and your leadership team a grounded decision, made before a single line of production code is written.

Why it matters for you: The AI Workflow Sprint compresses what would normally take six months of pilot work into four days. Ideas that don't survive validation are stopped with evidence. The ones that do survive have a tested prototype and real user data behind them.

4. AI Design Sprint

Where the AI Workflow Sprint focuses on employee-facing workflows, the AI Design Sprint is designed for customer-facing AI products and services. It is the Design Sprint methodology refined and adapted specifically for AI-native solutions — built for multi-functional teams with different vocabularies, tighter ethical and compliance constraints, and the particular challenge of validating AI-powered experiences with real users before any model training or development begins.

As in the AI Workflow Sprint, the Discovery Pod is only in the room for the first two days. Day 1 defines the AI challenge, maps the customer journey, surfaces assumptions about what AI could do, and sets the sprint target. Day 2 generates solution ideas, converges on the strongest AI-powered direction, and produces a prototype blueprint.

After Day 2, the Discovery Pod hands off. Day 3 belongs to a single Builder — someone who uses AI tools to build functional prototypes end to end. Landing pages, apps, dashboards, full interfaces — what used to require a team of engineers and designers can now be built by one person in a day. The Builder takes the blueprint from Day 2 and produces a working prototype realistic enough to generate genuine user reactions to both the experience and the AI behaviour.

Day 4 is led by a dedicated Interviewer who runs five structured sessions with real customers. The insights are synthesised and brought back to the Decider for a clear next step.

The output is a new AI-powered value proposition in the shape of a functional prototype — interactive enough that real users can navigate it and surface honest reactions: moments of trust, confusion, hesitation, or delight that no amount of internal debate would produce. Alongside the prototype, the team produces five documented user interviews and synthesised insights that tell you, with evidence, whether the direction is worth building — and if so, what to change before you do.

Why it matters for you: AI-powered products that don't work for the people they're designed for are expensive to fix after launch and impossible to recover from if trust is lost early. The AI Design Sprint is how you find out what works before you build it.

How the four methods connect

The four methods are not a menu. They are a progression. Each one produces an output the next one depends on.

AI Opportunity Mapping for Executives produces a set of Big Idea Statements: where should AI focus across the organisation, and who is accountable for each direction?

With that direction established, three execution-level methods take over.

AI Problem Framing produces an AI Use Case Card: of all the AI opportunities on the table, which specific one is worth pursuing next — and why?

AI Workflow Sprint produces an AI agent MVP inside a redesigned employee workflow: does this actually work for the employees it's designed for, and should the organisation build it?

AI Design Sprint produces a functional prototype of an AI-powered product or service: does this create real value for customers — and is it worth committing to development?

An organisation that runs these in sequence makes decisions at the right level, with the right people, at the right moment. An organisation that skips steps ends up with the wrong problem well-solved, or the right problem poorly scoped, or a prototype built on assumptions nobody tested.

Now go back to the question this article started with: how do you tell a good AI Facilitator from one who will produce good meetings but no real outcomes?

You look for both things together. The skill to hold a difficult room, manage conflict, build enough trust for honest conversations, and lead a group through the uncomfortable middle of a real decision. And the structured method that tells you precisely what the session will produce — not in general terms, but as a specific artifact with a named owner.

Skill without method is unreliable. Method without skill is mechanical. Together, they produce something an organisation can actually use: a decision, grounded in evidence, made by the right people, at the right moment.

That is the answer you want to hear when you ask what methods they run. A system — and the skill to run it well.
