Turner Construction: How to build an AI Operating Model that compounds

The starting point: 11,000 employees and decades of knowledge locked in people's heads

Turner Construction is one of the largest general contractors in the United States. At the point they engaged Design Sprint Academy, they had 11,000 employees, hundreds of active job sites running simultaneously, and decades of operational knowledge embedded in their people — not in systems, not in processes, but in the heads of experienced project managers, site supervisors, and directors who had solved the same class of problems hundreds of times.

Leadership knew AI was part of the answer to making that knowledge accessible and scalable. The challenge wasn't ambition. Turner's leadership had already committed to building AI capability at scale.

The challenge was the sequence:

Where do you start when the problem space is that large, the workforce is that distributed, and the pressure to show results is real?

This is the question most organizations in Turner's position get wrong. They start with tools. Or they appoint a team, give them a mandate, and hope something compounds.

Turner did something different. They started with problem framing.

What does an AI Operating Model look like in practice?

The engagement with Design Sprint Academy (DSA) followed three distinct phases. Each one built on the previous. Together, they produced something that looks very different from a typical AI rollout: an operating model Turner now runs independently, without DSA's involvement.

Phase 1: Aligning leadership on where AI should focus

Before any practical work began, DSA ran an AI Problem Framing session with Turner's Directors and VPs. The goal wasn't to generate AI use cases. The goal was to get leadership aligned on which problems were actually worth solving — and which ones weren't.

This distinction matters more than it might appear. Most AI initiatives arrive at the practitioner level already pre-loaded with solutions leadership has decided on upstairs. The use cases that get prioritized often reflect what's technically visible or politically convenient, rather than where genuine operational value is lost every day. Turner's leadership wanted to avoid that pattern.

The AI Problem Framing session brought the right people into the same room with a structured process for evaluating AI opportunities against what actually matters. Where is the workflow genuinely breaking today? What measurable outcome changes if this is fixed? What data exists, and is it usable? Which governance constraints need to be defined before anyone starts building?

The output was a prioritized set of AI opportunities — not a roadmap, not a strategy document, but a set of specific, defensible use cases with the reasoning behind each one documented. That output became the foundation for everything that followed.
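As a rough illustration, the four framing questions can be treated as a checklist that every candidate use case has to pass before it is shortlisted. The sketch below is not DSA's or Turner's actual tooling. The `Opportunity` fields, the function names, and the two sample entries are hypothetical and exist only to show the shape of the filter.

```python
from dataclasses import dataclass


@dataclass
class Opportunity:
    """One candidate AI use case, recorded against the four framing questions."""
    name: str
    workflow_breakpoint: str   # where is the workflow breaking today?
    measurable_outcome: str    # what measurable outcome changes if it is fixed?
    data_usable: bool          # does usable data exist?
    governance_defined: bool   # are governance constraints defined before building?
    reasoning: str = ""        # documented reasoning behind the call


def open_questions(opp: Opportunity) -> list[str]:
    """Return the framing questions that still lack a satisfactory answer."""
    gaps = []
    if not opp.workflow_breakpoint.strip():
        gaps.append("workflow_breakpoint")
    if not opp.measurable_outcome.strip():
        gaps.append("measurable_outcome")
    if not opp.data_usable:
        gaps.append("data_usable")
    if not opp.governance_defined:
        gaps.append("governance_defined")
    return gaps


def defensible(opps: list[Opportunity]) -> list[Opportunity]:
    """Keep only the opportunities with every framing question answered."""
    return [o for o in opps if not open_questions(o)]


# Invented placeholder entries, not real Turner use cases.
candidates = [
    Opportunity("example-a", "placeholder breakpoint", "placeholder metric",
                True, True, "all four questions answered"),
    Opportunity("example-b", "", "placeholder metric", True, False),
]
shortlist = defensible(candidates)
print([o.name for o in shortlist])
print(open_questions(candidates[1]))
```

The point of the design is that an opportunity is dropped for a named reason, so the reasoning behind each exclusion stays on record alongside the shortlist.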

Phase 2: Running AI Workflow Sprints across three teams

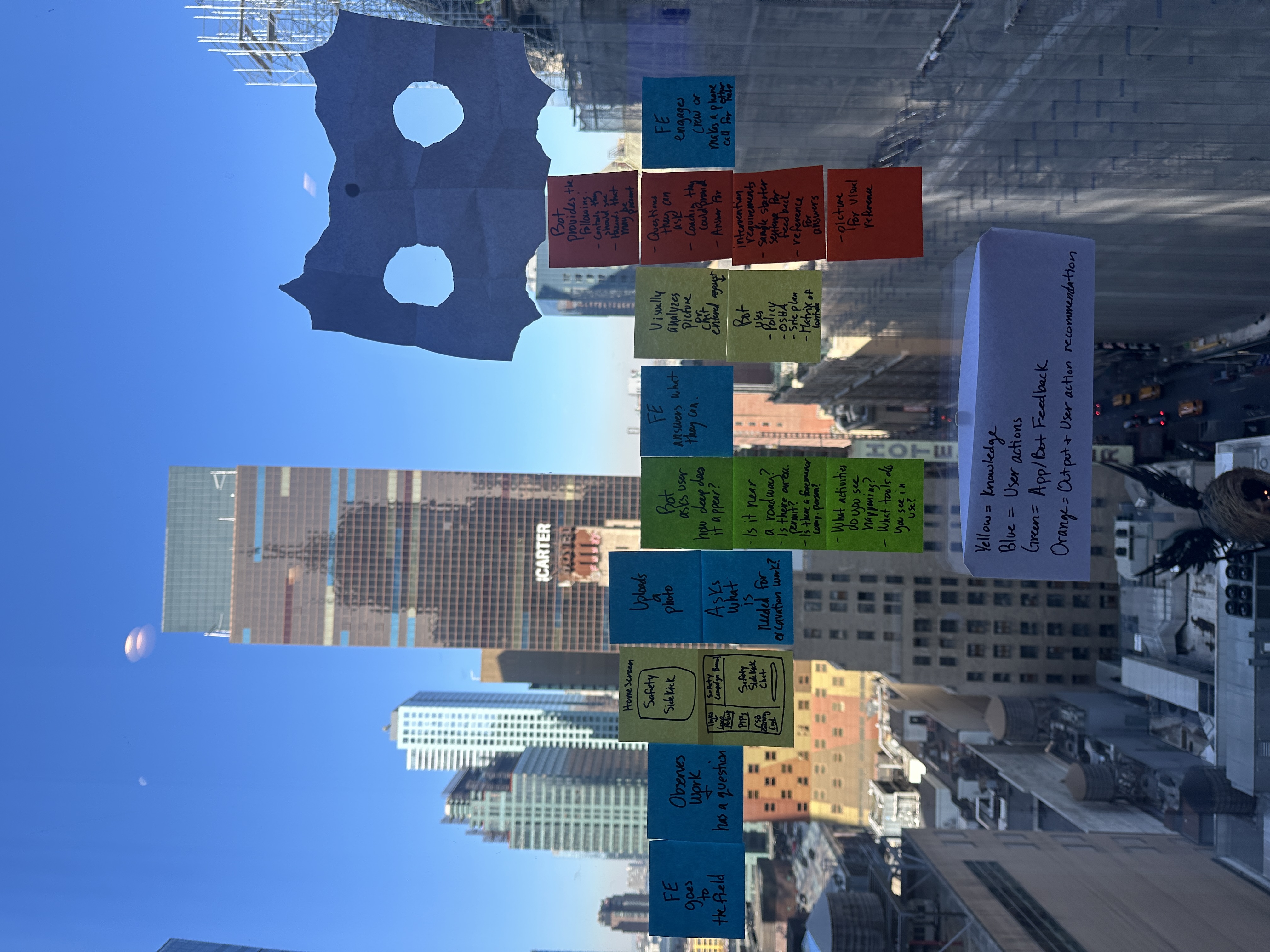

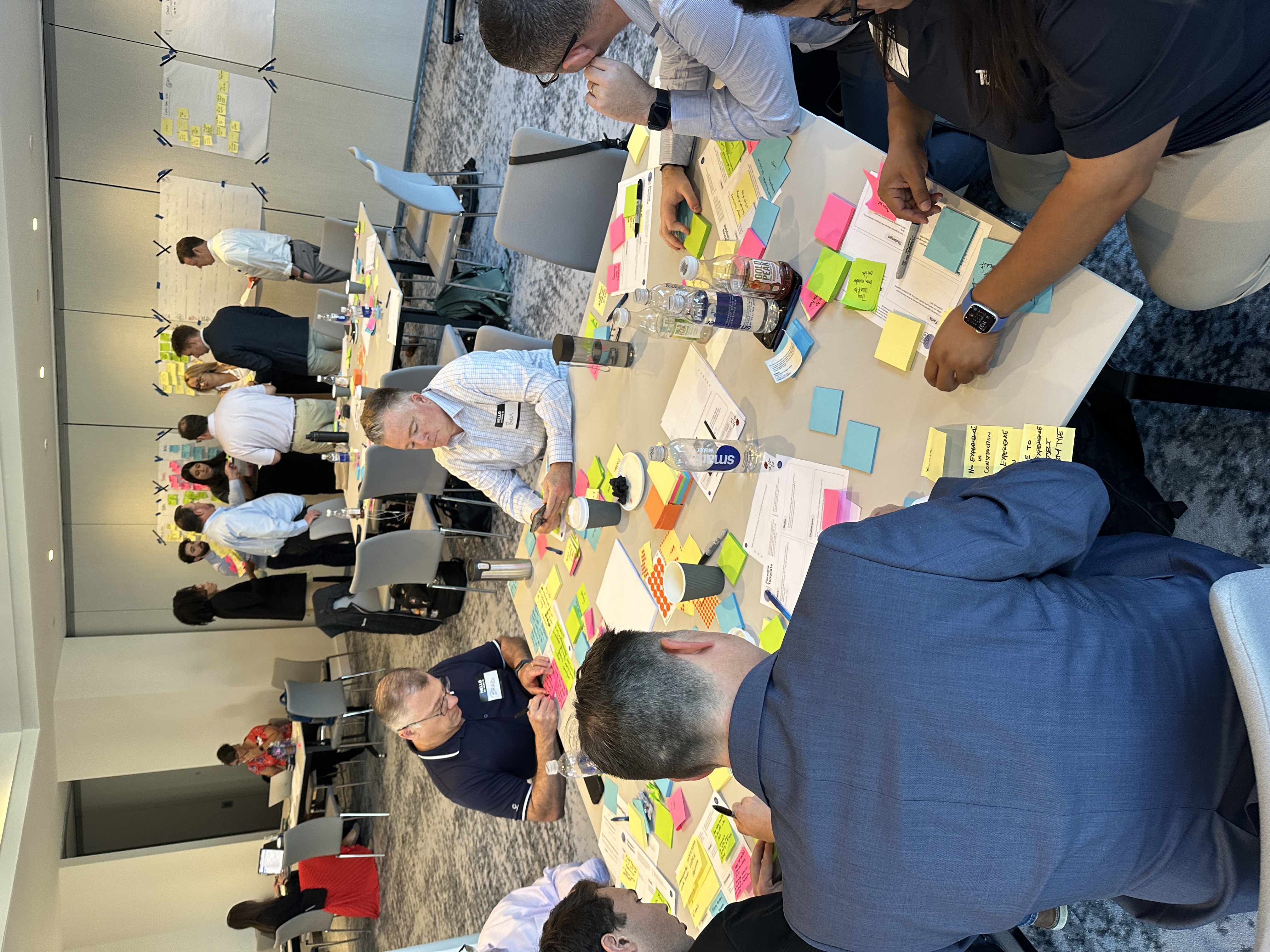

With the priority areas defined, DSA facilitated AI Workflow Sprints across three teams. Each sprint assembled a cross-functional Discovery Pod — domain experts, technical leads, data engineers, and the people who actually do the work that AI would be redesigning — and ran them through a structured four-day process.

The first two days belonged to the Discovery Pod: mapping the current employee workflow, identifying where time and decisions were lost, redesigning the workflow with AI integrated, and converging on a specific solution concept. Day three was a build day: a working AI agent prototype was built against the redesigned workflow. Day four was for testing: structured sessions with real Turner employees, synthesized findings, and a clear decision from the Decider to scale, iterate, or stop.

This is the mechanism that produces results at pace. Not a long discovery project. Not a proof-of-concept that runs for six months and surfaces its real constraints at month five. A structured, contained session that produces a validated prototype and a clear decision in four days — with the evidence to defend that decision at leadership level.

Across three teams, Turner ran three full sprint cycles. Each one produced an AI application tested against real workflows, with documented evidence of where value was created and where constraints needed resolution before production investment.

Phase 3: Training Turner's own people to run the methodology

The third phase is what separates an AI Lab from a series of workshops. DSA trained Turner's internal people to run the methodology independently — to facilitate AI Problem Framing sessions, to assemble and orchestrate Discovery Pods, and to run AI Workflow Sprints across teams without external support.

This is the phase most organizations skip. They run successful sessions with an external partner, see the results, and then assume the capability will transfer naturally. It doesn't. Facilitation looks easier than it is from the outside. The discipline that makes a Discovery Pod produce a clean decision rather than a good conversation — the session design, the sequencing, the management of group dynamics, the protection against the failure modes that derail most AI discovery work (status battles and AI hype) — is a trained skill. It doesn't transfer by observation.

Turner invested in that training. By the end of the engagement, their internal AI Facilitators were running the system. DSA stepped back.

What did Turner's AI Lab produce?

The results from Turner's AI Lab are specific enough to be worth naming directly.

400+ custom AI applications built internally, across business units, by Turner's own people — not outsourced, not built by a separate technical team.

70,000+ hours of annual capacity unlocked — operational time recovered across the business through AI-assisted workflows. That number is not a projection. It is the aggregate of validated outcomes from applications that survived the structured evaluation process and reached production.

An AI Lab operating model that runs without DSA today. Turner's internal AI Facilitators are assembling Discovery Pods, running problem framing sessions, and executing sprint cycles independently. The methodology is inside the organization. The external dependency is gone.

These three outcomes are not independent. They compound because they're connected. The 400+ applications were produced through a system, not through scattered experimentation. The 70,000+ hours of capacity were recovered because the use cases that reached production had been validated against real workflows before anyone committed to building them. And the operating model is running independently because Turner invested in training the people who run it, not just in running sessions.

Why most AI initiatives don't get the same results

The Turner outcome is specific, but the pattern is replicable — because the conditions that produced it can be deliberately built.

Most large organizations are two or more years into AI investment with a portfolio that looks like a collection of point solutions, scattered pilots, and training programs that haven't compounded into anything defensible at board level. The pressure is real. Clients are asking, competitors are shipping, and leadership is asking the same question everywhere: we've been investing, so why isn't this scaling?

The answer is almost never the technology.

It is the absence of a structured system for deciding what's worth building before anyone commits to building it.

Without that system, AI investment fragments: each team pursues its own direction, nobody filters for what creates genuine value, and the question at executive leadership team (ELT) level is still unanswered twelve months later.

Turner built that system deliberately, in sequence: leadership alignment first, practitioner validation second, internal capability third. Each phase depended on the previous one. Each one compounded.

A structured AI operating model is an exploration engine — a repeatable system that runs parallel to the core business, answering one question continuously: what is worth building with AI, and what is not, before serious resources are committed.

"A healthy AI Lab stops more ideas than it ships." That's how the system produces signal: by applying a filter rigorous enough to distinguish AI opportunities that create real value from ones that look good in a slide deck and fall apart in a real workflow. The ideas that don't survive are not failures. They are decisions that would have been six-month pilots if there had been no filter at all.

Turner's 400+ applications are the ideas that made it through. The ones that didn't make it through saved the organization the cost of finding out the hard way.

What can other organizations take from Turner's experience?

The Turner case offers several principles worth carrying into any enterprise AI operating model design.

Start with leadership alignment, not practitioner enthusiasm.

The AI use cases that compound are the ones leadership has genuinely prioritized against business outcomes — not the ones a motivated team championed from the bottom up. AI Problem Framing at the leadership level is what creates the mandate the rest of the system needs to operate with authority.

Validate before you build.

The AI Workflow Sprint's four-day cycle is designed to produce a tested prototype and a clear decision before any production engineering resources are committed. The organizations that build the most valuable AI applications are not the ones that build fastest. They are the ones that stop the wrong ideas early enough that their engineering capacity is focused only on what will actually work.

Internal capability is the only durable outcome.

The organizations that will lead in the AI era are not the ones that run the most external workshops. They are the ones that have trained enough internal AI Facilitators to run structured decision-making at scale, in parallel, across business units — without waiting for an external partner to be available. Turner built that. That is why the Lab runs without DSA today.

The operating model compounds; individual sessions don't.

One Discovery Pod produces a decision. Three pods across different business units produce a pattern. Twelve pods over twelve months produce something an organization can point to: a documented record of what was evaluated, what was stopped, what was validated, and what is now in production — with the reasoning behind every call. That record is what an ELT review requires. Individual sessions don't produce it.
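One way to picture that record is as a decision log with one entry per pod, which can then be rolled up for a leadership review. The sketch below is an assumption about how such a log could be structured, not a description of Turner's system. `PodRecord`, `summarize`, `missing_reasoning`, and the sample entries are hypothetical; only the three outcomes (scale, iterate, stop) come from the sprint process described earlier.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    """The three possible outcomes of a sprint."""
    SCALE = "scale"
    ITERATE = "iterate"
    STOP = "stop"


@dataclass(frozen=True)
class PodRecord:
    """One Discovery Pod outcome, with the reasoning behind the call."""
    pod: str
    business_unit: str
    decision: Decision
    reasoning: str


def summarize(log: list[PodRecord]) -> dict[str, int]:
    """Count decisions by type, including types with no entries yet."""
    counts = Counter(record.decision for record in log)
    return {d.value: counts.get(d, 0) for d in Decision}


def missing_reasoning(log: list[PodRecord]) -> list[str]:
    """List pods whose decision has no documented reasoning."""
    return [record.pod for record in log if not record.reasoning.strip()]


# Invented placeholder entries, not real Turner data.
log = [
    PodRecord("pod-1", "unit-a", Decision.SCALE, "validated with employees"),
    PodRecord("pod-2", "unit-b", Decision.STOP, "data not usable yet"),
    PodRecord("pod-3", "unit-c", Decision.ITERATE, ""),
]
print(summarize(log))
print(missing_reasoning(log))
```

Stopped ideas are counted alongside scaled ones, so the log shows what the filter rejected as well as what it let through.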

How does an AI Lab Operating Model work?

An AI Lab operating model is a structured, repeatable system for evaluating AI opportunities, validating the ones worth pursuing, and building internal capability to do this independently at scale. It is built on three components: AI Discovery Pods (temporary, cross-functional teams assembled around specific AI challenges), AI Facilitators (trained process specialists who run structured decision-making sessions), and a structured workshop cadence — AI Problem Framing, AI Workflow Sprint, and AI Design Sprint, each matched to a different stage of AI decision-making.

The system runs parallel to the core business. It does not require reorganization, new reporting lines, or a change to how the organization operates day to day. Teams participate in pods for a defined session, then return to their normal roles.