What is the AI Workflow Sprint?

The Unicorn Problem in AI Adoption

Many companies are trying to rebuild parts of their operations with AI.

Operations teams want tasks handled faster. Product teams want AI features. Executives want measurable gains in output.

So the search begins for the person who can connect all of it.

Someone who understands how the business runs, how work moves across teams, what AI systems can actually do, the limits of the technology, the regulatory constraints, and the business goals behind it.

That person is hard to find.

People who know AI rarely know the details of operational workflows. People who run those workflows usually do not know what AI systems can realistically deliver.

The overlap is small.

So organizations try to hire it. A new leadership role appears. Consultants arrive. Job descriptions ask for someone who can bridge business, technology, and regulation.

While that search continues, the organization doesn't wait.

While everyone assumes the answer is the right hire (the 🦄 unicorn), something else starts happening...

While the Search Continues, AI Spreads

AI adoption rarely begins with a master plan.

It starts with small experiments.

Someone tests a new AI tool. A team builds an internal assistant. Another group connects a model to a reporting workflow. A product manager links an AI model to an internal system.

At first, it looks like progress.

But after a while, leaders begin asking simple questions:

- How many AI tools are running across the company?

- Which teams are using external models?

- Is sensitive data leaving internal systems?

- What are we spending on all of this?

Few organizations can answer clearly.

Experiments spread faster than oversight.

Soon AI appears across dozens of workflows, often outside formal architecture decisions.

Eventually someone asks a harder question in a leadership meeting:

Who is responsible for this?

At that moment the tone changes. Exploration turns into containment. Security teams step in. Legal reviews start. Governance committees form.

Progress slows. Sometimes it stops.

Why? Often because the organization never designed how AI decisions should be made.

The Real Constraint

The difficulty is not in the build phase. It is in coordination.

AI sits between two groups that rarely work closely enough.

Technical teams understand models, infrastructure, data systems, and risk.

Business teams understand the workflows, the pressure points, the compliance realities, and the outcomes that matter.

Both sides are needed.

But most organizations have no reliable way to bring them together around the same problem.

Without that structure, discussions between IT and business drift into debates rather than decisions.

Designing the Right Conversation

Consider a typical meeting about AI adoption.

An AI engineer explains model capabilities. A workflow owner describes operational bottlenecks. Legal raises compliance questions. A business leader asks about impact and cost.

Each person sees a different part of the system.

But they are not working through the problem in the same order.

The result is predictable: ideas appear, constraints surface, but decisions remain unclear.

Most organizations have the right expertise... but they lack a format for turning that expertise into a shared decision.

The AI Workflow Sprint

The AI Workflow Sprint was built to be that format: a way to turn the people an organization already has into a shared decision.

Instead of searching for a single person who understands everything, the sprint brings the necessary perspectives together for a short, focused process.

The right people. A clear sequence of questions. Artifacts that capture decisions as the work progresses.

Over four days, a cross-functional team (we call it the AI Discovery Pod) works through a single workflow. They map the work, redesign a step using AI, build a prototype, and test it with employees.

The output is a concrete solution that an engineering team can choose to build. By the end of the sprint the group has:

- a redesigned workflow

- a validated AI use case

- a working prototype

- agreement on what to build next

How the Sprint Works

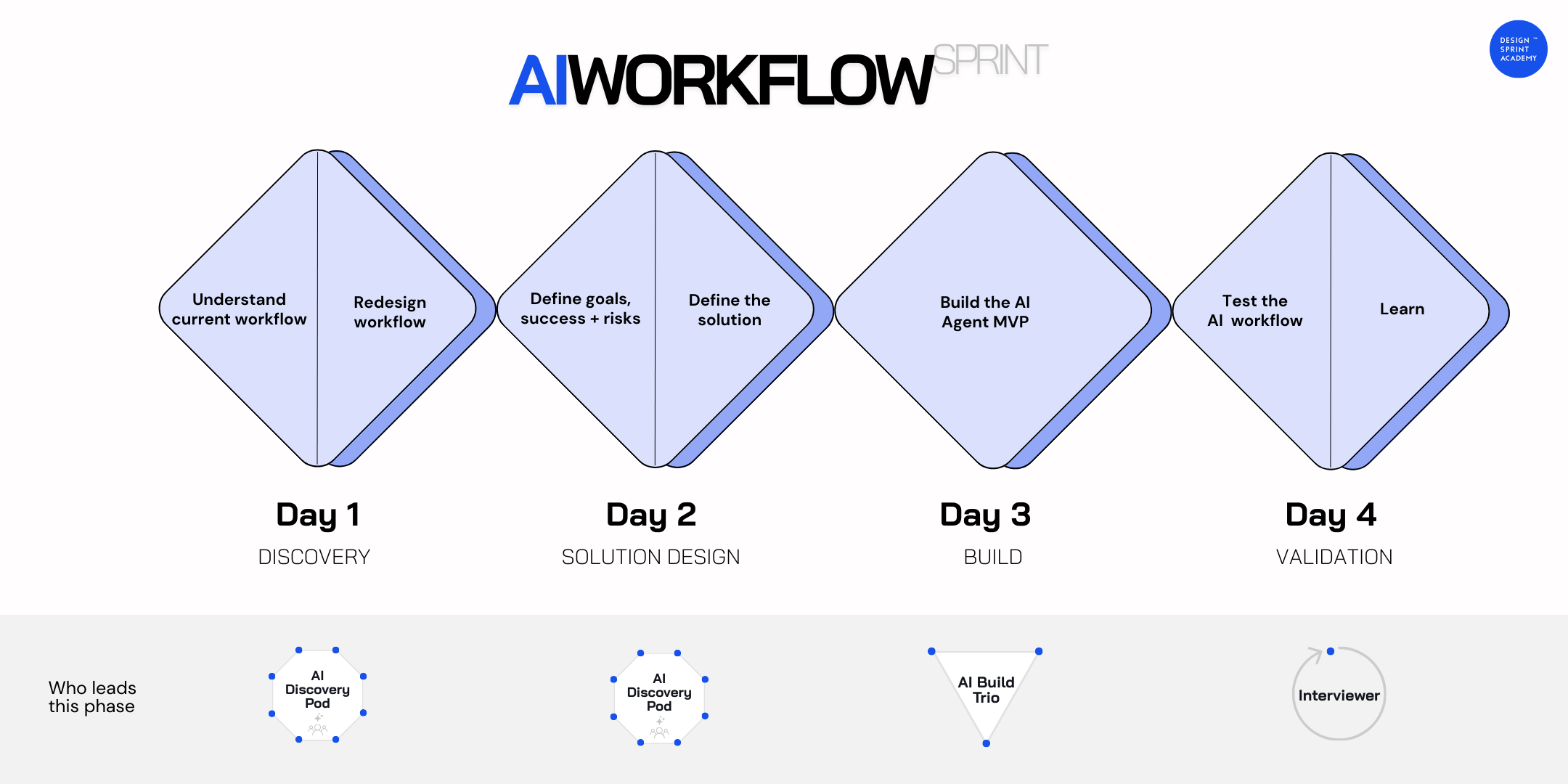

The process unfolds across four focused stages.

This structure moves a team from idea to tested concept in a few days.

Day 1 — Understanding the Workflow

The sprint begins by studying the work itself.

Before discussing AI, the team maps the entire workflow step by step — not as it appears in documentation, but as it actually operates today. They locate decision points, delays, moments where expertise is required, and places where errors appear.

This exercise consistently reveals hidden complexity. Steps that belong to nobody. Handoffs that rely on one person's institutional knowledge. Decisions made on instinct because the logic was never written down. Bottlenecks everyone accepted as permanent that are actually artifacts of an old system decision.

Only after that map exists does the group examine where AI creates genuine leverage — what should be assisted, what should be automated, and what should remain a human decision. The day ends with a Sprint Focus: the specific AI opportunity the entire build and test phase will be designed around.
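One way to capture the Day 1 map is as structured data, so that unowned steps and delays become easy to spot. The sketch below is only an illustration under stated assumptions: the `Step` fields, the `Mode` labels (taken from the assist / automate / human distinction above), and the invoice-approval example are all hypothetical and not part of the sprint method itself.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Mode(Enum):
    """Proposed handling of a step after the redesign."""
    ASSIST = "assist"      # AI supports the person doing the step
    AUTOMATE = "automate"  # AI performs the step
    HUMAN = "human"        # the step remains a human decision


@dataclass
class Step:
    name: str
    owner: Optional[str]       # None marks a step that belongs to nobody
    avg_delay_hours: float     # hypothetical measure of waiting time
    is_decision_point: bool
    mode: Mode


def unowned_steps(steps: List[Step]) -> List[str]:
    """Return the names of steps that have no owner."""
    return [s.name for s in steps if s.owner is None]


def slowest_step(steps: List[Step]) -> Step:
    """Return the step with the longest average delay."""
    return max(steps, key=lambda s: s.avg_delay_hours)


# Hypothetical invoice-approval workflow, invented for illustration.
workflow = [
    Step("Receive invoice", "Accounts Payable", 2.0, False, Mode.AUTOMATE),
    Step("Match to purchase order", None, 30.0, False, Mode.ASSIST),
    Step("Approve payment", "Finance Manager", 8.0, True, Mode.HUMAN),
]

print(unowned_steps(workflow))
print(slowest_step(workflow).name)
```

In this made-up example the unowned step is also the slowest one, which is the kind of pattern that would make it a candidate for the Sprint Focus.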

Day 2 — Designing the AI Workflow

Once the team has a clear Sprint Focus, they define what success looks like.

They set a long‑term goal, measurable indicators of success, and the main risks that could block progress.

These measures often focus on operational outcomes such as accuracy, processing time, or capacity.

The team also reviews practical constraints: data readiness, technical feasibility, compliance rules, and employee adoption.

With those boundaries clear, participants sketch how people and AI will interact.

- What does the employee see?

- What does the AI produce?

- What decision does the human make?

The strongest ideas are combined into a storyboard that describes the future workflow step by step.

Day 3 — Building the AI Agent MVP

The third day shifts from planning to building.

A smaller group develops a working prototype. This usually includes an AI engineer, a designer, and a subject-matter expert. We call this group the Build Trio.

The prototype has three parts:

- AI logic — prompts, tools, and reasoning steps.

- Workflow orchestration — the sequence that connects AI actions.

- Interface — the place where employees interact with the system.

The result is an AI Agent MVP: a prototype realistic enough for employees to experience the redesigned workflow.
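To make the three parts concrete, here is a minimal sketch of how they might fit together. Everything in it is an assumption for illustration: the function names, the confidence threshold, and the request-routing example are hypothetical, and `ai_logic` is a hard-coded stub standing in for whatever model calls and prompts a real prototype would use.

```python
from dataclasses import dataclass


@dataclass
class Suggestion:
    text: str
    confidence: float


def ai_logic(request: str) -> Suggestion:
    """AI logic: stand-in for prompts, tools, and reasoning steps.

    A real prototype would call a model here; this stub uses a fixed rule.
    """
    if "refund" in request.lower():
        return Suggestion("Route to refunds queue", 0.9)
    return Suggestion("Needs manual triage", 0.4)


def orchestrate(request: str, threshold: float = 0.8) -> dict:
    """Workflow orchestration: run the AI step, then decide the handoff."""
    suggestion = ai_logic(request)
    return {
        "request": request,
        "suggestion": suggestion.text,
        # Low-confidence suggestions are flagged for a human decision.
        "needs_review": suggestion.confidence < threshold,
    }


def interface(result: dict) -> str:
    """Interface: the line an employee would see for this request."""
    flag = "REVIEW" if result["needs_review"] else "OK"
    return f"[{flag}] {result['suggestion']}"


print(interface(orchestrate("Customer asks for a refund")))
print(interface(orchestrate("Unclear message from supplier")))
```

Keeping the three parts as separate functions means the Build Trio can swap the stub for a real model call without changing what the employee sees.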

Day 4 — Testing With Real Users

Before any wider rollout, the prototype is tested with employees who perform the workflow.

Participants walk through real tasks while the team observes where confusion appears, where trust drops, and where the system improves the work.

These sessions often surface issues that internal discussions miss.

Sometimes the prototype confirms the opportunity.

Sometimes it exposes barriers that must be addressed first.

Either result gives the organization clear direction.

For a more detailed explanation of what the AI Workflow Sprint is and when it is needed, watch our latest webinar.

When to Run the AI Workflow Sprint — and When Not To

✅ Run it when:

- You need to move fast, demonstrate ROI, and unblock decisions that have been stalling

- Stakeholders are pushing to scale AI workflows that work in isolation, and you can already see how they will break at integration, so you want that surfaced before resources are lost

- A specific internal workflow has been identified and a validated AI use case exists — ideally from an AI Problem Framing workshop

- The right cross-functional people can be assembled: workflow knowledge, AI capability, and a Decider with authority

- The data and knowledge the AI would need exists, is accessible, and is allowed by regulation

- The team is genuinely open to a Scale / Iterate / Stop outcome — not looking to confirm a decision already made

❌ Don't run it when:

- No specific workflow problem or use case has been defined yet — run AI Problem Framing first

- The problem is customer-facing — run an AI Design Sprint instead

- Leadership has already committed to a specific solution — if the outcome is not open to a test-and-decide result, the sprint becomes a formality

- The real problem is organizational or architectural — if the issue is governance, ownership, or enterprise architecture, a workflow sprint is the wrong intervention

- The room is not cross-functional enough — if you don't have workflow knowledge, AI capability, and a Decider in the room, you won't reach a strong decision

- The data or knowledge base doesn't exist — if inputs are not digitized, accessible, or allowed by regulation, you cannot design a believable AI agent around them

- The workflow is too broad — if the challenge is something like "improve sales with AI" without a specific employee segment and use case, the team will stay abstract and fail to converge
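The two checklists above can be read as a simple decision rule. The sketch below is one hypothetical way to encode it. The `Situation` fields and the wording of the returned messages are assumptions made for this example, and a real go/no-go call will involve more judgment than a handful of booleans.

```python
from dataclasses import dataclass, replace


@dataclass
class Situation:
    use_case_defined: bool
    customer_facing: bool
    solution_already_committed: bool
    problem_is_organizational: bool
    cross_functional_room: bool
    data_accessible_and_allowed: bool
    workflow_is_specific: bool


def recommend(s: Situation) -> str:
    """Map the run / don't-run criteria to a recommendation."""
    # Two cases point to a different workshop rather than a blocker.
    if not s.use_case_defined:
        return "Run AI Problem Framing first"
    if s.customer_facing:
        return "Run an AI Design Sprint instead"

    blockers = []
    if s.solution_already_committed:
        blockers.append("outcome is not open to test-and-decide")
    if s.problem_is_organizational:
        blockers.append("issue is governance, ownership, or architecture")
    if not s.cross_functional_room:
        blockers.append("room is not cross-functional enough")
    if not s.data_accessible_and_allowed:
        blockers.append("data is missing, inaccessible, or not permitted")
    if not s.workflow_is_specific:
        blockers.append("workflow is too broad")

    if blockers:
        return "Do not run yet: " + "; ".join(blockers)
    return "Run the AI Workflow Sprint"


ready = Situation(
    use_case_defined=True,
    customer_facing=False,
    solution_already_committed=False,
    problem_is_organizational=False,
    cross_functional_room=True,
    data_accessible_and_allowed=True,
    workflow_is_specific=True,
)

print(recommend(ready))
print(recommend(replace(ready, workflow_is_specific=False)))
```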

What Comes Before an AI Workflow Sprint

The AI Workflow Sprint requires a validated AI use case as its starting point — specifically one that points at an internal workflow. Without that, the team enters Day 1 without alignment on what they're redesigning or why.

The typical prerequisite is an AI Problem Framing workshop, where a cross-functional team has already identified, evaluated, and prioritized the use case. The AI Use Case Card produced in that session becomes the brief for the sprint.

If no prior use case work has been done, an AI Problem Framing workshop is the right first step before running a Workflow Sprint.

How the AI Workflow Sprint Differs from an AI Design Sprint

Both follow a four-day structure, but they serve different purposes and different users.

The AI Workflow Sprint is focused on internal workflows — processes that employees perform. The team redesigns their workflow around AI capability, builds a functional AI Agent MVP, and tests it with the people who do that work every day. The question being answered is: will employees trust and adopt this?

The AI Design Sprint is focused on customer-facing products and services. The team prototypes a new AI-powered concept and tests it with external users. The question being answered is: will customers value this enough to change their behavior?

Same structure. Different user. Different risk surface. The type of AI use case — internal or customer-facing — determines which sprint to run.

From Experiments to a Repeatable System

For leaders responsible for AI adoption, the main challenge is not experimentation. It is consistency.

A single pilot does not change how a company works.

What leaders need is a process that repeatedly produces useful AI initiatives.

The AI Workflow Sprint provides that structure.

Each sprint delivers a redesigned workflow, a tested use case, a working prototype, and a defined next step.

When organizations run these sprints across many workflows, AI stops appearing as scattered experiments. It becomes a regular method for improving how work gets done.