You don't need more AI pilots. You need a system for deciding which ones to run. Enter the AI Lab.

"AI Lab" is a term that means different things depending on where you work. At Anthropic or DeepMind, it describes a research unit focused on advancing what AI models can do — training new architectures, publishing findings, pushing capability frontiers. At OpenAI, it's where frontier models get built. These are important organizations doing foundational work.

The AI Lab in this article is something else entirely. It's not a model research unit. It's not a center of excellence. Not another governance layer. It's a decision system: a structured, repeatable way for cross-functional teams to decide which AI bets are worth building, which should be killed early, and which deserve real engineering time.

That's the distinction to get clear on first.

The difference between an AI Research Lab and an Enterprise AI Lab

Anthropic, OpenAI, and DeepMind are answering one question: what can AI become? Their work is foundational. They train models, advance architectures, push the limits, and publish research that eventually becomes the infrastructure everyone else builds on.

A corporate innovation lab answers a different question: what new businesses should we build that don't exist yet? Think Lufthansa Innovation Hub or Bosch Business Innovations — separate units with long timelines, deliberately insulated from the parent company's day-to-day.

Both are legitimate. Neither is what most organizations need right now.

What most organizations need is an answer to a third question:

Which AI opportunities are worth pursuing — and how do we decide that before we commit serious resources to finding out the hard way?

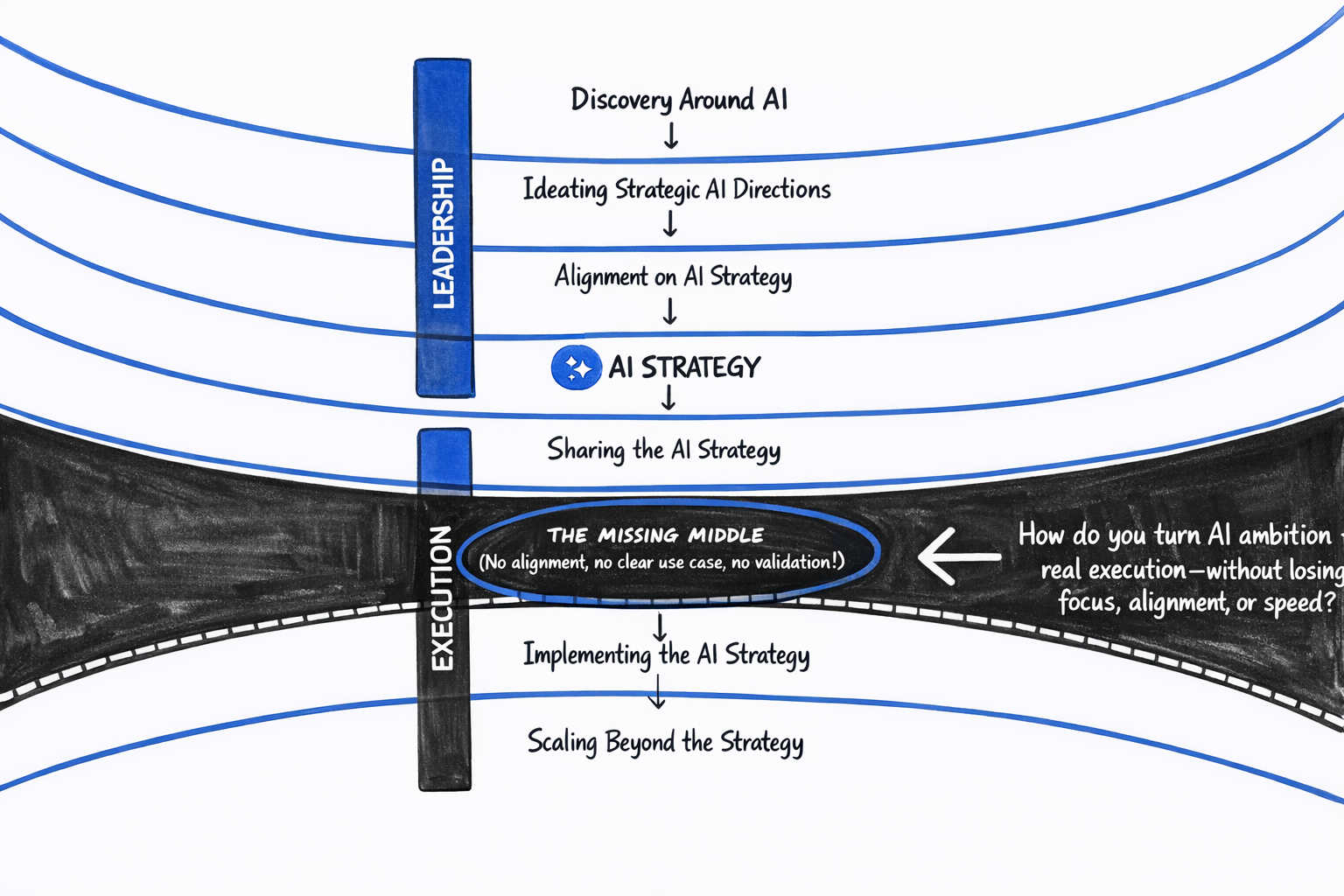

This is not an AI model research problem. It is not a horizon-three innovation problem. It is a decision-making problem. And it lives in the middle of the enterprise — between the AI strategy most organizations already have and the engineering teams already trying to build.

The AI Lab fills that middle. It does not push model capabilities. It uses the capabilities that already exist and asks a sharper set of questions: should we build this, for whom, measured how, constrained by what?

The problem the AI Lab is solving

Most organizations that have been investing in AI for one to two years arrive at roughly the same place. They've bought tools. They've run AI foundations training. People can prompt. Individuals, and a few teams, have found real shortcuts that make their day-to-day work better. A handful of pilots have shipped.

And it still doesn't add up to anything the business can measure, repeat, or build on.

There's activity. There's energy. There's no way to connect the dots. The wins don't stack.

In most cases, the issue isn't the tech. It's the lack of a clear way to decide what to do with it — and what to stop doing. No consistent method to pick the few use cases worth real investment, test them fast, and kill weak ideas before they turn into six-month pilots that could have been resolved in days.

The AI Lab is that missing system. It doesn't replace engineering teams, AI tools, or data infrastructure. It sits upstream of all of it: the mechanism that decides what's worth building before anyone starts building.

How the AI Lab works: from Problem Framing to Scale / Iterate / Stop

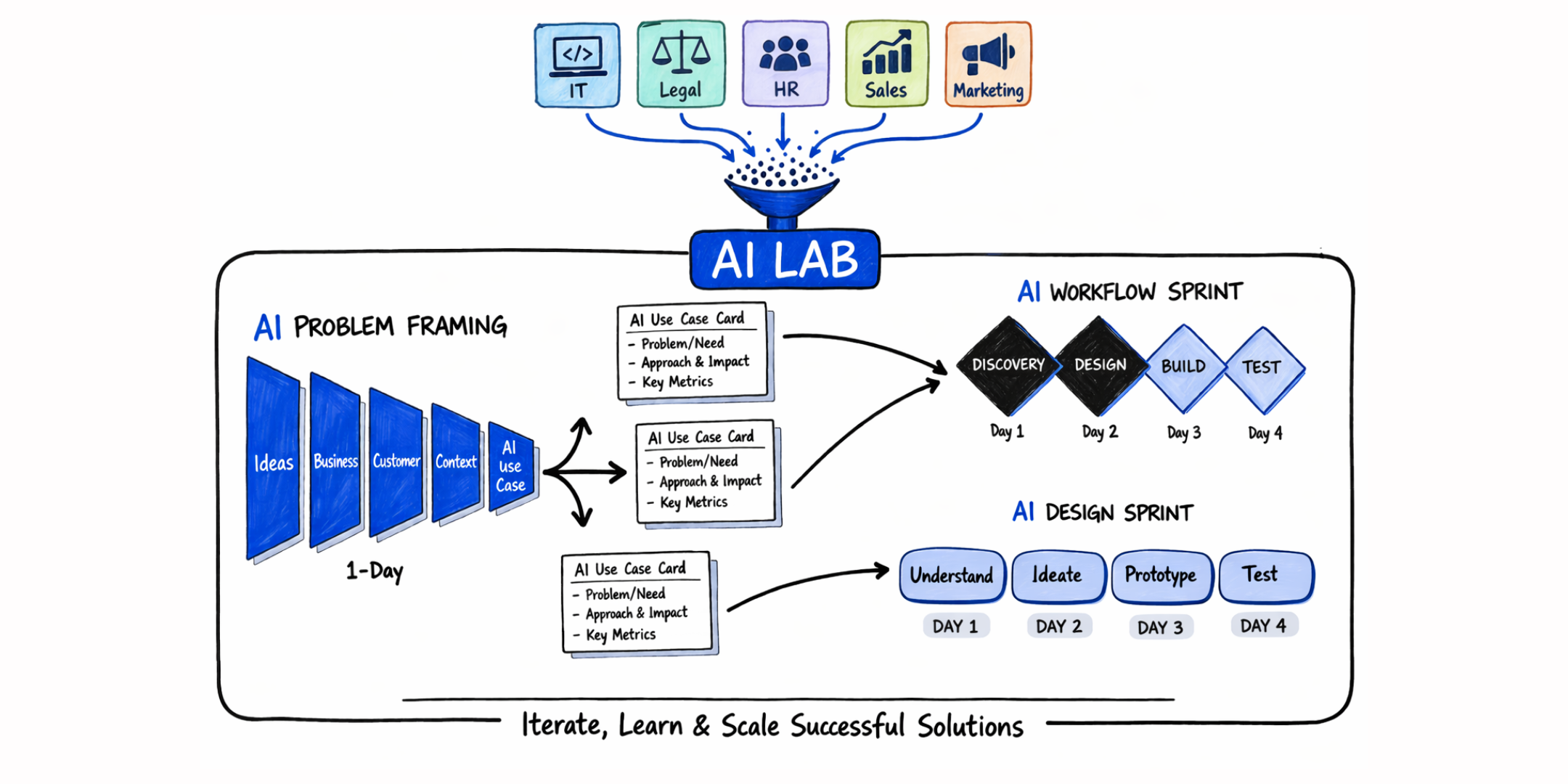

An AI Lab is a series of workshops. That's it. What sets them apart from ordinary workshops is what they produce: clear choices and concrete outputs. The first workshop prioritizes AI use cases. The workshops that follow shape solutions and test them.

Every Lab starts with AI Problem Framing: a one-day workshop that turns a vague leadership mandate into a short list of specific use cases. A cross-functional team (the AI Discovery Pod) — usually six to eight people, including the business owner, the domain expert who knows where the work breaks, a technical lead, and Legal or Compliance from day one — works through a set process.

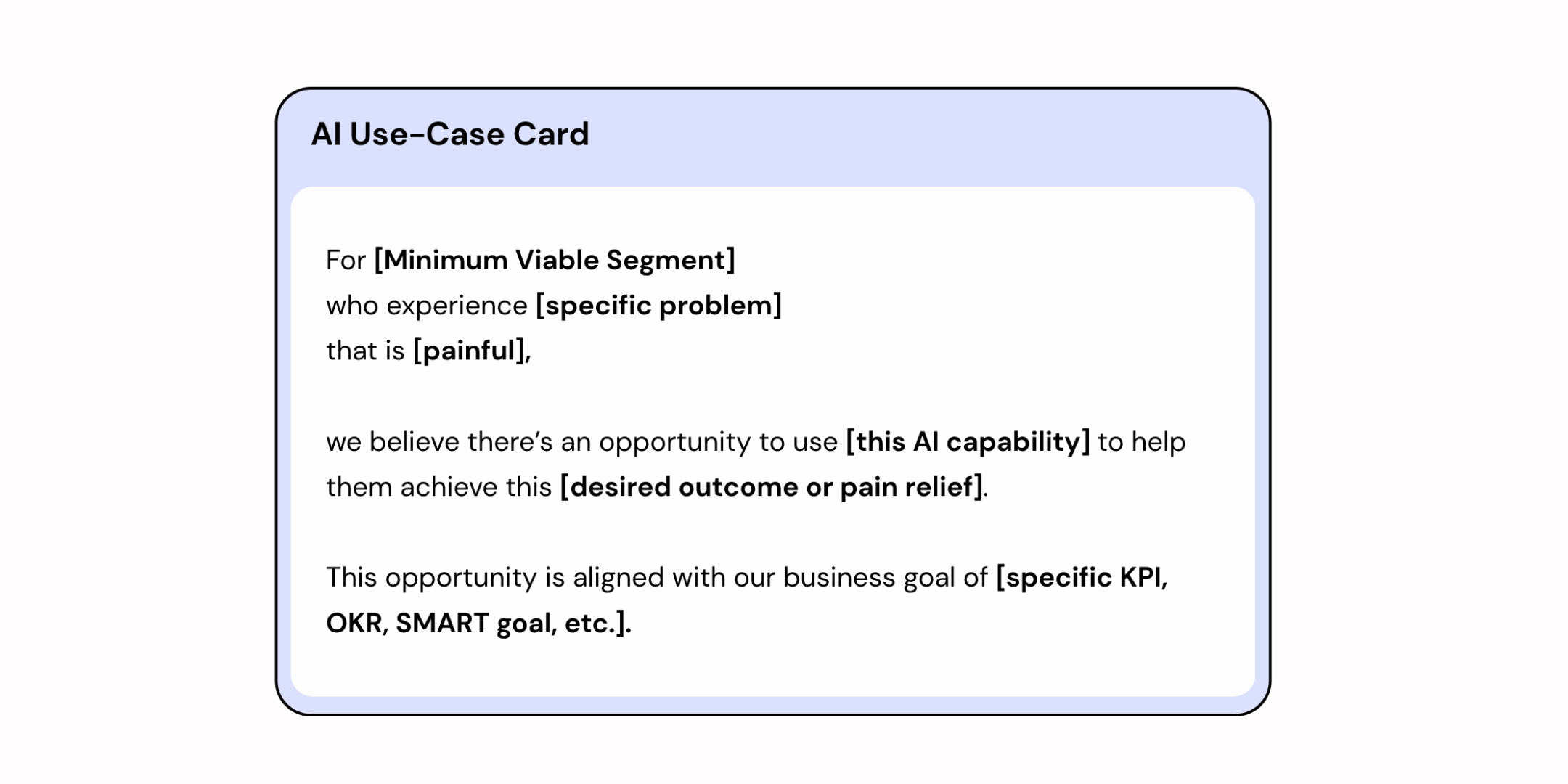

The goal isn't "use AI in procurement." It's: which workflow, for which user, solving which problem, with which data and governance limits, measured how. The output is a set of prioritized AI Use Case Cards.
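To make the card concrete, here is a minimal sketch of how one could be captured as a record. The class name, field names, and example values are illustrative assumptions, not a prescribed template; they simply mirror the framing questions above.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class UseCaseCard:
    """One AI Use Case Card (hypothetical structure mirroring the framing questions)."""

    workflow: str                                                # which workflow
    user: str                                                    # for which user
    problem: str                                                 # solving which problem
    data_limits: list[str] = field(default_factory=list)         # with which data limits
    governance_limits: list[str] = field(default_factory=list)   # with which governance limits
    success_metric: str = ""                                     # measured how

    def missing_fields(self) -> list[str]:
        """Return the framing questions this card has not answered yet.

        Assumption: an empty value counts as unanswered.
        """
        answers = {
            "workflow": self.workflow,
            "user": self.user,
            "problem": self.problem,
            "data_limits": self.data_limits,
            "governance_limits": self.governance_limits,
            "success_metric": self.success_metric,
        }
        return [name for name, value in answers.items() if not value]


# "Use AI in procurement" answers only one of the six questions.
vague = UseCaseCard(workflow="procurement", user="", problem="")
print(vague.missing_fields())
# prints ['user', 'problem', 'data_limits', 'governance_limits', 'success_metric']
```

A card with nothing missing is ready to be prioritized. A card with gaps shows the pod exactly which questions still need an answer.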

This is also where the Lab's versatility becomes visible. Not all validated use cases point in the same direction — and AI Problem Framing is what reveals which way a given opportunity should go.

Some use cases are about redesigning how employees work: an internal claims process, a document review workflow, a data extraction task that currently takes a team two days. These are workflow problems. Once the use case is clear, the pod runs an AI Workflow Sprint, a focused four-day process. On Days 1 and 2, the full pod works on discovery and solution design: mapping the current workflow, identifying where AI creates genuine value, and converging on a solution concept. Then the pod steps back. On Day 3, a lean Build Trio (an AI engineer, a designer, and a subject-matter expert) builds the prototype. Day 4 is testing: structured sessions with real employees, synthesized into a clear Scale / Iterate / Stop decision.

Other use cases are about building something new for customers: a new service, a product feature, an AI-assisted interaction that didn't exist before. These are product problems. The path shifts to an AI Design Sprint, which follows the same structural logic: two days with the full pod on discovery and solution design, a build day with the Build Trio on Day 3, and a test day on Day 4 — this time with customers rather than employees.

The Lab does not prescribe which path to take. It produces the information that makes the choice obvious. AI Problem Framing surfaces whether the opportunity is a workflow problem or a product problem, and the sprint methodology follows from that. The pod never commits to which sprint to run before the use case is defined — because defining the problem and designing the solution are different jobs, and conflating them is where most AI initiatives quietly go wrong.
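The routing described above is simple enough to write down. The sketch below is one way to express it, assuming the problem type has already been settled in AI Problem Framing; the names `ProblemType` and `plan_sprint` are hypothetical, and the schedule restates the four days described earlier.

```python
from enum import Enum


class ProblemType(Enum):
    WORKFLOW = "workflow"  # redesigning how employees work
    PRODUCT = "product"    # building something new for customers


def plan_sprint(problem_type: ProblemType) -> dict:
    """Return the sprint name, four-day schedule, and decision options for a framed use case."""
    if problem_type is ProblemType.WORKFLOW:
        sprint, testers = "AI Workflow Sprint", "employees"
    else:
        sprint, testers = "AI Design Sprint", "customers"

    return {
        "sprint": sprint,
        "schedule": [
            ("Days 1-2", "full pod: discovery and solution design"),
            ("Day 3", "Build Trio: build the prototype"),
            ("Day 4", f"structured test sessions with {testers}"),
        ],
        "decision_options": ["Scale", "Iterate", "Stop"],
    }


plan = plan_sprint(ProblemType.PRODUCT)
print(plan["sprint"])
for days, activity in plan["schedule"]:
    print(f"{days}: {activity}")
```

Only the sprint name and the test audience depend on the problem type. The rest of the structure is shared, which is why the choice of path can wait until the use case is defined.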

What stays constant across both paths is the underlying discipline. The full cross-functional pod works together on discovery and solution design. The Build Trio executes fast because the problem is already defined when they start. Testing produces real evidence because the right users are identified in advance. The decision at the end (scale, iterate, or stop) rests on what employees or customers did and said, not on a plan that sounded good in a meeting.


For the system to run, you need one more role: the AI Facilitator. This person runs the workshops, keeps to the playbook, and repeats the cycle on a steady cadence. They make sure the right Discovery Pod is in the room, the right data is available, and there is always a Decider — someone with the authority and interest to act — in all three workshops.

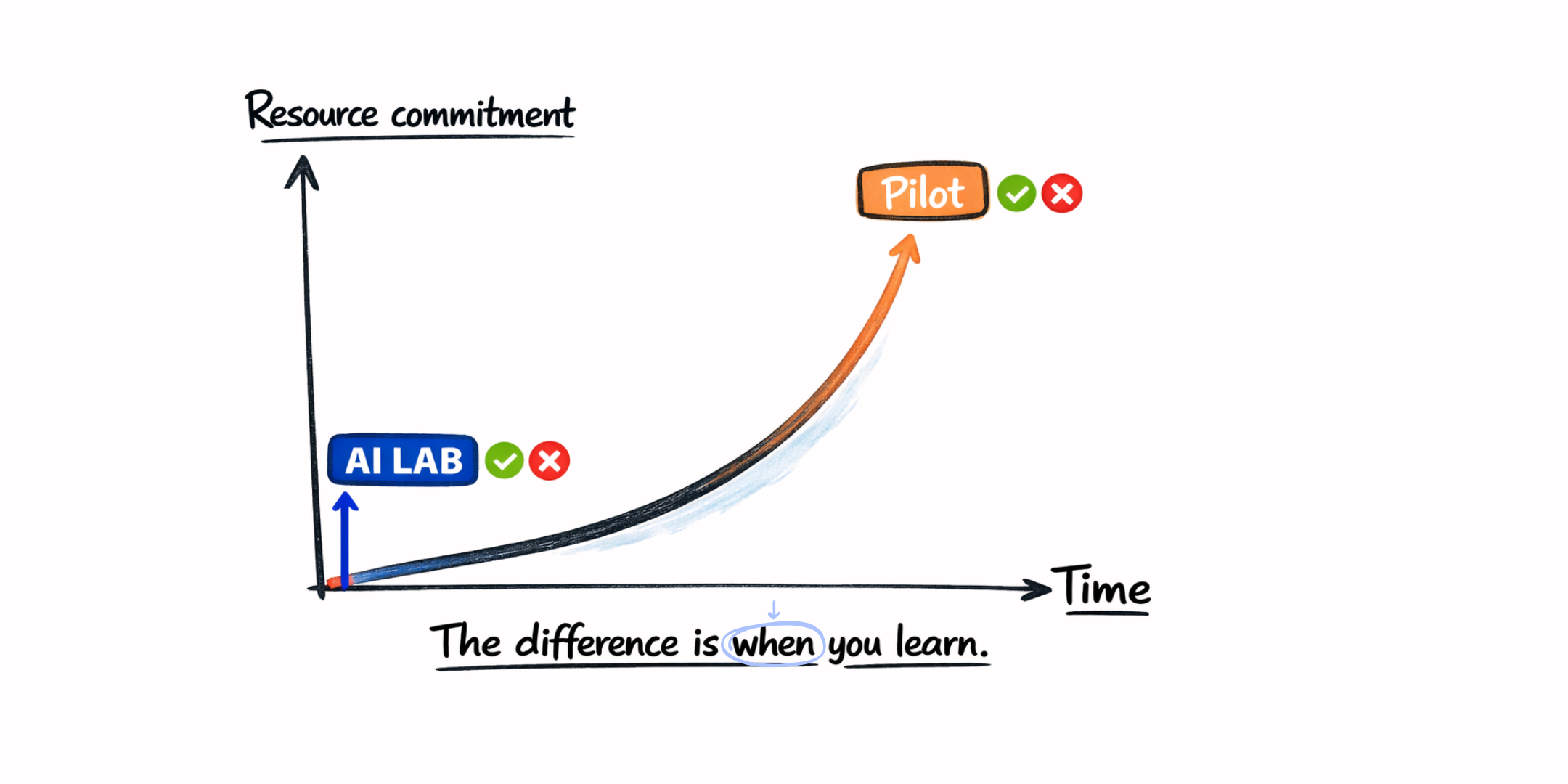

What makes this different from a pilot

A pilot assumes you already know what to build. The conversation is about resourcing: engineering time, vendor spend, and organizational attention to prove a chosen solution works.

The AI Lab runs in the opposite direction. It's built to earn the decision before the spend. A structured session with the right people costs a fraction of a pilot — and can answer the most important question: is this worth building at all?

The "let's run a pilot" mindset skips a step. Teams jump to shipping before they've pressure-tested the problem or the design. It feels faster, but pilots hit walls quickly: first, systems and data integration; then legal and compliance.

In the Lab, you test the problem and the solution against the barriers you'll face later: data access, technical feasibility, legal and compliance constraints, and how users actually think and work. You don't design a perfect solution in a vacuum. You design something that can survive the real constraints.

A healthy AI Lab kills more ideas than it advances. That's not a flaw — it's the system working. Every use case that fails structured evaluation is a six-month pilot you didn't need. The kill rate is the number to watch: not as proof things went wrong, but as proof the organization is making faster, cheaper, better decisions.

That's what the Lab produces. Not workshops. Not a backlog of ideas. Organizational clarity: a repeatable way to answer the question every executive keeps asking — what should we build with AI?

The structural logic — and why it compounds

The AI Lab is not a one-time engagement. It's an operating model: a repeatable loop that runs alongside the business without hijacking it.

You can run multiple pods at once. Different business units can operate their own Labs under the same governance structure. Each session ends with a decision. Each decision feeds the next. Over time, you build a portfolio of use cases — some in production, some in build, many stopped early — and a clear view of where AI is creating value and where it isn't.
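One way to get that clear view is to tally the decisions in the portfolio. The sketch below assumes the kill rate is the share of evaluated use cases that ended in Stop. The article does not define a formula, so treat that definition, the function name `kill_rate`, and the sample decisions as illustrative assumptions.

```python
from collections import Counter


def kill_rate(decisions: list[str]) -> float:
    """Share of evaluated use cases that ended in a Stop decision (assumed definition)."""
    if not decisions:
        raise ValueError("no decisions recorded yet")
    counts = Counter(decisions)
    return counts["Stop"] / len(decisions)


# Made-up portfolio: ten evaluated use cases, one decision per Lab session.
decisions = ["Stop"] * 6 + ["Iterate"] * 2 + ["Scale"] * 2
print(f"Kill rate: {kill_rate(decisions):.0%}")
# prints "Kill rate: 60%"
```

Tracked per quarter or per business unit, the same tally shows whether the organization is stopping weak ideas in the Lab rather than months into a pilot.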

The internal facilitators running the Labs accumulate knowledge with every session. They understand the organization's data landscape better. They know which stakeholders slow decisions down and which ones unblock them. They know where the constraints are before anyone has to ask. That knowledge doesn't walk out the door when a consulting project ends. It stays inside the organization and compounds.

Frontier AI labs improve what models can do. The AI Lab improves something else: the organization's ability to decide what to do with what models can do.

As the models get better — and they will — the Lab doesn't need to change its job. It simply points at what's newly possible. The engine isn't tied to a vendor or a tool. It just keeps running.

Where to start

Start with one real challenge: a painful problem with a business owner who cares about the outcome and a cross-functional team that can be assembled around it.

Not the biggest AI bet in the company. Not the use case that looks best on a board slide. Pick something concrete, where a clear Scale / Iterate / Stop decision would be worth having.

That first Lab does two things.

It produces an outcome: a validated use case you can build, or a stopped one you don't waste months on. Both are valuable.

And it proves what a well-run Lab feels like: the right people in the room, the real constraints on the table before anyone falls in love with a solution, and a decision in days that would otherwise take months.

That's what changes the leadership conversation. Not another AI strategy deck. A session that produced a decision.

From there, the Lab scales on its own cadence.