AI ideas vs. validated AI use cases: why enterprise teams confuse the two

The 200-ideas problem

Most Directors and Heads of Innovation we work with have some version of the same list sitting on their desk. It might live in Notion, on a Miro board, or in an Excel sheet someone on the team keeps updated. It’s usually called something like AI Opportunities, Generative AI Backlog, or AI Initiatives 2026.

It usually has anywhere from 30 to 300 entries.

Ask the team how many of those are validated AI use cases, and the answer comes back fast: all of them. Ask how many have a clearly defined customer, a measurable business goal, and an AI intervention a cross-functional group has tested together for feasibility, and the answer changes. The room gets quieter.

This is the gap this article is about. The term “use case” is doing too much work. Most teams use it both for the unvalidated list on the wall and for the smaller set of opportunities a group has agreed to pursue. The cost of this ambiguity shows up later, in projects that never should have left the backlog. The distinction worth keeping is the one between an AI idea and a validated AI use case. It looks like a vocabulary problem. It’s a decision problem with a vocabulary symptom.

What is an AI idea?

An AI idea is a candidate. It’s the raw material showing up inside your organization every week, from a leadership request, a vendor demo, an internal hackathon, a competitor announcement, or a Slack message from someone who saw a LinkedIn post.

Some examples of AI ideas, written the way they usually appear in real backlogs:

- Use AI to summarize customer support tickets.

- Build an AI assistant for our sales team.

- Automate onboarding with generative AI.

- Apply AI to the legal review process.

Each one points to an area where AI sounds useful. None of them tells you what problem is being solved, who it’s for, how big the issue is, what evidence supports it, or which business goal it serves.

An AI idea is a hypothesis about where AI has relevance. Useful, yes, but only as an input. Treating it like a deliverable is how teams lose months.

What is a validated AI use case?

A validated AI use case is a decision, made by a cross-functional group with the right expertise, data, and authority, that an AI opportunity deserves resources. It comes from a deliberate decision-making process.

It answers the four questions every senior decision-maker asks before signing off on resources:

- Who exactly is this for? Not “our customers” or “the sales team.” A specific minimum viable segment, the smallest group worth solving for first, but still large and important enough to move a real metric.

- What problem does this segment have? Defined from their reality, not from your assumptions, and grounded in the customer journey or operational context where the problem shows up.

- What is the AI intervention? A specific description of what AI does. Does it assist a person, improve a decision, or automate an action? It also needs to include what makes it work, such as the data, the integration, and the human in the loop.

- What business goal does it move, and how will we know? Tied to an existing OKR or strategic priority, with a measurable outcome and a leading indicator the team tracks from week one.

Notice what these four questions assume about the team. Question one needs someone close to the customer or end user. Question two needs domain expertise and an honest read on the operating reality. Question three needs AI technical literacy and a feasibility view grounded in the data and constraints already present. Question four needs a senior decision-maker who ties the use case to a strategic priority and puts resources behind it. When one of these perspectives is missing, the answers stay theoretical, and the team produces a well-formatted idea, not a validated use case.

This is why one person filling in a card alone, no matter how thoughtful, doesn’t produce a validated AI use case. The structure helps. The structure doesn’t do the work. The work is the cross-functional conversation, with the right inputs and the right authority, that turns four questions from a checklist into a decision the room defends afterward.

A validated use case carries this structure and this provenance. An idea does not. That’s the whole difference, and it’s why the term “use case,” used loosely, has stopped meaning much in most enterprise AI conversations.

Why the confusion is structural

If the distinction is this clear, why do experienced teams still blur it?

There are at least three reasons:

The first is speed pressure. Most Directors with an AI mandate are working against a quarterly deadline. The fastest way to show progress is to fill a backlog. A long list of ideas looks like visible activity. A short list of validated use cases takes more work and looks less impressive in the executive update, until someone asks which one is ready to ship. Then the whole picture changes.

The second is the demo problem. AI vendors and internal champions often make the same move: they show a working demo of an idea, usually inside one team that loves it. That demo then gets called a use case in every slide deck. But a demo working for one team, with one person’s tacit knowledge, against one carefully chosen dataset, is the AI version of a science fair project. It proves the idea is interesting. It doesn’t prove the use case is validated.

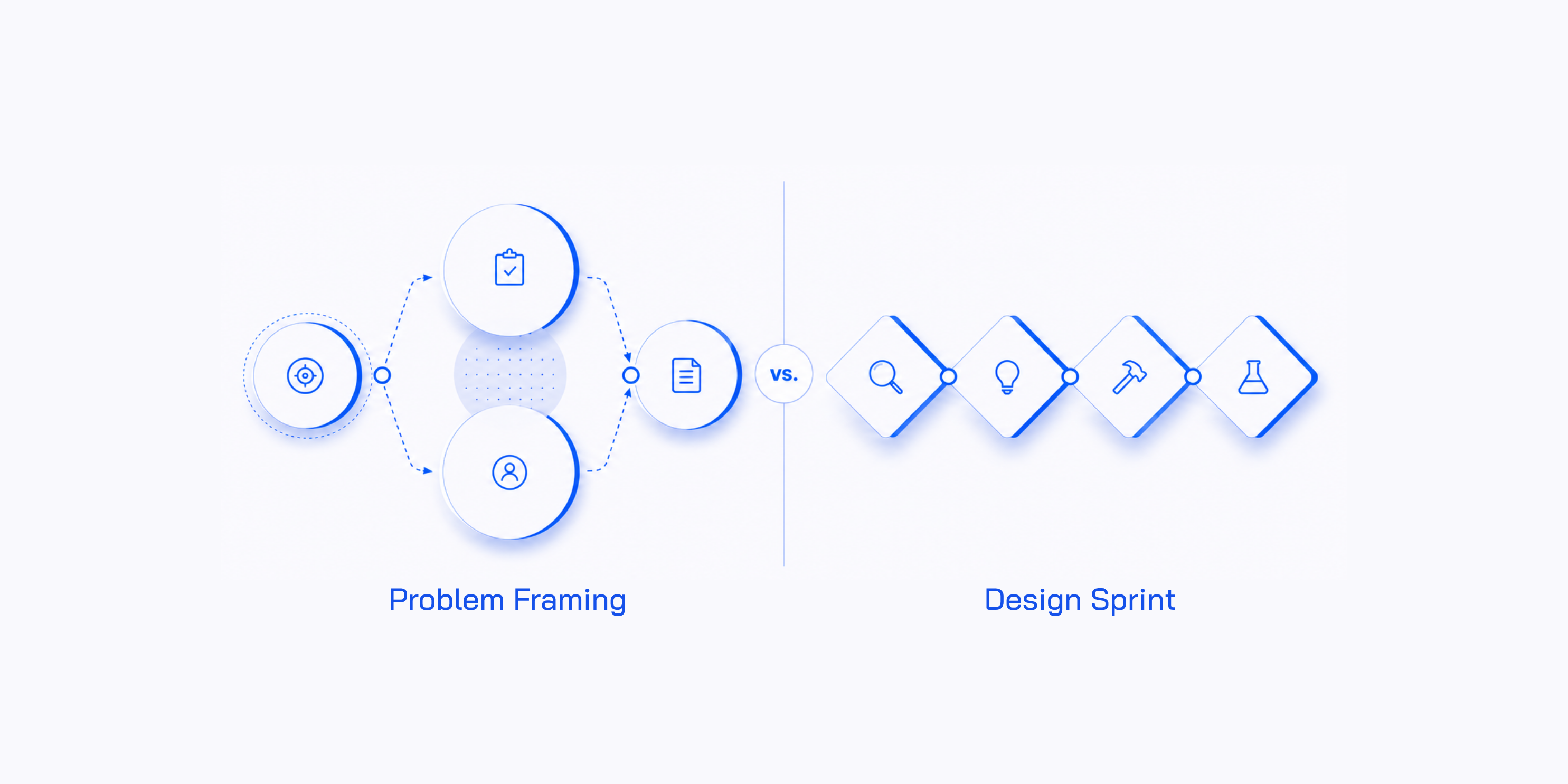

The third is methodological. Most teams don’t have a structured way to turn an idea into a validated use case. They have ideation tools, like brainstorms, hackathons, and idea boards. They have build tools, like sprints, agile ceremonies, and engineering tickets. The missing layer sits in the middle, where a cross-functional group with the right expertise, data, and authority turns an idea into a defined, prioritized, decision-ready use case. This is what AI Problem Framing is built to do. Without that layer, ideas move straight from the backlog into the build queue, and the validation gap shows up six months later in production.

What changes when you separate the two

Once the distinction is clear, the team’s work changes in a few specific ways.

The backlog becomes useful again. Instead of an endless list of equal-weight items, it becomes two lists: ideas pending evaluation and validated use cases ready to move forward. Movement between those lists is the real work, not a bureaucratic step.

Executive conversations get sharper. The question “How many AI initiatives are we running?” stops being the number worth optimizing. The better question is “How many validated AI use cases do we have, and which one creates the most strategic value per euro committed?” Leaders who answer with evidence are the ones whose AI programs survive the first budget review.

The team stops debating ideas in the abstract. The four questions above turn opinion fights into evidence conversations. People with strong opinions and no data need to gather the data or step back. The room gets calmer, and decisions get faster.

How to triage your existing list

You probably already have the list. The fastest move is not to start a new process. It’s to run the existing list through three steps, in this order. Each step makes the list smaller and sharpens what remains.

Step 1: get every idea into the same format

Most AI backlogs are a mix of one-line slogans, paragraphs, slide titles, and rough Miro stickies. You can’t compare ideas described at five different levels of detail.

The first move is to put every idea into a shared template. The AI Problem Framing Canvas is the one we use, but any structured one-pager that forces the four questions will work. Ask the people who submitted the ideas to refile them through that template themselves.

This is the move most teams skip. It does two things at once. It gives you a clean, comparable list, and it makes each idea owner do the first round of evaluation on their own thinking. Some ideas won’t even get resubmitted. The person sits down to fill in the segment and the business goal, realizes they don’t have the answers, and quietly drops it. That’s the canvas doing your triage work before the team spends a minute reviewing anything.

Step 2: sort what survives by business goal

With every remaining idea expressed in the same format, the next question is: which business goal does each one move?

For a short list of up to 30 ideas, you can do this by hand around a table. For a longer list of 100, 200, or more, the kind of backlog enterprise teams tend to have, use my AI Portfolio Manager custom GPT to cluster the ideas against your strategic goals and show where the density is. The output is the same either way: an organized list of AI ideas, all expressed in the same format, grouped by the strategic priority they support.

This is where the second round of attrition happens. Ideas that sounded interesting show up as orphans, not tied to any priority leadership is paying attention to. Other ideas start clustering together and reveal a real opportunity area the team hadn’t named yet.

What you have at the end of step two is not a list of validated use cases yet. It’s a triaged list of AI ideas, framed consistently and sorted by strategic priority. The list is shorter, sharper, and ready for the conversation that produces validated use cases.

Step 3: take the survivors into an AI Problem Framing workshop

Now you bring the cross-functional group together. A senior decision-maker who commits resources. Domain experts who understand the business challenge or AI opportunity that leadership has gathered the group around. Someone with AI technical literacy and an honest view of feasibility. An AI facilitator who keeps the conversation disciplined around the four questions. This is the team.

Read more about what this team should look like in this article: AI Discovery Pods.

The team stress-tests the strongest ideas from step two across several lenses, including customer reality, business impact, technical feasibility, data availability, compliance, and operational risk. Most ideas don’t survive this conversation. That’s the point.

What comes out the other side is two or three validated AI use cases the team has committed to. Not twenty. Not ten. Two or three. Each one carries the structure of the four questions and the provenance of having been validated by the right team in the same room. That’s the difference between an idea on a template and a use case the organization defends afterward.

The full arc, from 100+ raw ideas in a backlog to two or three validated use cases ready to move forward, takes most teams about two weeks. Step 1 is the bulk of that, 1–2 weeks of calendar time waiting for people to refile their ideas through the canvas. Step 2 is one day of decision-maker time, sorting what survives by business goal. Step 3 is one well-prepared workshop day (the AI Problem Framing workshop). It’s the cheapest way we know to recover months of misallocated team time.

The point we’re making

This distinction matters because the difference between an AI idea and a validated AI use case is the difference between an AI program that produces activity and one that produces outcomes.

A team running on AI ideas ships a portfolio of features and a steady stream of small wins, but struggles when leadership asks which ones are creating measurable business value. A team running on validated AI use cases ships fewer things, with more confidence, against measurable goals, and tends to earn the next round of investment when budgets tighten.

You don’t need a transformation program to make the shift. You need a clear definition of a validated use case, the discipline to apply it, the right people in the room, and a structured way to turn your existing ideas into the smaller number of opportunities that deserve real investment. For most teams, that’s a two-week move, not a two-year one.

Want to go deeper on AI Problem Framing?

Watch the webinar on the AI Problem Framing Canvas (40 minutes) for the diagnostic version of what’s in this article: the three default decision modes that take over when AI ideas can’t be compared, and the canvas that fixes the underlying problem.