The Playbook for AI Transformation

Most organizations are two or more years into their AI journeys. For many, adoption is going well: licenses bought, pilots launched, people trained. And yet, when leadership looks at what the investment is actually returning, the honest answer is: not much.

Harvard Business Review recently published a piece by a team from Bain and OpenAI naming this problem precisely — what they call the "micro-productivity trap," where AI accelerates tasks without transforming workflows, and individual productivity gains never compound into organizational results.

→ Read the full HBR article: How to Move from AI Experimentation to AI Transformation

The diagnosis is right. AI adoption does not translate to AI transformation.

The HBR article clearly articulates what organizations need to do to close that gap. This article is the sequel. It picks up where the HBR piece leaves off and provides the HOW: how to actually do this work inside a real organization, with real cross-functional teams, real politics, and a leadership team that needs something defensible at the next board review.

Having spent ten years inside the rooms where hard organizational decisions get made — at Design Sprint Academy, building the methods that help cross-functional teams align, prioritize, and move — we've seen firsthand what makes this work and what doesn't. For the past two years, we've refined those methods specifically for AI: how organizations identify which problems are worth solving, how they redesign workflows around AI, and how they build the internal capability to keep doing that work without external dependency.

We didn't build this as a theoretical framework. We built it with organizations doing the work.

Turner Construction is the clearest proof of what the full cycle looks like. 11,000 employees. Hundreds of active job sites. Decades of operational knowledge locked in people's heads. They used this methodology to build an AI operating model that now runs without us: 400+ custom AI applications, 70,000+ annual hours of capacity recovered. Last week, they released SafeT Coach, an AI-powered safety tool that is free to the entire construction industry. It was validated with more than 80 field stakeholders and has already logged 25,000 interactions in an industry of roughly eight million construction workers in the United States.

From aligning leadership on where AI should focus, to identifying specific use cases worth pursuing, to validating solutions with real employees — to a finished product now in the hands of an entire industry.

That's the full cycle. And here's the playbook for any organization embarking on its AI transformation journey.

The WHAT — and where the HOW begins

The HBR article identifies four steps for successful AI transformation: 1) narrow to the highest-value use cases, 2) reimagine workflows across the organization, 3) engage those closest to the work, and 4) measure what actually matters.

We agree with all four steps. We arrived at the same ones independently, through the same kind of work.

What those four steps don't spell out — because it wasn't the article's job to — is the operational detail behind each one. How do you get the right people aligned on which AI opportunities are actually worth pursuing? How does workflow redesign happen across teams with different incentives, different vocabularies, and different ideas of what AI can do? How do you engage those closest to the work when AI might not be their priority — and when some fear it will make their jobs disappear?

At one of their case companies, even quoting a customer job required coordination across sales, design, and operations — with significant process variation across business units. The authors correctly identify this as a candidate for reinvention. But the coordination itself — surfacing that variation, getting those teams to converge on a redesign, producing a decision rather than a list of follow-ups — is a specific kind of work. It requires structure. It requires facilitation.

And it requires a repeatable system so the organization can keep doing it over and over. That system is what this article describes. It stands on three pillars.

AI Facilitators. AI transformation requires collaboration across business units, technical teams, legal, and the people actually doing the work. That doesn't happen by default. You need someone to orchestrate and facilitate it. Not a consultant who arrives with answers. Not an engineer who builds the solution. An internal operator who does all the preparation work — gathering the information, research, and context that teams need to make informed decisions — assembles the right cross-functional team, facilitates team work, and is accountable not just for the process, but for the quality of the decisions and outcomes that come out of it. We call this role the AI Facilitator. It's the role that makes the rest of the system work — and one that every organization serious about AI transformation will need to build.

AI Discovery Pods. HBR is right that AI transformation requires cross-functional engagement. But organizations are organized in functions and business units, and that won't change — nor should it. The solution isn't reorganization. It's assembly. An AI Discovery Pod is a small, cross-functional team — six to eight people, including frontline workers, domain experts, and technical leads — brought together around one specific AI opportunity. It forms, does its work, makes its decision, and disbands. Temporary by design. The organization doesn't restructure. It just gets the right people in the right room for the right amount of time.

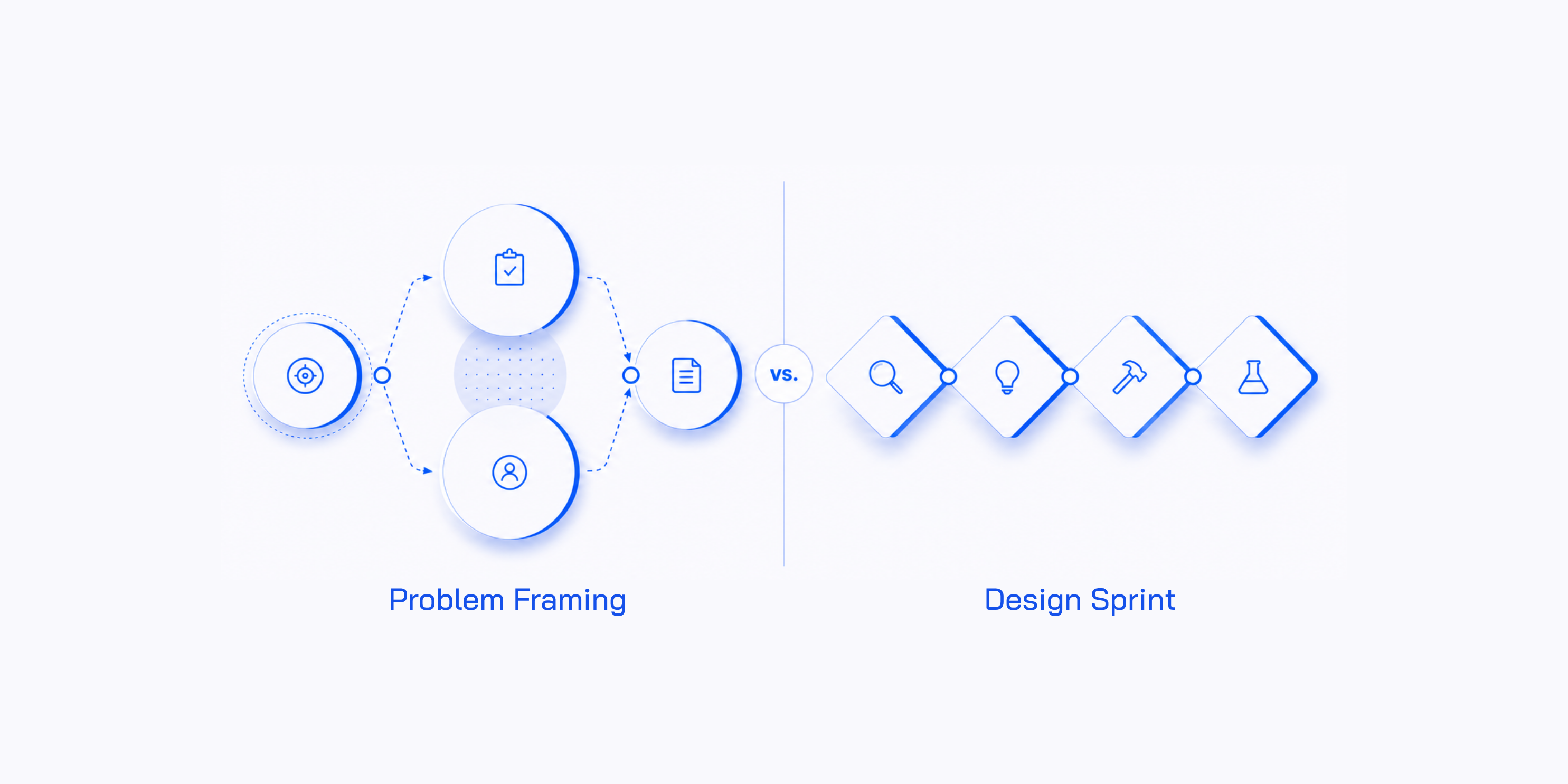

Structured methods. Identifying which AI opportunities are worth pursuing, redesigning the workflows around them, validating the solution before anyone commits serious budget — this work needs to happen not once, but continuously, across teams and business units. That requires methods structured enough to be learned, repeated, and scaled: the same format every time, applied to a different problem. Two methods do this work. AI Problem Framing turns AI ambition into prioritized use cases in a single day. The AI Workflow Sprint takes a validated use case and produces a tested, working prototype of the redesigned workflow in four days — ending in a clear build, iterate, or stop decision.

Together, these three pillars form a single operating model: the AI Lab. A structured, repeatable system that runs parallel to the core business, continuously answering one question — what is worth building with AI, and what is not — before anyone spends serious money finding out the hard way.

The next sections cover each in turn.

The AI Facilitator — the operator every organization will need

Organizations are already hiring for this role. They just don't know what to call it.

Read enough AI transformation job descriptions and a pattern emerges. The titles vary — AI Transformation Lead, AI Adoption Manager, AI Champion, AI Strategy Director. But the requirements are almost always the same: align strategy across departments, identify and prioritize high-impact AI opportunities, lead cross-functional programs, translate strategic direction into something teams can actually act on. That's a facilitation job.

The harder problem is that many are searching for a unicorn — someone who can drive complex cross-functional work and is also a deep AI expert. That's the wrong specification. The AI Facilitator doesn't need to be a technical AI expert. They need to be fluent enough in AI to follow the conversations, ask the right questions, and recognize when a proposed solution doesn't match the actual constraint. Fluency, not expertise. The engineering depth sits elsewhere in the AI Discovery Pod.

What the role actually demands is something most organizations underestimate: it's a full-time job. The preparation work alone — gathering context, interviewing domain experts, understanding the business goals, making sure the right people and the right evidence are in the room before a session starts — takes significant time. Add the facilitation itself, then the handoff to implementation teams, the documentation, the follow-through to make sure decisions actually move. This is not a side-of-desk responsibility.

There's another reason this needs to be a dedicated role, and it compounds over time. An AI Facilitator who drives ten AI initiatives knows the organization in a way no external consultant ever will. They understand the political landscape, the domain experts worth trusting, the assumptions worth challenging. Every session they run makes the next one faster, sharper, and more useful. That knowledge doesn't transfer if the role rotates or gets absorbed into someone's existing job description. And if the person doing this work is measured by other metrics — a product roadmap, a team OKR, a delivery target — the AI facilitation will always lose. You get the role without the commitment, and the results to match.

It’s not common for organizations to have a dedicated facilitation team — which is part of why AI transformation keeps stalling at the coordination layer. But a team of five to ten trained AI Facilitators, embedded inside a large enterprise, working with AI Discovery Pods across business units continuously, is not an expensive proposition relative to the AI investment those organizations are already making.

It's the infrastructure that makes the rest of the investment pay off.

→ For a deeper look at the role: What is an AI Facilitator?

AI Discovery Pods — the cross-functional team that makes it work

Every step the HBR article prescribes requires people from different functions working together. Narrowing to the highest-value use cases requires domain experts, technical leads, and business stakeholders in the same conversation. Reimagining workflows requires the people who actually run those workflows — not just the people who manage them. Engaging those closest to the work means pulling in frontline employees, data engineers, legal, and operations, often simultaneously.

The problem is that organizations aren't built for this. They're organized by function and business unit — by design, and for good reason. That structure is how work gets done. But it's also why AI initiatives keep running into the same coordination failure: use cases get prioritized without the data team's input, workflows get redesigned without compliance in the room, solutions get built without the people who'll actually use them ever being consulted. The blockers that should have been named on day one show up at month four.

The AI Discovery Pod is the structural answer. A small, cross-functional team — six to eight people — assembled around one specific AI opportunity. Domain experts, technical leads, data engineers, the employees whose workflow is being redesigned, legal where relevant.

One role is non-negotiable: a senior decision-maker with the authority to commit. This is the business unit leader, the executive sponsor, the VP accountable for the outcome — whoever owns the problem and will be held responsible for what gets built. Without them, the session produces conclusions nobody can act on. The whole point of assembling the right people is to reach a decision that carries weight and moves forward — and that only happens if the person who can say yes is in the room.

The pod forms, does its work, makes its decision, and disbands. Nobody joins a committee. Nobody's regular job disappears. The organization doesn't restructure. It just gets the right people in the right room for the right amount of time — with a clear finish line.

The composition matters as much as the concept. Research on collective intelligence consistently shows that group dynamics can explain up to 40% of the variance in team outcomes, independent of individual talent. A well-assembled pod that integrates different perspectives and tests assumptions before committing to them will outperform a room of domain experts who defend their positions. The difference isn't who's in the room. It's how the room works.

The pod doesn't ship. That's not its job. Its job is discovery and validation — choosing which use cases are worth pursuing, building a prototype, testing it with real users, and producing a clear decision before serious resources are committed. When the session ends, the pod hands off to production teams from a position of evidence, not guesswork.

→ Why AI initiatives need AI Discovery Pods: read the full article

From infrastructure to execution

Let's step back for a minute. The HBR article is clear that AI transformation requires cross-functional work — narrowing use cases, reimagining workflows, and engaging those closest to the process all require people from different functions working together. We've made that concrete: AI Facilitators as the internal operators that orchestrate and own the process, and AI Discovery Pods as the structural answer to how that cross-functional work actually happens — assembled around a specific opportunity, temporary by design, with a clear finish line.

Now the question becomes: what does the work actually look like? How does an organization move from AI ambition to validated use cases to redesigned workflows, in a way that's possible to repeat across teams and business units with predictable outcomes every time?

That's what comes next. Two methods, run in sequence and on a regular cadence, each with a clear input, a defined process, and a decision at the end.

AI Problem Framing — narrowing to what's actually worth building

HBR's first step is to narrow possibilities strategically: to resist the urge to spread AI everywhere and instead identify the four or five domains where transformation efforts should concentrate. One of their case companies followed this approach. A cross-functional team ran a week-long workshop that surfaced AI use cases representing tens of millions in potential value, and the company is now on track for $30M in additional profit.

The principle is right. HBR's answer is a workshop. So is ours.

AI Problem Framing is a structured one-day session. The AI Facilitator assembles the right team — six to eight people, including domain experts, technical leads, and a senior decision-maker with authority to commit — does the preparation work to ensure the group has the information it needs, and then runs the session through a specific sequence: surface all AI possibilities, link them to real business goals and metrics, understand the human experience they'd affect, audit data readiness and constraints, then score, prioritize, and select.

The output is not a collection of scattered ideas. A session produces a few AI Use Cases — each one fully scoped, with the reasoning recorded, the constraints documented, and the success metrics defined. Rigorous enough to defend at board level, and ready to move forward to solution validation.

What surprises leaders most about this process is what gets eliminated. A well-run session typically kills two-thirds of the ideas that walk in — not through opinion or gut feel, but through a structured set of criteria that evaluate each opportunity against business value, feasibility, data readiness, and real constraints. The AI Facilitator is accountable (as is the team) for the quality of those decisions — through the preparation, the session design, and the rigor applied in the room. Ideas that don't survive that scrutiny don't survive for a reason.
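To make the scoring step concrete, here is a minimal sketch of how a structured set of criteria could rank ideas and cut roughly two-thirds of them. Everything specific in it is an assumption for illustration: the 1-to-5 scales, the weights, the `UseCase` and `prioritize` names, the sample ideas, and the rule that a hard constraint zeroes out a score. The criteria themselves (business value, feasibility, data readiness, constraints) come from the session; the arithmetic is ours and would be agreed by each team before the session starts.

```python
from dataclasses import dataclass

# Hypothetical weights: the criteria are real, the numbers are an assumption.
WEIGHTS = {"business_value": 0.4, "feasibility": 0.3, "data_readiness": 0.3}


@dataclass
class UseCase:
    name: str
    business_value: int   # 1 (low) to 5 (high)
    feasibility: int      # 1 (low) to 5 (high)
    data_readiness: int   # 1 (low) to 5 (high)
    blocked_by_constraint: bool = False  # e.g. a legal or compliance blocker


def score(uc: UseCase) -> float:
    """Weighted score; a hard constraint removes the idea from contention."""
    if uc.blocked_by_constraint:
        return 0.0
    return sum(getattr(uc, criterion) * weight for criterion, weight in WEIGHTS.items())


def prioritize(use_cases, keep_fraction=1 / 3):
    """Rank ideas by score and keep the top fraction; the rest are killed."""
    ranked = sorted(use_cases, key=score, reverse=True)
    keep = max(1, round(len(ranked) * keep_fraction))
    selected = [uc for uc in ranked[:keep] if score(uc) > 0]
    killed = [uc for uc in ranked if uc not in selected]
    return selected, killed


# Illustrative ideas only; none of these come from a real session.
ideas = [
    UseCase("Quote drafting", 5, 4, 4),
    UseCase("Safety Q&A", 5, 4, 3),
    UseCase("Meeting summaries", 2, 5, 5),
    UseCase("Contract review", 5, 5, 5, blocked_by_constraint=True),
    UseCase("Invoice matching", 3, 3, 2),
    UseCase("Chatbot for everything", 3, 1, 1),
]

selected, killed = prioritize(ideas)
print("Selected:", [uc.name for uc in selected])
print("Killed:", [uc.name for uc in killed])
```

The point of writing the criteria down this way is not the formula. It is that every killed idea leaves a recorded reason behind, which is what makes the decision defensible later.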

At Turner, the first AI Problem Framing session was with Directors and VPs. Before a single sprint ran, leadership aligned on which problems were actually worth solving. That alignment was the foundation for everything that followed.

→ How to define AI use cases in practice: read the full guide

AI Workflow Sprint — redesigning the workflow

HBR's second step is to reimagine workflows across the organization. Their observation is worth stating directly: the process redesign — not the technology — is the most challenging part of AI deployment, and creates most of the value.

They're right. And the reason it's so hard is structural. AI sits between two groups that rarely work closely enough together. Technical teams understand models, infrastructure, and data. Business teams understand the workflows, the pressure points, the compliance realities, and the outcomes that actually matter. Both sides are needed. Most organizations have no reliable way to bring them together around the same problem. And when they don't, when AI gets layered onto a workflow that was never redesigned, individuals may gain productivity on specific tasks, but those gains stall at the organizational level. The surrounding workflow still depends on manual handoffs, tacit knowledge, and systems not built for AI. The task moves faster, but the bottleneck just shifts downstream.

The AI Workflow Sprint is built for exactly this. Not the technology problem — the coordination problem.

A four-day workshop. A cross-functional AI Discovery Pod, now focused on a single workflow. The first two days require the full Discovery Pod working together — mapping the current workflow as it actually works (not how it's supposed to work), identifying where time and decisions are genuinely lost, and redesigning the workflow with AI integrated. Day three shifts to a smaller build trio — freeing most of the team to return to their day jobs while a working AI agent MVP is built. Day four is validation: structured sessions with real employees, synthesized findings, and a Decider decision. Scale, iterate, or stop.

That last part — the explicit stop option — is what makes the sprint different from a typical AI initiative. The default in most organizations is continuation. Momentum carries a weak pilot into production because nobody built a formal mechanism for stopping it. The sprint builds that mechanism in from day one.

The AI Facilitator is present throughout — but does the most critical work before anyone enters the room. Interviewing the people whose workflow is being redesigned, auditing the data, understanding the constraints. By the time the Discovery Pod assembles, they're working from reality, not assumptions.

The output is concrete: a working AI agent MVP tested with real employees, a documented long-term goal and success metrics agreed before any building began, and a decision backed by evidence.

Turner ran three AI Workflow Sprints across three teams, in parallel. Each produced a validated application and a clear decision. One of those applications was SafeT Coach — a tool that answers plain-language safety questions grounded in Turner's EH&S standards, evaluates jobsite photos for hazards in real time, and generates coaching prompts for field conversations. Last week Turner made it free to the entire construction industry. It's already logged 25,000 interactions.

→ What is the AI Workflow Sprint: read the full article

The AI Lab — the operating model that makes it compound

HBR's fourth step is to measure what actually matters. Most organizations can't do this — not because they lack data, but because they have no coherent system producing it. Scattered pilots and disconnected workshops don't generate comparable metrics. You can't track what you can't repeat.

This is where the AI Lab comes in. Not as another workshop, and not as a program with a start date and an end date. As a permanent AI operating model.

One workshop can produce a validated use case. Two workshops can redesign a workflow. But AI transformation — the kind that shows up at board level as measurable ROI — doesn't come from individual sessions. It comes from running them continuously, across teams and business units, as a cadence. One flower doesn't make spring. And the compounding value of a system isn't visible until the system has been running long enough to generate signal.

The AI Lab is that system. It brings together everything described in this article — AI Facilitators, AI Discovery Pods, AI Problem Framing, AI Workflow Sprints — and runs them as a repeatable operating model, parallel to the core business. While the organization keeps delivering, the AI Lab keeps discovering. Continuously answering one question: what is worth building with AI, and what is not — before anyone commits serious resources.

And because the AI Lab runs on structure, it generates the metrics leadership can actually use. It tracks progress across three horizons:

Horizon 1 — Are we deciding fast? Labs run per quarter, time from session to decision, early kill rate. A kill rate above 60% is a sign of a healthy system — not failure. Every idea killed in a two-day Lab is a six-month project that never happened.

Horizon 2 — Are we building capability? Number of AI Facilitators active, Labs run independently, business unit coverage. This is the spread metric — a Lab running across five business units is becoming organizational infrastructure.

Horizon 3 — Are we producing value? Validated ideas in development, solutions live in production, ROI from deployed solutions. The AI Lab pays for itself when the pipeline-to-deployment rate compounds quarter over quarter.

These three horizons answer HBR's fourth step directly. Not with vanity metrics — activity reports and adoption statistics — but with evidence of decisions made, capability built, and value produced.
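As an illustration of how lightweight the Horizon 1 arithmetic can be, here is a minimal sketch that computes a kill rate and the time from session to decision from a simple log. The `LabSession` record, its field names, the decision labels, and the sample dates are all assumptions made for this example. We also assume here that the kill rate means the share of evaluated ideas that end in a stop decision.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median


@dataclass
class LabSession:
    use_case: str
    session_date: date
    decision_date: date
    decision: str  # assumed labels: "scale", "iterate", or "stop"


def kill_rate(sessions):
    """Share of evaluated ideas that ended in a stop decision."""
    if not sessions:
        return 0.0
    stopped = sum(1 for s in sessions if s.decision == "stop")
    return stopped / len(sessions)


def median_days_to_decision(sessions):
    """Median number of days between the session and the recorded decision."""
    return median((s.decision_date - s.session_date).days for s in sessions)


# Illustrative log only; these are not real results.
sessions = [
    LabSession("Idea A", date(2025, 1, 6), date(2025, 1, 6), "stop"),
    LabSession("Idea B", date(2025, 1, 6), date(2025, 1, 7), "stop"),
    LabSession("Idea C", date(2025, 1, 13), date(2025, 1, 14), "stop"),
    LabSession("Idea D", date(2025, 1, 13), date(2025, 1, 15), "stop"),
    LabSession("Idea E", date(2025, 2, 3), date(2025, 2, 7), "scale"),
    LabSession("Idea F", date(2025, 2, 3), date(2025, 2, 10), "iterate"),
]

print(f"Kill rate: {kill_rate(sessions):.0%}")
print(f"Median days to decision: {median_days_to_decision(sessions)}")
```

In this made-up log, four of six ideas are stopped, which puts the kill rate above the 60% mark described under Horizon 1. The same log, kept consistently across Labs, is what makes quarter-over-quarter comparison possible.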

Turner has all three. 400+ AI applications built internally. 70,000+ hours of annual capacity recovered. An AI Lab operating model running without us — and now producing products that reach the industry. That's not the result of a workshop. That's the result of a system.

→ The AI Lab — the system behind successful AI transformation: read the full article

What this means if you're responsible for AI transformation

Let's recap. HBR identified four steps. Here's how this playbook picks up each one.

1) Narrow to the highest-value use cases — AI Problem Framing. One structured day, the right cross-functional team, a few fully scoped AI Use Cases ready for validation. Not a brainstorm. A decision.

2) Reimagine workflows across the organization — the AI Workflow Sprint. Four days, a Discovery Pod focused on a single workflow, a working AI agent MVP tested with real employees, and a Scale / Iterate / Stop decision backed by evidence.

3) Engage those closest to the work — AI Discovery Pods. Cross-functional teams assembled around specific opportunities, temporary by design, with a Decider in the room. The right people, the right problem, a clear finish line.

4) Measure what actually matters — the AI Lab. Three horizons: decision speed, capability growth, value in production. Metrics that compound quarter over quarter, not activity reports that go nowhere.

The methods are documented and battle-tested, the facilitation guides are built, and the training exists to install this capability inside your organization from day one — so your teams can run it without depending on us.

If you need a hundred-page strategy document or a multi-year consulting engagement, there are firms built for that. What we do is different — and more specific. We install the decision-making infrastructure that makes AI investment pay off: the structured, facilitated, repeatable system that sits between AI strategy and AI execution — the missing middle most organizations haven't built yet.

The organizations that will lead in AI over the next five years will be the ones that built the system for deciding what to build — and kept it running.