The AI Lab: the system behind successful AI transformation

You're doing AI transformation. It's probably not working.

Not because you lack ambition. Not because your team isn't trying. And definitely not because the technology isn't good enough.

It's not working because of how you've structured the work around it.

I've spent the last two years helping organizations navigate AI adoption — banks, manufacturers, global consumer goods companies, construction firms. Across all of them, regardless of industry or budget, I keep seeing the same three failure patterns. They account for most of the wasted investment in enterprise AI right now.

The first: you invested in tools and training, and expected transformation to follow.

This is where almost every organization starts. AI training and literacy programs rolled out across the workforce. It feels like progress because it is progress — people are learning, and that matters.

But here's what happens next. People apply AI to what they already do. They automate existing workflows, speed up existing processes, occasionally build something clever that nobody asked for. The broken processes they worked around for years, they now work around slightly faster.

A large bank in Asia described this to me recently. They had done the training — responsible AI videos, Copilot webinars, prompt crafting workshops, all of it. What they got back were AI use cases that were either far too large to execute or too small to be worth solving. As one of their leaders put it: "They don't know what they don't know. Whatever they come up with is just what they can superficially think of."

Tools and training are necessary. They are not sufficient.

The second: you built a team of experts nobody could talk to.

A global manufacturing company did everything right, at least by conventional wisdom. They set up a dedicated AI team inside IT — PhDs, serious technical firepower, full leadership backing, and billions in projected savings. Not millions. Billions.

One year later, the team was disbanded.

Because the team couldn't speak to the business. They made enormous claims ("your division, we can save $200 million") but couldn't turn those claims into decisions people trusted or workflows people actually adopted. As the senior leader who told me this story put it: "It created a lot of animosity. They couldn't convince stakeholders. They couldn't turn it into reality."

What started as ambition ended with the team broken up and the organization back to square one.

The third: you let experimentation run everywhere and called it a strategy.

This pattern looks like the opposite of the second. No centralized team, no top-down mandate. Instead, AI experimentation distributed across the organization — trainings here, pilots there, use cases that nobody asked for, champions in different business units each trying things on their own. It can feel like momentum. But two years pass, money gets spent, and the honest conclusion tends to sound like what a senior leader at a global FMCG company told me recently: "For the last two years we've been doing a bottom-up approach — we tried many things, trainings here, experiments there. But now we know we need to be more intentional."

Two years. Significant investment. And now starting over.

These three patterns look different on the surface. One organization over-centralizes. Another over-distributes. A third mistakes activity for strategy. But they all share the same root cause — the absence of a structured system for deciding what's worth building before you build it.

The real problem isn't the technology. It's the decision-making.

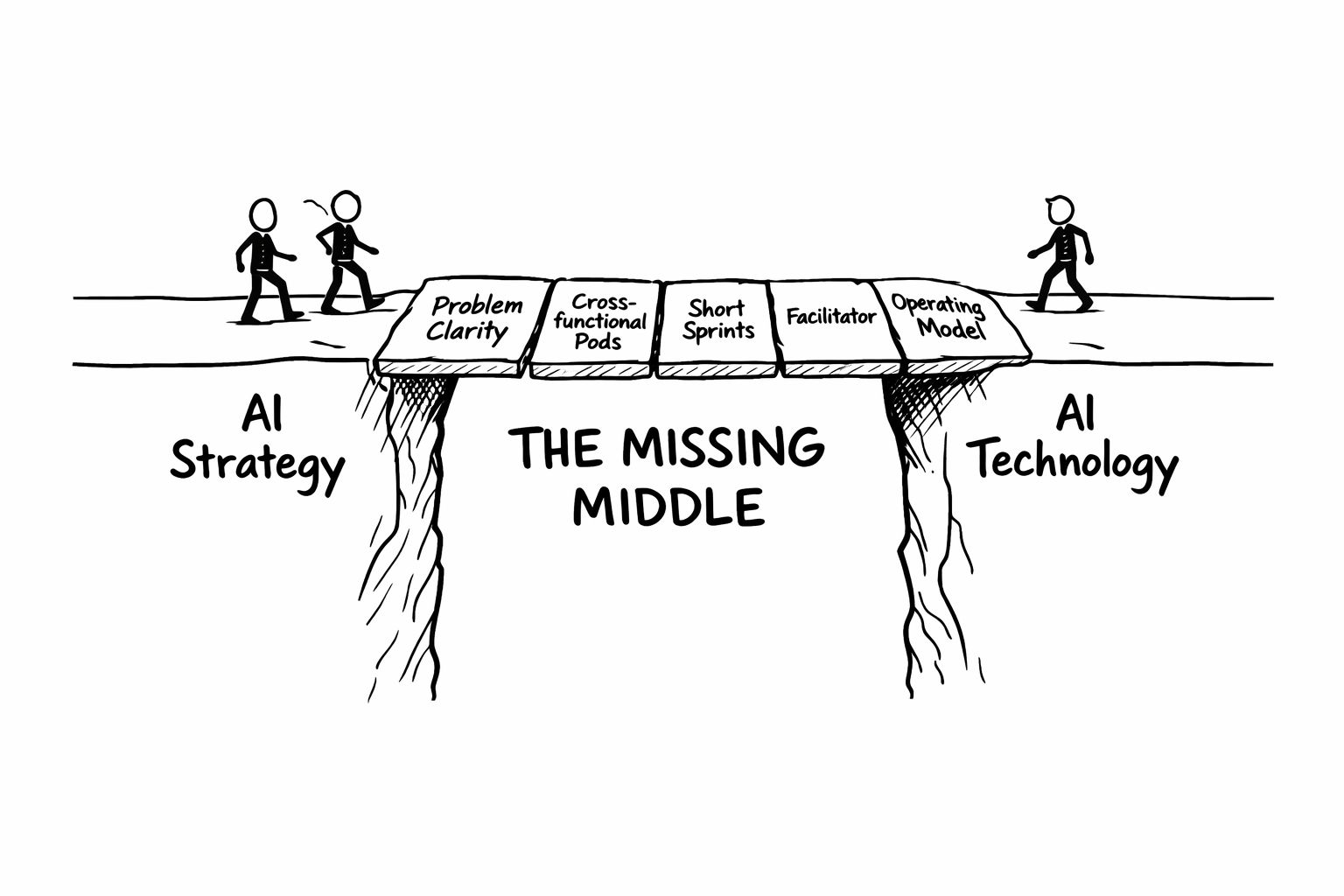

Between AI strategy and AI execution, there is a gap.

Not a technical gap. A thinking and translation gap. The messy, cross-functional space where someone needs to answer questions that no model, no tool, and no AI team can answer on their own — questions about workflows, business value, data readiness, risk, governance, ethics, and who this is actually being built for. These aren't engineering questions. They require business leaders, domain experts, legal and compliance voices, and technical people — all in the room together, thinking through the same problem at the same time.

This is the missing middle. Not the AI strategy, which most organizations have in some form. Not the technology, which is more accessible than ever. The structured, cross-functional decision-making layer that sits between them — where use cases get defined, prioritized, and either validated or killed before a single line of code gets written.

Without it, the three patterns above are almost inevitable. Tools and training give people capability without direction. Expert teams build solutions nobody asked for. Distributed experimentation produces activity without outcomes. All three fail at exactly the same point: the moment someone needs to decide whether to build this, for whom, and how to measure it, there is no system in place to answer.

The organizations actually seeing ROI aren't moving faster. They're thinking harder upfront. They're doing the boring work of defining where value is lost, where decisions are slow, where human effort is wasted on low-leverage tasks — and where AI can unlock outcomes that were simply not possible before.

Then, and only then, they're bringing in AI.

That discipline doesn't happen by accident. It requires a structure. And people whose job it is to make that structure work.

That structure is what we call an AI Lab.

What an AI Lab actually is

An AI Lab is not a team. It is not a department, a center of excellence, or a digital transformation workstream. It is not another layer of governance sitting between leadership and execution.

Those things exist in most organizations already. They are not working fast enough.

An AI Lab is an exploration engine — a structured, repeatable system that runs parallel to your core business, not inside it.

This distinction matters more than it might sound. McKinsey's research on organizational ambidexterity makes the point plainly: companies that win over time are the ones that learn to do two things simultaneously — exploit what works today, and explore what will work tomorrow. Most organizations are excellent at exploitation. They have optimized processes, delivery teams, KPIs, and governance built around executing on what's already known. What they haven't built is a parallel system for discovery — for figuring out what's worth building before they commit to building it.

The AI Lab is that parallel system. While the rest of the organization keeps running, the Lab is discovering. It answers one question — what is worth building with AI, and what is not — before anyone spends serious money finding out the hard way.

And that second part is as important as the first. A healthy AI Lab kills more ideas than it ships. That is not a failure mode. It is the point. Every idea killed in a two-day Lab is a six-month project that never happened. Every use case that doesn't survive scrutiny on data readiness, business value, or governance is a pilot that won't need to be quietly shelved later. The kill rate is a metric to be proud of, not hidden.

This is also what separates an AI Lab from what most organizations currently do with AI — a series of disconnected experiments with no shared standard for what success looks like and no mechanism for learning across them. The Lab has metrics. Leadership can see, at any moment, how many Labs have run, how many ideas were generated, how many were killed, how many moved to production, which business units are engaged, and how many people across the organization have been part of the process. It is not a black box of innovation activity. It is a measurable operating model.

An AI Lab is built on three things: AI Discovery Pods, a repeatable cadence of AI workshops, and AI Facilitators. Each one is necessary. None of them works without the other two.


1. AI Discovery Pods — teams formed around problems, not functions

The first instinct in most organizations is to assign AI projects to existing teams — IT, the innovation group, the AI center of excellence. The problem is that AI doesn't respect functional boundaries. A use case that touches customer service also touches data, legal, operations, and product. By the time all those functions weigh in through the normal channels — meetings, reviews, escalations — months have passed and the window has closed.

An AI Discovery Pod breaks that pattern. It is a small, cross-functional team — typically six to eight people — assembled around one specific AI opportunity. It brings together the business owner accountable for outcomes, the domain expert who knows where the work actually breaks down, the technical lead who can assess feasibility, the legal and compliance voice who can define boundaries from day one, and critically, the AI Champion.
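The composition rule above can be expressed as a simple pre-workshop check. This is a minimal sketch, not a Design Sprint Academy tool: the role labels, the `pod_gaps` function, and the people in the example are hypothetical, and only the six-to-eight size and the five required perspectives come from the text.

```python
# Hypothetical pre-workshop check for an AI Discovery Pod.
# Role labels and size bounds mirror the article; the function is illustrative.
REQUIRED_ROLES = {
    "business owner",    # accountable for outcomes
    "domain expert",     # knows where the work actually breaks down
    "technical lead",    # assesses feasibility
    "legal/compliance",  # defines boundaries from day one
    "AI champion",       # already experimenting inside the organization
}
MIN_SIZE, MAX_SIZE = 6, 8


def pod_gaps(members: dict[str, str]) -> list[str]:
    """Return composition problems; an empty list means the pod is ready.

    `members` maps each person's name to their role in the pod.
    """
    problems = []
    if not MIN_SIZE <= len(members) <= MAX_SIZE:
        problems.append(f"pod size {len(members)} outside {MIN_SIZE}-{MAX_SIZE}")
    missing = REQUIRED_ROLES - set(members.values())
    problems.extend(f"missing role: {role}" for role in sorted(missing))
    return problems


# Hypothetical pod: six people, every required perspective present.
pod = {
    "Ana": "business owner",
    "Ben": "domain expert",
    "Chen": "domain expert",
    "Dara": "technical lead",
    "Eli": "legal/compliance",
    "Fay": "AI champion",
}
print(pod_gaps(pod))  # []
```

A role can be filled more than once (two domain experts above); the check only cares that each required perspective is present before the group walks into the room.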

The AI Champion deserves a specific introduction. This person already exists in your organization. They are the analyst who has built GPT workflows nobody asked for. The operations manager who quietly automated half her team's reporting. The HR business partner who figured out how to cut a two-day process to twenty minutes using tools the IT department hasn't officially approved yet. These people are currently invisible to your official AI strategy. The AI Lab makes them central to it.

The Pod is temporary by design. It forms around a problem, does its work, and disbands. It does not require organizational transformation or unnecessary bureaucracy. A focused team, a defined problem, a clear output.


2. AI workshops — where alignment and decisions happen

Cross-functional teams don't think well together by default. Put a CFO, an ML engineer, a compliance officer, and a product manager in a room and you don't automatically get good decisions; you get the loudest voice, the most senior title, or the slowest consensus. That is why the AI Lab runs on specialized workshops, not meetings.

The difference matters. A meeting surfaces opinions. A workshop surfaces decisions. But not just any workshop.

The ones inside an AI Lab are purpose-built for AI specifically — not adapted from generic innovation frameworks or design thinking retreats. They are designed around the specific demands of AI initiatives: cross-functional dependencies, data and governance constraints, feasibility questions that only surface when the right technical and legal voices are in the room at the same time. They follow a clear sequence of steps, with defined outputs at each stage, so that what comes out of the room is not a collection of ideas but a decision everyone has contributed to and can stand behind.

These workshops are built to answer two questions that every AI initiative must resolve before building anything:

The first: what problem is worth solving?

This is addressed through AI Problem Framing — a one-day structured session that takes a cross-functional team from vague AI mandate to clearly defined, prioritized use cases. Not "we want to use AI in finance," but something far more specific: which workflow, for which user, solving which problem, measured how, constrained by what data and governance realities. The output is an AI Use Case Card — a concrete, stakeholder-aligned definition of what to build and why. (For a full walkthrough of how this works, see our step-by-step guide to AI Problem Framing.)
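One way to picture the Use Case Card is as a record whose fields are the questions listed above. The sketch below is an assumption, not the actual card template: the class name `UseCaseCard`, its field names, and the completeness check are hypothetical, chosen only to show why "we want to use AI in finance" is not yet a use case.

```python
from dataclasses import dataclass, field


@dataclass
class UseCaseCard:
    """Hypothetical shape of an AI Use Case Card.

    Each core field answers one of the Problem Framing questions.
    """
    workflow: str        # which workflow
    user: str            # for which user
    problem: str         # solving which problem
    success_metric: str  # measured how
    data_constraints: list[str] = field(default_factory=list)        # data realities
    governance_constraints: list[str] = field(default_factory=list)  # governance realities

    def missing_fields(self) -> list[str]:
        """Names of core questions that are still unanswered (blank)."""
        core = {
            "workflow": self.workflow,
            "user": self.user,
            "problem": self.problem,
            "success_metric": self.success_metric,
        }
        return [name for name, value in core.items() if not value.strip()]

    def is_specific(self) -> bool:
        """True only when every core question has an answer."""
        return not self.missing_fields()


# "We want to use AI in finance" as a card: most questions are unanswered.
vague = UseCaseCard(workflow="", user="", problem="use AI in finance", success_metric="")
print(vague.missing_fields())  # ['workflow', 'user', 'success_metric']
```

The constraint lists are deliberately allowed to start empty: a card can name its workflow and user before the data and governance realities are fully mapped, but it cannot be prioritized until the four core questions are answered.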

The second: what does the solution look like, and will it actually work?

This is where the AI Design Sprint takes over — a focused multi-day process where the team generates solution options, prototypes a direction, and tests it with real users or frontline stakeholders. By the end, the team has evidence — a user-tested concept, a clear build specification, and a decision grounded in reality rather than assumption. (Deep dive: What is an AI Design Sprint?)

The workshops are only as good as the people running through them. A Problem Framing session without decision makers produces use cases the organization will never greenlight. A Design Sprint without AI and technical experts cannot produce solutions worth building. And legal and compliance, if not present from the beginning of both, don't protect the initiative — they kill it at the end, after everyone has already invested time and money. The Discovery Pod exists precisely to prevent this — by the time the group walks into the room, the right composition has already been assembled.

These workshops are not one-off events. They run on a repeatable cadence — the organizational equivalent of a production line for validated AI decisions. And because they are structured and repeatable, they are learnable and scalable. A team trained on these methods can run them consistently across business units, geographies, and problem types. The format doesn't change. The input does. That is what makes it possible to embed an AI Lab across a large organization without reinventing the process every time a new problem surfaces.

3. AI Facilitators — the role that connects everything

The Discovery Pod brings the right people. The workshops provide the structure. But neither works without someone whose job it is to make both happen well. That person is the AI Facilitator.

Most organizations don't have this role. But they know they need it. Open LinkedIn on any given day and you'll find companies hiring for it under different names: Global Head of AI Transformation, AI Adoption Manager, AI Solution Lead. The titles vary. But when you read the job descriptions, the pattern is always the same: someone who can set AI strategy, manage C-level stakeholders, identify AI use cases, understand data architecture, run cross-functional programs, govern compliance, and deliver outcomes.

All in one person. That person doesn't exist. And even if they did, the role would fail — because someone with deep technical expertise will naturally gravitate toward building, toward architecture decisions, model choices, implementation details. That is what deep experts do. But what the organization actually needs at this stage is not another builder. It is someone whose job is alignment, orchestration, and structured decision-making across functions that rarely talk to each other.

The AI Facilitator is that person. Not a unicorn. A focused role with a good working knowledge of AI — enough to ask the right questions, recognize when constraints are being underestimated, and know who to bring into the room — and fully accountable for the outcome. Not accountable for building the solution, but accountable for making sure the organization gets to the right one.

If the wrong problem got framed, if a weak use case got greenlit, if the wrong people were in the room, that is on the Facilitator.

This is how every other mature discipline works. A film director does not operate the camera. A conductor does not play every instrument. The AI Facilitator owns the process. The domain experts, the engineers, the data scientists, and the legal leads own their domains. The Facilitator is the one who makes all of those domains converge on the right decision.

In practice, the AI Facilitator's work spans the full lifecycle of a Lab. Before the workshop: mapping workflows, interviewing domain experts, auditing data readiness, compiling research and governance constraints — so that when the group convenes, they are working with reality, not assumptions. Where the organization has UX researchers or service designers, the AI Facilitator works alongside them. Where it doesn't, they do this work themselves. During the workshop: guiding the group, surfacing the right questions, preventing the loudest voice from becoming the default answer. After the workshop: writing the conclusions, preparing the handoff, coordinating across teams, ensuring what was decided actually moves forward.

This is a full-time job. It should be recognized and resourced as one. Most organizations assume someone can drive it part-time alongside their existing responsibilities. That is one of the most common and costly mistakes in AI adoption. The AI Facilitator is not overhead. They are the connective tissue that turns three good ideas — cross-functional teams, structured workshops, a repeatable cadence — into a system that actually produces decisions.

Every company is hiring AI builders right now. Almost none are investing in the people who create the conditions for good AI decisions. That gap is where most transformation efforts quietly fail.


This is already working.

The AI Lab model is not a framework invented in a consulting whitepaper. It is a response to something organizations are discovering in practice — that the biggest obstacle to AI ROI is not the technology, but the absence of a structured system for deciding what to build with it.

The clearest external validation came in January 2026, when Anthropic — the company building some of the most advanced AI in the world — announced their own AI Labs program. The announcement was notable not for the technology involved, but for the organizational logic behind it. Anthropic recognized that even inside a company whose entire purpose is AI, you still need a dedicated structure for exploration — for bringing the right people together, defining what's worth building, and validating it before committing resources. If the people who build the models need a Lab to decide how to use them, the argument for every other organization becomes difficult to dismiss.

The concept is the same even if the implementation differs. Exploration runs parallel to exploitation. Discovery happens before building. And the structure exists to produce decisions, not just activity.

We have seen the same logic play out with our own clients.

The largest construction company in the United States came to us with a specific ask: help us build our first three AI agents, not as pilots but as production-ready solutions our IT teams can scale. They had already done the bottom-up work. Organization-wide OpenAI licenses. Experimentation across teams. Individual champions building things in their spare time. All of it useful. None of it cohering into something the business could act on at scale.

We started at the top. A one-day AI Strategy Workshop with the most senior leaders in the company — board level, people who had spent their careers running some of the largest construction projects in the world. The question we put to them was not "what can AI do?" but "where should we focus our AI ambition?" By the end of the day, the answer was a single word: safety. Not a vague aspiration. A strategic mandate with buy-in from the people who controlled the budget and the direction.

With that mandate, we ran an AI Problem Framing workshop with a cross-functional team of safety directors, L&D leads, and field operations managers — people who touched safety from every angle. In one day, three AI use cases emerged. One around training. One around data. One around detecting live safety hazards on active construction sites in real time.

Then the AI Design Sprint. Three teams, three use cases, three days. Each team had a facilitator, an AI expert, and domain specialists who knew construction safety from the inside. They worked with real data — safety policies, incident reports, site documentation. By the end of day three, each team had a working proof of concept, tested against real conditions.

That was two years ago. The company now has over 400 AI agents in production and a deep partnership with OpenAI. The three use cases we defined in that first Lab became the foundation everything else was built on.

The Lab did not replace their technical teams. It gave those teams something to build from.

How to start

The good news: this does not require an organizational transformation. No restructuring. No multi-year program. No massive upfront investment. You can start with a single AI Lab, focused on one specific problem, and build from there.

Step 1: Run your first Lab

The starting point is one problem worth solving. Not the most ambitious AI opportunity in the company — a real, specific, painful problem with a business owner who cares about the outcome and a cross-functional team that can be assembled around it.

At Design Sprint Academy, we run this as a focused engagement, typically between two and five days depending on scope. Day one is always AI Problem Framing: a structured session that takes the team from vague AI mandate to clearly defined, prioritized use cases. What follows is the AI Design Sprint, lasting between two and four days depending on the complexity of the use case and how far the team needs to go before it can make a confident build, pause, or kill decision. By the end, the organization has a real decision on the table. And critically, they have experienced what a well-run Lab actually feels like, which is often the most important output of the first one. But one Lab does not make a transformation.

Step 2: Build internal capability

One external facilitator running Labs indefinitely is consulting, not transformation. The goal from day one is internal capability — people inside your organization who own this work, know the methodology, and can run Labs without external support.

This is something we feel strongly about at Design Sprint Academy, and it shapes how we work with clients. Rather than becoming a permanent external dependency, we train internal AI Facilitators — people inside your organization who learn to run the full methodology themselves. They leave not just with the knowledge of how to facilitate AI Problem Framing and AI Design Sprints, but with everything needed to do it consistently: playbooks, facilitation materials, workshop agendas, and supporting resources. The goal is that your facilitators can run Labs independently, with the same quality and structure, whether the problem is in finance, operations, legal, or product.

Step 3: Run Labs under supervision

Knowledge from a training program and confidence in a real organizational environment are two different things. The first Labs your internal facilitators run should not be solo flights. We coach newly trained facilitators through their first engagements — present in the room or available for preparation and debrief — until the process is internalized, the facilitation is tight, and the methodology has been adjusted to fit your specific organizational context.

This supervised practice phase is where the real capability transfer happens. It is also where the Lab gets refined — every organization is different, and the first few sessions under expert guidance reveal the adjustments that make the model work specifically for you. By the end of this phase, your internal facilitators are not following a script. They own the process.

From here, the Lab runs on its own cadence. New problems surface, pods are assembled, workshops run, decisions are made. The pipeline of AI opportunities grows. And because the process is consistent and measurable, leadership has visibility into what the Lab is producing — not just activity, but outcomes.

Why this works

There is no shortage of ways to spend money on AI adoption. Consulting engagements, vendor platforms, training programs, centers of excellence — the options are endless and the invoices are large. Most of them produce something. Few of them produce something that lasts.

The AI Lab model works for a specific reason: it builds capability inside the organization, not outside it.

✅ Internal facilitators know the context — and that changes everything

An external consultant arriving to run an AI initiative needs weeks just to get oriented: who the real decision makers are, which data actually exists versus which data people think exists, which business unit is ready to move and which will create friction, which VP will greenlight an idea and which will quietly kill it in the next review cycle.

An internal AI Facilitator already knows all of this. They know how the organization operates. They know the political landscape. They know which domain experts to trust and which assumptions to challenge. That context advantage doesn't just produce better decisions — it produces them faster. And every Lab they run makes the next one sharper. The knowledge compounds in a way that external support never can.

✅ You control your own destiny

When AI discovery is owned internally, the organization stops being a passenger in its own transformation. The Lab becomes a permanent exploration engine — running on a repeatable cadence, generating a continuous pipeline of AI opportunities, killing the weak ones early and advancing the strong ones with confidence.

This is the difference between a program and a capability. A program has a start date, an end date, and a final report. A capability runs indefinitely, improves over time, and produces outcomes that accumulate. The AI Lab, when properly embedded, becomes the system through which the organization answers the question "what should we build with AI next" — not once, but continuously.

✅ Vendors belong in the room, not at the front of it

Most large organizations work with IT vendors and implementation partners to build AI solutions. That doesn't change with an AI Lab. What changes is where vendors fit in the process.

In the Lab model, vendors can be integrated into Discovery Pods at the kickoff — bringing their technical expertise into the problem definition and solution design process. But the organization retains ownership of direction. The use case definition, the prioritization, the decision to build or kill — these stay internal. This matters more than it might sound. Vendors are often more interested in securing the build contract than in determining whether the right thing is being built. An internal AI Facilitator running a structured Lab is the check on that dynamic. The vendor's expertise gets embedded. The organization's judgment stays in control.

✅ You avoid the Big Consulting trap

Large consulting firms are good at strategy. They will align your leadership, produce a well-structured AI roadmap, and deliver a presentation that looks authoritative and comprehensive. What they rarely do — because it is time-consuming, unglamorous, and outside their billing model — is go deep into the actual workflows. The messy, ground-level work of mapping how things actually get done, where value is actually lost, and what AI could actually change. That work is where real AI use cases come from. And it is precisely what the AI Facilitator does, as a full-time function, embedded in the organization, before every Lab.

The result is not a strategy deck that needs another six months of translation into something actionable. It is a decision, made by the right people, grounded in reality, ready to move.

✅ The Lab is future-proof. The technology isn't.

AI is moving fast. Models that were state of the art six months ago are already being replaced. Tools that organizations built workflows around are being disrupted by something better, cheaper, or more capable. Every bet on a specific technology carries the risk of obsolescence — and in AI, that risk materializes faster than in almost any other domain.

The AI Lab is immune to this. Not because it is disconnected from the technology, but because it is not a bet on any specific technology. It is a system for evaluating technology — for bringing the right experts into the room, experimenting with new models and solutions, and deciding what is worth building with whatever is available right now. When a better model arrives, the Lab processes it. When a new capability emerges, the Lab figures out where it applies. The exploration engine doesn't care what it's exploring. It just runs.

This reframes one of the most common objections to building an AI Lab: why invest in a system when everything is changing so fast? The answer is precisely because everything is changing so fast. Organizations that have invested in tools, vendors, or training programs tied to specific technologies will spend the next decade playing catch-up — retooling, retraining, and re-platforming every time the landscape shifts. Organizations that have invested in a structured system for deciding what to build will simply point that system at whatever comes next.

The technology will keep changing. The need to decide what to do with it won't.

✅ What gets measured gets built

One of the most common frustrations in enterprise AI is the inability to answer a simple question: what is this actually returning? Most organizations cannot answer it — not because AI isn't producing value, but because their AI activity is too fragmented to measure coherently. Pilots here, experiments there, tools adopted by some teams and ignored by others. No shared baseline. No consistent output. No way to connect investment to outcome.

The AI Lab solves this because it is not a black box. It produces metrics that leadership can track in real time — how many Labs have run, how many use cases were defined, how many were killed, how many moved to production, which business units are engaged, how long it takes from workshop to deployment. These numbers tell a story that no collection of pilots or experiments can tell: not "we are doing AI" but "here is our exploration engine, here is what it is producing, and here is where we are going next."

Leadership doesn't have to guess at the state of AI adoption. They can see it.

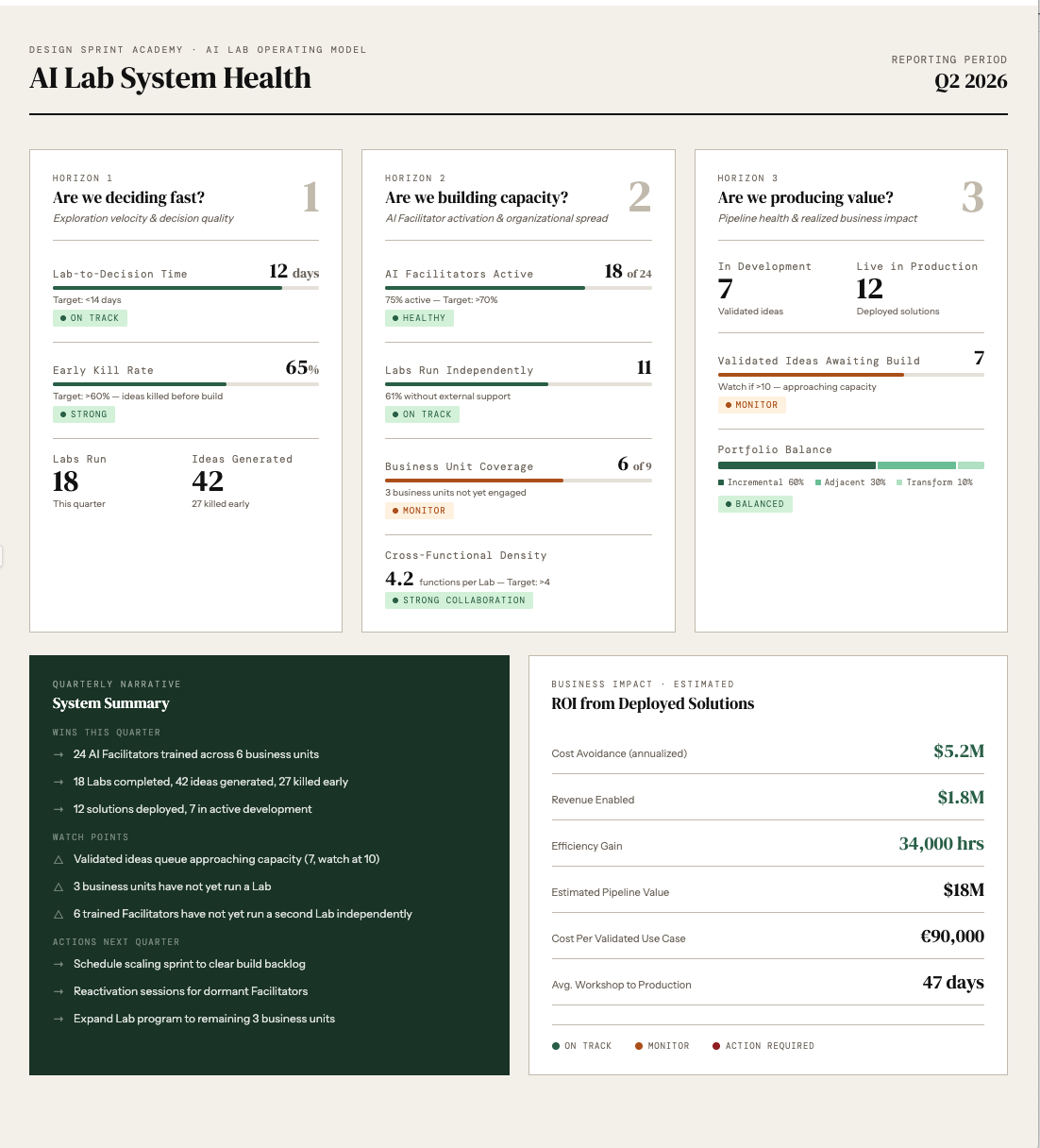

The dashboard that emerges from a running AI Lab tracks three horizons.

The first is exploration velocity: how fast are Labs running, how many ideas are being generated, and — critically — how many are being killed early. A kill rate above 60% is a sign of a healthy system, not a failing one. It means the Lab is doing its job: filtering out weak use cases before anyone commits serious resources to them.

The second horizon is internal capacity: how many AI Facilitators are trained and active, how many Labs are running without external support, and how many business units have been reached. This is the spread metric — the one that tells leadership whether the Lab is becoming organizational infrastructure or staying confined to one team.

The third horizon is where the CFO pays attention: business impact. Cost avoidance, revenue enabled, hours saved, and pipeline value from ideas currently in development. These numbers require honest estimation, not false precision. But they are estimable — and making them explicit, even conservatively, is what transforms the Lab from a cost center into a measurable investment.
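The first two horizons are simple ratios and counts over a log of Labs. A minimal sketch, assuming a hypothetical per-Lab record (`LabRecord`, its fields, and the example numbers are illustrative, not a real tool's schema or client data); the only figure taken from the text is the 60% kill-rate threshold. Business impact is left out because, as noted above, it rests on estimation rather than counting.

```python
from dataclasses import dataclass


@dataclass
class LabRecord:
    """Hypothetical log entry for one completed Lab."""
    business_unit: str
    ideas_generated: int
    ideas_killed: int
    moved_to_production: int
    external_support: bool  # True if an outside facilitator ran or co-ran it


HEALTHY_KILL_RATE = 0.60  # "above 60%" threshold quoted in the text


def exploration_velocity(labs: list[LabRecord]) -> dict:
    """Horizon 1: how many Labs ran, what they generated, what they killed."""
    generated = sum(lab.ideas_generated for lab in labs)
    killed = sum(lab.ideas_killed for lab in labs)
    kill_rate = killed / generated if generated else 0.0
    return {
        "labs_run": len(labs),
        "ideas_generated": generated,
        "kill_rate": kill_rate,
        "moved_to_production": sum(lab.moved_to_production for lab in labs),
        "healthy": kill_rate > HEALTHY_KILL_RATE,
    }


def internal_capacity(labs: list[LabRecord]) -> dict:
    """Horizon 2: independence from external support and organizational spread."""
    return {
        "labs_without_external_support": sum(1 for lab in labs if not lab.external_support),
        "business_units_reached": len({lab.business_unit for lab in labs}),
    }


# Hypothetical portfolio of three Labs.
labs = [
    LabRecord("Finance", 10, 7, 1, external_support=True),
    LabRecord("Operations", 8, 5, 1, external_support=False),
    LabRecord("Legal", 6, 4, 0, external_support=False),
]
velocity = exploration_velocity(labs)
print(f"{velocity['kill_rate']:.0%}")  # 67%, above the 60% threshold
print(internal_capacity(labs))
```

The kill rate is computed over ideas rather than Labs, so a single Lab that generates many ideas weighs more than one that generates few; a per-Lab average would be an equally defensible design choice.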

There is one metric worth highlighting specifically because most organizations never calculate it: cost per validated use case.

A Discovery Pod of six to eight senior people, running for two to five days, represents a real investment: typically €70,000–90,000 per Lab, fully costed once you account for people's time, facilitation, and preparation work. Not every Lab produces a validated use case (many ideas get killed, which is the point), while some produce more than one. So the true cost per validated use case, averaged across a portfolio of Labs, sits around €90,000. That number sounds significant until you put it next to the alternative: a six-month pilot with a vendor, a dedicated build team, and a steering committee that meets monthly. By the time an organization running a pilot the conventional way discovers it isn't working, it has typically spent ten to twenty times that amount, and still has nothing in production. Spending €90,000 to know, with evidence, whether something is worth building is not an expense. It is the cheapest decision a large organization can make.
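The cost-per-validated-use-case figure is plain division: total fully costed spend on Labs over the number of use cases that survived validation. The sketch below uses a hypothetical portfolio (nine Labs at €80,000 each, the midpoint of the range above, yielding eight validated use cases between them); those inputs are illustrative, not client data. The 10 to 20 times multiplier for a conventional pilot is the one stated in the text, and the function names are made up for this example.

```python
def cost_per_validated_use_case(lab_costs_eur: list[float], validated: int) -> float:
    """Total fully costed Lab spend divided by the number of validated use cases."""
    if validated <= 0:
        # Every idea so far was killed: the ratio is undefined, not zero.
        raise ValueError("no validated use cases yet")
    return sum(lab_costs_eur) / validated


def conventional_pilot_range(per_use_case_eur: float, low: float = 10, high: float = 20) -> tuple[float, float]:
    """Spend range for discovering failure via a conventional pilot (10x to 20x, per the text)."""
    return per_use_case_eur * low, per_use_case_eur * high


# Hypothetical portfolio: nine Labs at EUR 80,000 each, eight validated use cases.
per_case = cost_per_validated_use_case([80_000] * 9, validated=8)
print(per_case)                            # 90000.0
print(conventional_pilot_range(per_case))  # (900000.0, 1800000.0)
```

Note the denominator: a higher kill rate pushes the cost per validated use case up, which is why the figure is only meaningful averaged over several Labs rather than read off a single one.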

That visibility changes the conversation at the leadership level. AI stops being a cost center with uncertain returns and becomes a measurable pipeline with a clear operating model behind it. And that is what makes continued investment easy to justify — not faith in the technology, but evidence from the system.

The question everyone is asking is the wrong one

Every executive conversation about AI eventually arrives at the same question: how do we get more out of it? More ROI, more use cases in production, more proof that the investment is paying off.

It is the wrong question. Or rather, it is the right question asked too late — after the licenses have been purchased, the training programs have run, the pilots have launched, and the results have disappointed.

The right question is earlier. Not "how do we get more out of AI" but "do we have a system for deciding what to do with AI in the first place." A system that brings the right people together around the right problems. That produces decisions, not just ideas. That kills bad use cases early instead of six months into a pilot. That builds organizational knowledge with every Lab it runs. That gives leadership visibility into what is being explored, what has been validated, and what is moving toward production.

Most organizations don't have that system. They have tools, training, strategy decks, and good intentions. They have activity. What they don't have is a structured, repeatable way to decide what's worth building — and that gap is where most AI investment quietly disappears.

The AI Lab fills that gap. Not as a transformation program. Not as another layer of governance. As a parallel engine that runs alongside the business, produces decisions at speed, and compounds in value the longer it runs.

The technology is ready. The models are good enough. The tools are accessible. The only remaining question is whether your organization has a system for deciding what to build with them.

If the answer is not yet — that's where we start.

Work with Design Sprint Academy

We help organizations build their AI Lab from the ground up — starting with a first facilitated Lab, training internal AI Facilitators, and coaching teams through their first independent sessions until the capability is fully embedded.

If you're ready to move from AI activity to AI decisions, book a call with us and we'll show you exactly where to start.