Design Sprint Academy - What we are, and what we're not

Most leaders who reach out to us already have other options on the table. A workshop facilitator they’ve worked with before. A design agency their COO trusts. A large consultancy already in the organization for something else. And, sitting quietly in the background, the option to just run it internally with the team they already have.

Those options are all legitimate. Some of them are very good. We’ve seen strong work come from each of them in the right context, for the right kind of problem.

So before you decide whether to work with us, we want you to be able to answer two questions clearly for yourself: what exactly am I getting out of working with Design Sprint Academy (DSA)? And is this what I want and value? This article is our honest answer.

What Design Sprint Academy is about

We help organizations build the internal capability they need to make high-stakes innovation and AI decisions.

That capability is built in three layers, each one a deeper version of the same core offer.

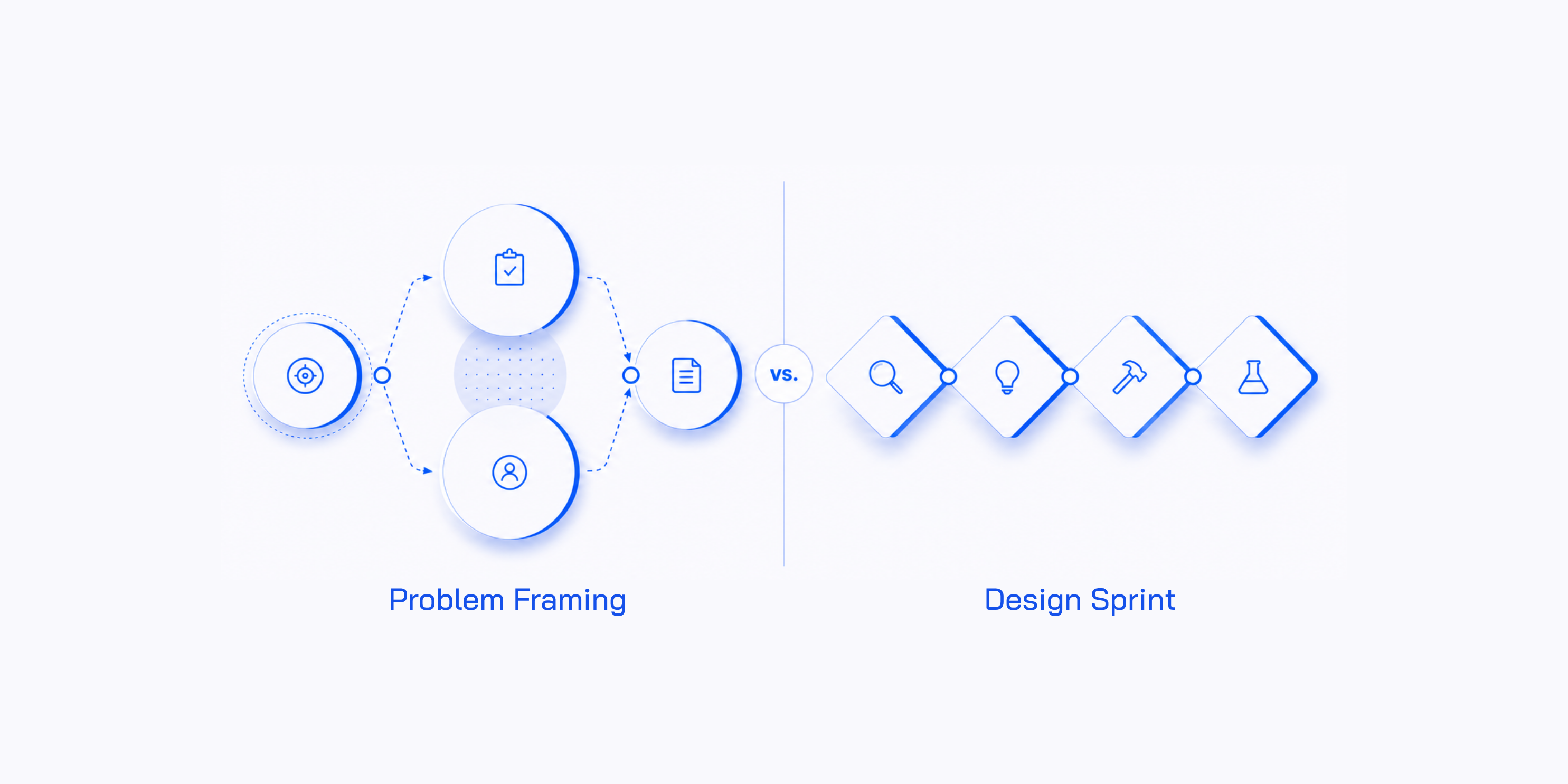

- Facilitated decision moments. Problem Framing, Design Sprints, AI Problem Framing, AI Workflow Sprints. Structured workshops that take a group of decision-makers from uncertainty to a clear, defensible decision in one to four days. This is usually how clients first experience our work — the point where the methodology proves itself inside their organization, on a real problem, with their own people.

- AI Labs. A repeatable internal system for deciding which AI ideas are worth building, validating them quickly, and killing the ones that aren’t. We install the Lab, run the first sessions ourselves, then hand it back. The Lab is the durable version of a facilitated session — a system the client owns that produces decisions on an ongoing cadence, not just once.

- AI Facilitator training. The deepest layer. We train the people inside an organization to run all of the above without us — the facilitators who will own the methodology, the AI Use Case Cards, the kill rate, the Sprint Brief, and the practice of producing decisions the rest of the organization trusts.

That’s the visible structure. Underneath it, what we actually sell is simpler: the capability to make better decisions, repeatedly.

Internally owned decision quality.

Not workshops. Not roadmaps. Not transformation programs.

We also want to be clear about what we are not. We are not in the business of running good-vibes-only workshops. We are not optimizing only for how the day feels in the room, how engaged the participants seem, or how energetic the post-workshop recap email sounds. Those things matter, and they are part of the experience, but they are not the full product. The product is rigor, decision quality, and a system the client can run independently. A session that felt great but produced a decision the team cannot defend three weeks later is, by our definition, a failed engagement.

That is why we do not run templated workshops. Every engagement is designed around the client’s actual context: their industry, their stakeholders, their political dynamics, the work that came before, and the work that needs to come after. We review existing research. We speak with the Decider beforehand. We design the artifacts the room will need. The training programs work the same way — the curriculum adapts to the cohort, the organization, and the kinds of decisions those facilitators will need to run inside their own company.

The same logic explains why the three layers are structured the way they are.

A facilitated session delivers decision quality once.

An AI Lab delivers it repeatedly, inside a system you own.

Training delivers it permanently, by building the people who will keep running both.

What you get if you’re trying to turn AI investment into AI in production

This is usually what the situation looks like: you’re one or two years into AI investment. There are pilots underway. There’s probably a Center of Excellence. The board is asking what the organization has to show for it, and the honest answer is a lot of scattered activity that hasn’t compounded into anything tangible.

We see this pattern often enough to have named it. Pilots running in parallel without a system for deciding what deserves investment, what should be killed, and what should be deferred. An internal AI team — often technically strong — making claims the business can’t easily interpret and decisions the rest of the organization doesn’t fully trust. A sophisticated operating model — matrix structures, embedded teams, value streams — creating the conditions for collaboration without guaranteeing the quality of the decisions made inside it.

The instinct, when this pattern shows up, is to add more of what isn’t working. Another round of training. Another pilot. Another consultancy. None of those address what is actually missing: a structured, repeatable layer that sits between AI strategy and AI execution, where cross-functional teams decide what is worth building before anyone builds it. That layer is where most AI investment quietly disappears — and where the work we do begins.

What you get from working with us in that situation is a discovery engine.

A system that produces decisions, not just a single decision. What we install is the AI Lab: a repeatable internal capability for deciding which AI ideas are worth building, validating them quickly, and killing the ones that aren’t. The Lab typically runs at a kill rate above 60%, meaning more than six in ten ideas are stopped before anyone builds them, and that ratio is where the system’s value becomes measurable. Every idea killed in a two-day decision moment is a six-month build that never had to happen.

A methodology your organization owns. Most AI engagements end with a roadmap and a thank-you. Ours end with the methodology installed inside your organization, your AI Facilitators trained to run the next Lab without us, and a handoff that doesn’t depend on John or anyone else here personally. By the end, you own this. We step back. That is the opposite of how most consulting works, and it’s also why Turner Construction now runs four hundred internally built AI applications without us in the room.

Capability your organization will run for years. What an AI Investment Owner is really buying, in the end, is not a facilitated session or even a Lab installation. It is the people inside the organization who will keep running it. We train your AI Facilitators alongside the work, so by the time the engagement ends, the methodology lives inside your team. They run the next Lab. They onboard the next AI Discovery Pod. They produce, every quarter, the structured narrative you take to your executive leadership team (ELT): the use cases the system surfaced, the ones killed, the ones built, the kill rate, the capability now inside the organization. That is the artifact a board recognizes as governance rather than theater. And it is something you keep generating without us in the room.

What you get if you have a high-stakes decision to make and a deadline pressing on it

At the team or function level, the situation looks very different. You’re not leading an enterprise transformation. You’re trying to solve this — a strategic call your VP is pushing on, a misaligned room that needs to land in the same place by the end of the quarter, a product or market decision with real money attached that needs to be made well, and quickly.

You probably already have facilitators on staff. You may have run Design Sprints or Problem Framing before. The question is not whether the methodology works. It’s whether this specific session — with these stakeholders, this amount of political weight, and this much riding on the outcome — is one your internal team can run cleanly. Often, it isn’t. That’s why you’re reading this.

What you get from working with us in that situation is not a system. It’s one critical moment, delivered at a level your internal team may not be able to reach on its own.

An outsider who can ask the question nobody internal is free to ask. A senior internal facilitator with political stakes in the outcome can’t run the room cleanly, and the room can feel it. That dynamic deserves its own treatment, and we go into it later in the section on running this work internally. The short version: we arrive without history, without a stake in the outcome, and with permission to push on the issues internal facilitators often can’t.

A facilitator your team will respect. A senior, opinionated room can spot a generic facilitator in the first ten minutes. The session you are calling us about is one your team will judge harshly if it doesn’t feel built for them. We earn the room by arriving with the pre-work done — the research reviewed, the artifacts prepared, the Sprint Brief aligned with the Decider in advance — and by running a method that produces an outcome, not just a feeling. The day ends with a Problem Statement the Decider stands behind, a tested prototype, a yes-or-no readout from five customer interviews — not sticky notes and a vague list of actions.

A path to capability, not dependency. This follows the same pattern as our larger AI Lab work, just on a smaller scale. For some clients, the one session is the engagement — we run it well, hand over the artifacts, and step out. For most, the session is the start. They come back not because they need us to facilitate again, but because they want the methodology in their team. From there, the path is clear: training, a Facilitation Kit your team keeps, and optional advisory afterward. By the second or third engagement, your facilitators are running it themselves. The capability stays embedded even when the people who first learned it eventually move on — which is where most internal training breaks down. The next high-stakes moment doesn’t need to come back to us, and we’d argue it shouldn’t.

How we’re different from a workshop facilitator

There are excellent workshop facilitators in the world. Many of them are our friends. Some are part of our Certified Design Sprint Masters community. A few have run sessions for the same clients we’ve worked with, just on different problems.

The difference is in what’s actually being sold.

A workshop facilitator sells the room — their time, their facilitation skill, the experience of being in a well-run session. The output of that engagement is often how the day felt. That can be real and valuable. Some decisions do not need more than that.

We sell what comes out of the room. That is not just an artifact — a Problem Statement, an AI Use Case, a tested prototype, a build/pause/kill decision — although those matter. It is also the alignment around that artifact: the Decider standing behind the call, the team leaving with the same understanding of what was decided and why, and the room able to defend that decision to the rest of the organization without having to re-litigate it. The artifact has a defined structure and survives beyond the day. The alignment behind it is what makes it actionable. Without that, you have a neatly formatted document that no one acts on. With it, you have a decision the team can move forward with.

There is a workshop facilitator stereotype that has almost become a cliché: the person who sketches the agenda on a napkin during the flight to the client, arrives with a confident smile, runs the room well enough that everyone leaves feeling good, and flies home. That person exists. They are often charming and frequently effective. But the work they do and the work we do are not the same job, and the difference shows up in outcomes and in small operational details.

The biggest one is that we do not simply show up and hope the room produces magic. Before the session, we do the groundwork many facilitators skip: reviewing existing research; understanding the current context, the previous attempts, and the future the team is trying to reach; and building whatever artifacts the room will need in order to move quickly, such as customer journey maps, service blueprints, personas, minimum viable segment definitions, and stakeholder maps. The work that determines whether a session reaches alignment in the first hour or the last one happens before anyone enters the room.

From there, the in-room mechanics follow. We always have a named Decider. We always onboard both the team and the Decider before the session so we know we have a strong team, the right team, and a group capable of solving the challenge or making the decision in the room. We always require a Sprint Brief or equivalent, because it catches a significant share of alignment issues in advance. We always test with users on Day 4 of a Design Sprint, even when the team would rather not, because that test is the gate for decision quality.

If your problem genuinely only needs a well-facilitated conversation, hire a workshop facilitator. If your problem needs a defensible decision the team will actually act on without reopening the debate later, you need our system.

How we’re different from a design agency

Most design agencies run very good workshops. We share roots with a number of them — the same Jake Knapp lineage, the same affection for sticky notes, the same belief that concentrated time with a capable team can achieve more in a week than months of meetings — but the work we do inside those sprints is different from what most agencies are set up to do. There are three meaningful differences, and the first explains the others.

Design agencies are, by nature, designer-led. Their commercial center of gravity sits in the build phase — the design work, the prototyping, the implementation that follows the sprint. In that model, the Design Sprint is usually an entry point: a way to get in the door, demonstrate the team’s capability, and earn the larger design or build engagement that comes after. The sprint opens the relationship. It is not the business itself.

For us, the decision moment is the business. The Problem Framing session, the Design Sprint, the AI Workflow Sprint — these are not the prelude to a larger engagement. They are the engagement. We do not sell a build phase. We do not have a design studio waiting in the background. The decision the room produces is the product, which is why we put so much rigor into making sure that decision is sound. When the decision itself is the contract, you build a very different system around it than when the decision is simply a route into a larger piece of work.

The second difference is the kind of challenges we tackle. Most agencies use Design Sprints for product and UX problems — a startup testing a new feature, an internal team redesigning a user flow, a digital product trying to get to market faster. That work matters, and the original Design Sprint was built for exactly that. Our work sits one level higher. The challenges we work on usually involve cross-functional senior stakeholders making decisions about strategy, organizational design, AI investment, and capability — problems where the answer affects multiple business units, regulated processes, significant engineering commitments, or political dynamics inside the company. The complexity is not primarily about the user flow. It is organizational and strategic. And it shapes everything downstream, including our training programs, where we prepare people to step into senior stakeholder rooms with millions of euros of decision weight on the table and produce a defensible call. Different challenges, different stakes, different curriculum.

The third difference follows from the first two. We develop and upgrade the methods ourselves because this is all we do. When existing methods cannot reach a problem we are seeing in the rooms we run, we build a new one.

We created Problem Framing first, as a separate method to decide what is worth solving before a Design Sprint is used to validate how to solve it. We launched it at Google in 2018, when Google reached out to us to train their internal Design Sprint Academy. Their facilitators were running sprints beautifully, but the challenges entering those sprints were not always the right ones — because sprints solve problems, they don’t tell you which problem to solve. That missing upstream layer became Problem Framing. More than five thousand practitioners have come through the training since.

From there, we took the original 2016 Design Sprint and evolved it into Design Sprint 3.0, an updated format designed to address the gaps the original method showed when run inside larger organizations rather than startups.

Then, when the AI era arrived and the existing methods could no longer handle problems involving probabilistic systems, AI agents, and AI workflow redesign cleanly, we built two more: AI Problem Framing and AI Workflow Sprint.

Most design agencies run methods developed by someone else. We develop the methods ourselves.

How we’re different from a big consultancy

The large consulting firms — McKinsey, BCG, Bain, Accenture, Deloitte — sit in a different part of the innovation lifecycle than we do. The clearest way to understand the difference is this: we work at the front end — the point where a team is deciding what to build, what is worth investing in, and what needs to be validated first. The big firms tend to work further downstream, on transformation — reshaping a business, a function, or a technology stack at scale, often over the course of a year or more.

In AI, those lines can blur. Some of our clients already have a large consultancy involved — a strategy team helping identify use cases, or a transformation team building the platform. In those cases, the question is not whether to replace the firm. It is whether the work underway is actually producing decisions, or simply producing roadmaps that postpone them. From the outside, those two things can look very similar.

The big firms are not all identical. Some lean more heavily toward strategy and recommendations. Others lean toward execution and technology delivery. Most move between both, depending on the engagement. But they tend to share the same general shape: months-to-years engagements, large teams, partner-level relationships, and a deliverable that is either a strategic plan, a built system, or both. The label varies. The category of purchase is broadly the same.

We work much earlier in the process — days to weeks, not months to years. The output is a tested artifact, an aligned team, and a system the client can own from that point forward. Over time, the more durable output is the capability to keep doing this work without us.

All of these are valid investments, and the real question is which one fits the situation. A strategy firm tells you what to build — hire one if your board needs the credibility of a top-tier name to move on a major decision. A transformation firm builds it for you, often over years — hire one if you need a multi-year program built and operated by an external delivery team. We help you find out whether it should be built at all, and what to build first if the answer is yes — hire us if you need a working AI prototype validated with five real users by next month so you can decide whether to fund it, or a system inside your organization that can produce decisions like that repeatedly. Most large organizations need all three at different stages.

The mistake we see most often is using a months-long engagement to answer a days-long question — and ending up with a beautiful slide deck instead of a decision.

How we’re different from running it internally

If you already have a strong internal team — agile coaches, innovation managers, strategy consultants, UX or service designers, product managers, IT leads, design thinkers — you can absolutely run versions of our methods yourselves.

We are not the alternative to doing this internally. We are how organizations become capable of doing it internally.

That said, even with a strong team, there are still two reasons you may want us in the room.

The first is the facilitator’s dilemma. It is not that a skilled internal facilitator can’t see the right answer. They can. The problem is that they can’t take the risk of pushing the room toward it. They have political relationships in the room. They have a view on what the answer should be. They have something to lose if the decision lands one way rather than another. They will be in the building tomorrow, and the day after, and at the next quarterly review. Asking the hard question costs them something, and the room can feel the moment they hold back. An external facilitator can ask that question because we have nothing to lose by asking it. We arrive without history, and we leave without consequences. That makes us safer to use for the moments where the question that needs asking is one nobody internal is free to ask.

The second is focus. We live inside these methods. Running them, refining them, evolving them, training others in them — that is the work. Your internal team, by design, has a different job. They are trying to keep the organization moving, deliver the roadmap, hit the quarter, manage people, and work through the politics that come with all of that. Facilitating high-stakes decision moments is one responsibility among many, and rarely the one they are rewarded for most directly. We are more like a specialist restaurant with one menu perfected over time. Your internal team is the kitchen at home, where dinner still has to reach the table whether or not anyone has the time or energy to cook. Both matter. They are just not the same job. When the decision in front of you is one that will compound for years — a major AI investment, a strategic shift, an organizational redesign — bringing in the people who do only this, every day, is usually worth it.

If you have a senior internal team and the political room to use it, you can run versions of this work yourselves, and many of our clients do after we have trained their facilitators. The reason to bring us in for the moments that matter most is the same reason a senior surgeon does not operate on their own family: not because they are incapable of doing it, but because the room needs someone with no stake in the outcome and a method sharpened over hundreds of similar situations. Neutrality and methodology, not speed.

How to know if we’re the right fit

There are situations where we are not the right choice. If what you need is a team bonding session, a culture or connection day, or an alignment workshop on a topic where the decision is already made, a good workshop facilitator is built for that work. If your board needs a polished strategy document, that is a different kind of purchase. If the problem genuinely requires a multi-year transformation built and operated by an external delivery team, that is a different kind of purchase too. Knowing which option fits the situation in front of you is the real work — and we would rather you make that decision clearly than choose us by default.

If you have read this far, you are probably the kind of person who takes decisions seriously. The kind who would rather pay for rigor than for theater, who would rather walk out of a room with something defensible than something pleasant, and who is trying to build something inside your organization that will outlast your tenure in it.

That is the client we want to work with. And that is the work we are trying to do. If any of this resonates, we should talk.