Why AI-first workflow thinking is the wrong starting point

Self-driving cars are no longer a wild idea. Waymo operates fleets in Phoenix, San Francisco, and Austin. The technology works. In the right conditions, autonomous vehicles navigate traffic, handle intersections, and complete journeys without a human behind the wheel.

But they only work in cities that have been specifically prepared for them.

Before a self-driving car can operate reliably, the city needs high-resolution maps of every street, precisely marked lanes, consistent signage, and clearly defined geofenced zones. The car's intelligence depends on the environment being legible to a machine: explicit, consistent, and free of the ambiguity that a human driver handles instinctively. Take the same vehicle to a city that hasn't been mapped and prepared, and it can't function. Not because the technology failed, but because the environment it needs to operate in doesn't yet exist.

The car is ready. The city has to be built for it first.

Most organisations are doing the equivalent with AI right now: sending the car into a city that was never mapped.

The default approach to AI adoption has been to find a workflow that looks automatable, introduce AI into it, and see what happens. If the pilot looks promising, scale it.

The problem is that the workflow was never mapped before the AI arrived. Nobody in the room agreed on how it actually operated. The sequence of steps exists somewhere between the org chart and institutional memory — part documented, part assumed, part held in the heads of the people who've been doing the job for years. The AI gets introduced into that fog and is expected to perform.

And for a while, in controlled conditions, it does.

The pilot looks good. Then scaling begins, and the fog becomes a problem. The AI handles the steps everyone agreed on. It stumbles on the exceptions nobody documented. It automates a bottleneck in one place and creates a new one somewhere downstream. Adoption stalls. The numbers come back disappointing. The organisation concludes that the technology wasn't ready — when the real issue is that the workflow was never understood well enough to redesign it.

This is not a technology problem. It's a layout problem. The workflow was designed for humans — and nobody redesigned it before the AI arrived.

Why knowing your workflow and having it mapped are two different things

At this point, a fair question arises: why would anyone not know their own workflow?

If you are the one doing the work, you know it. You've done it hundreds of times. You know which steps matter, where things slow down, what to do when something unexpected happens. That knowledge is real and it is valuable.

But knowing your workflow and having it mapped are two completely different things.

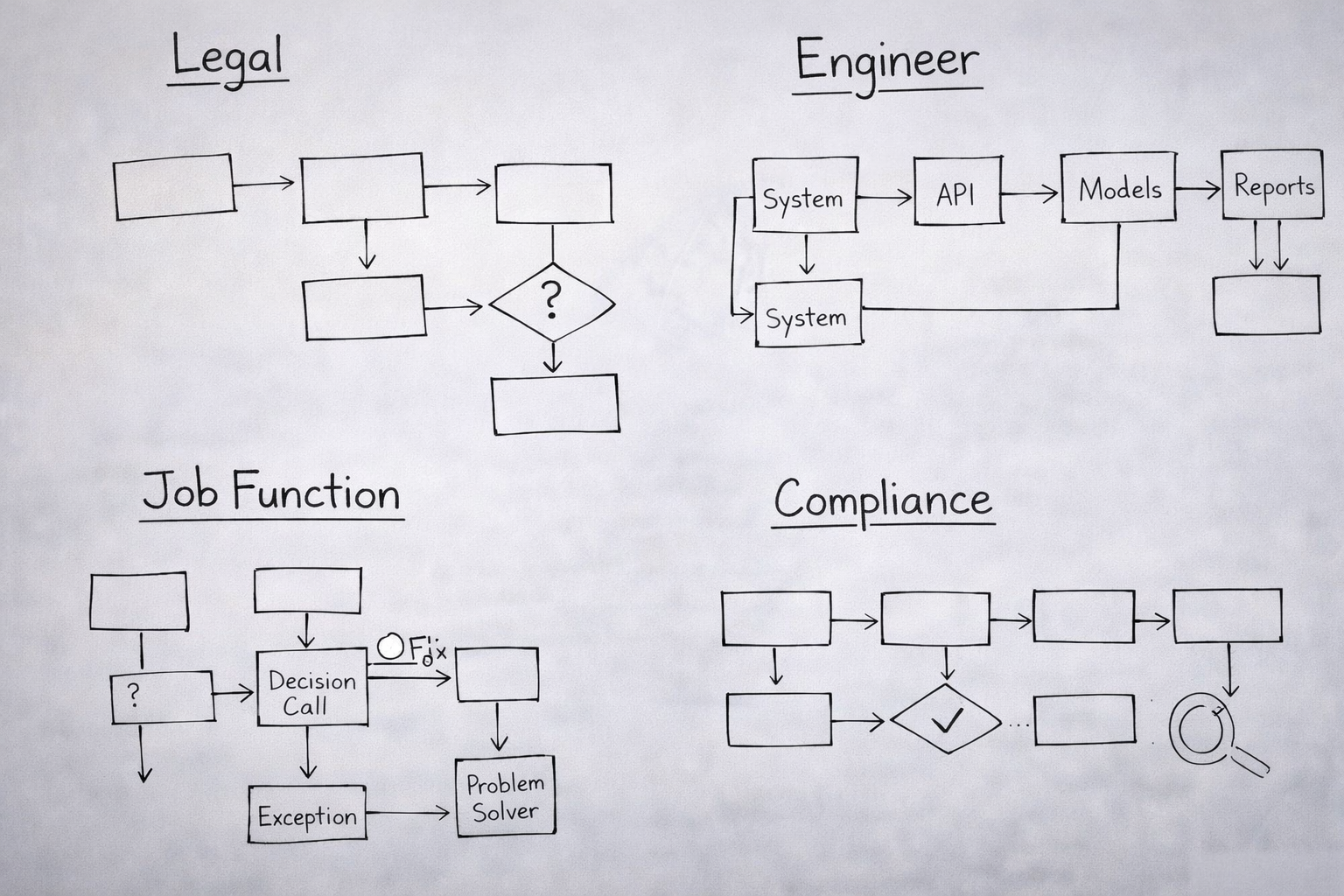

Does your legal team know it? Does your AI or ML engineer — the person who will be building the system that sits inside that workflow — know it? Does your compliance lead know which step involves a judgment call that currently sits inside one person's head and nowhere else? Does your data team know which inputs the workflow depends on that have never been formally documented?

When a cross-functional group sits down to redesign a workflow with AI, every person in that room carries a different version of how the work happens. The person doing the job sees it from the inside. The engineer sees it from the architecture. Legal sees it through risk. Leadership sees it through the reporting layer — which is often the furthest from reality of all.

None of those versions is wrong. None of them is complete. And you cannot introduce AI into a workflow that five different people understand five different ways and expect it to behave predictably.

The step most organisations skip

It is also the step that determines whether AI actually changes how work happens.

Before you decide what AI should do in a workflow, you need to know what that workflow actually is. Not what it's supposed to be. Not what it looks like in documentation written three years ago. What it actually is — today, as performed by the employees doing the work, with all its shortcuts and exceptions and informal dependencies and steps that only work because one person knows something nobody else does.

When a cross-functional team sits down to map a workflow together, something consistent happens: the room is surprised.

- Steps that belong to nobody.

- Handoffs that only function because of one person's tribal knowledge.

- Decisions made on instinct because the logic was never written down.

- Bottlenecks that everyone accepted as permanent, when they're actually artifacts of a system decision made years ago that nobody revisited.

The map makes all of that visible. Only once the team has drawn reality do they redesign it — removing what's broken, simplifying what's unnecessarily complex, and only then identifying where AI creates genuine leverage.

That sequence — understand the work, redesign the work, then introduce AI — is what separates AI initiatives that produce operational change from AI initiatives that produce interesting pilots.

You cannot redesign what you haven't drawn.

The AI Workflow Sprint: built around the right sequence


The AI Workflow Sprint is a 4-day workshop for redesigning, building, and validating employee workflows around AI.

It brings together the people who understand the work with the people who understand AI — and gives them a structured process to answer one question: how should we operate differently because of AI?

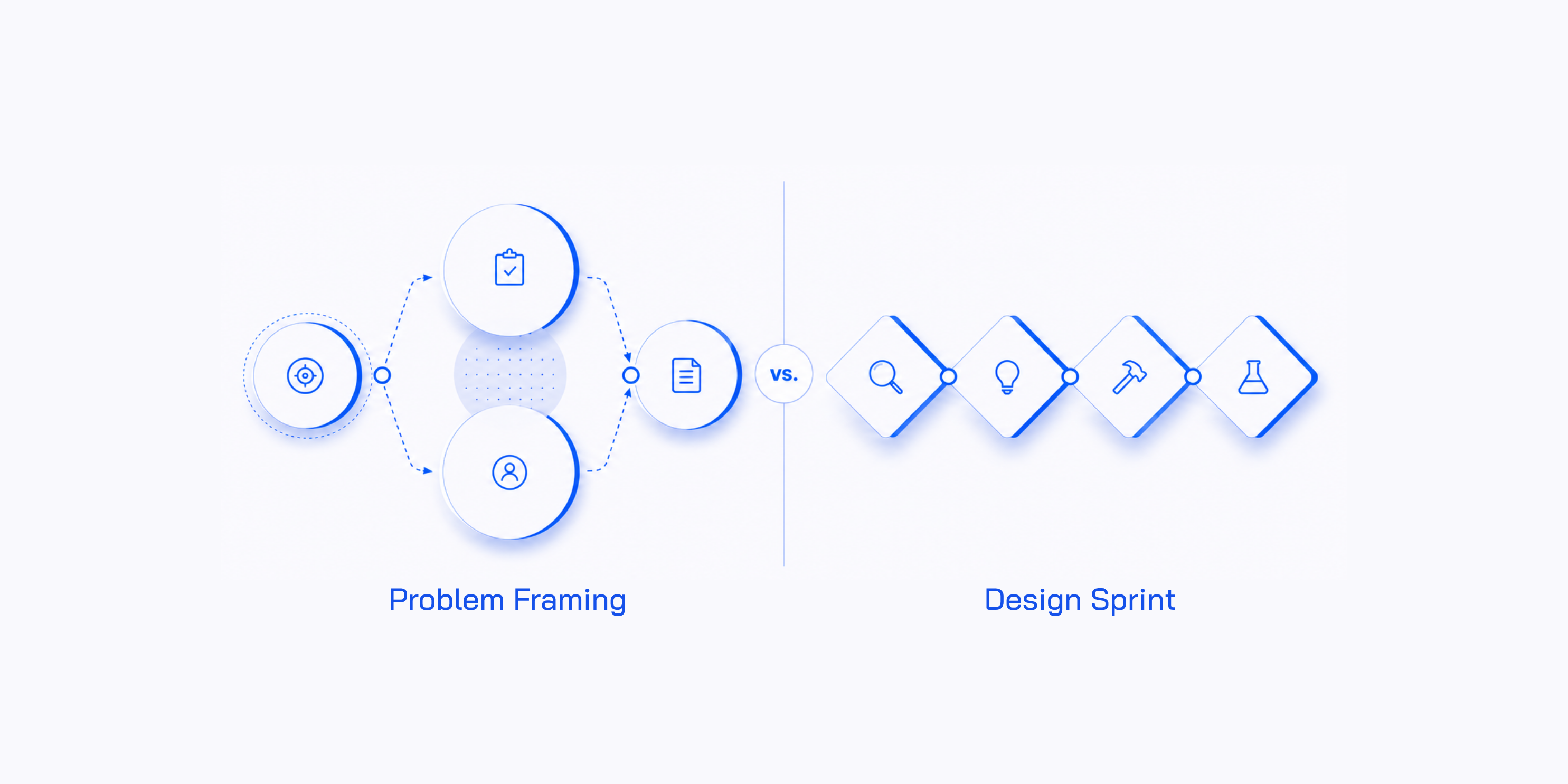

Before it begins, one thing has to exist: a validated use case — a specific workflow, a specific employee segment, and a business goal the initiative connects to. If that doesn't exist yet, an AI Problem Framing workshop produces it in a day. The sprint takes that decision and turns it into something testable.

Day 1 starts with the employee, not the technology. The cross-functional team — called the AI Discovery Pod — builds a shared picture of who they're designing for: their responsibilities, their pressures, where they struggle, what good performance looks like. Then they map the current workflow step by step: what the employee actually does at each stage, which systems they use, where the process breaks down, and where time and cost are being lost. This is the as-is map — not the intended workflow, but the real one. Every team that has done this has been surprised by what they found.

Only once the team has mapped reality do they redesign it. They identify where AI could assist, automate, or augment each step — and what should remain human-owned. The day ends with a single Sprint Focus: the one AI opportunity the entire build and test phase will be designed around.

Day 2 defines success before anyone builds anything. What does this initiative look like at full adoption in twelve months? What three metrics will prove it's working? What risks, across data, technology, compliance, adoption, and economics, could prevent it from working at scale? Then the team designs the solution: how the employee and the AI agent interact, what the AI produces at each step, and what decision the human makes. The day ends with a complete blueprint for what will be built.

Day 3 is the build. A smaller AI Build Trio (an engineer who builds the agent logic, a designer who builds the interface, and a subject matter expert who validates that the AI outputs are actually accurate) turns the blueprint into a working AI Agent MVP. It is not production-ready, but it is realistic enough that real employees can interact with it honestly.

Day 4 is where assumptions meet reality. Five structured interviews with employees who perform this workflow every day. Three questions anchor every session: do they understand what the AI is showing them? Do they trust it enough to act on it? Does the redesigned workflow actually improve their work? The findings are synthesised and presented to the Decider, who makes one call based on interview evidence — not internal opinion.

- Scale. The evidence supports moving forward. Assign engineering resources. Set the first production milestone.

- Iterate. The concept has merit but something specific failed. Redesign that element and test again.

- Stop. The assumption was wrong, or the barrier is too significant. Do not proceed.

A Stop decision is not a failure. It is the process doing exactly what it was designed to do: surface the truth about a use case before the organisation commits months of engineering resources to building it. Every idea stopped after four structured days is a project that never needed to be explained to the board.

Self-driving cars work, but only in cities that have been made legible to them. The technology didn't wait for the environment. The environment had to be built for the technology first.

AI won't wait for your workflows either. It will be introduced — it already is being introduced — into processes that were designed for humans, that nobody has mapped, that five different people in the same organisation understand five different ways. And it will underperform, not because the models are weak, but because the environment it's operating in was never prepared for it.

The organisations that will have something real to show for their AI investment are the ones who do that preparation. Who map the workflow before the AI arrives. Who redesign the layout before the technology is introduced. Who make their processes legible — to each other, and to the systems they're about to build inside them.